ETL with DuckDB and Apache Airflow® for travel analytics

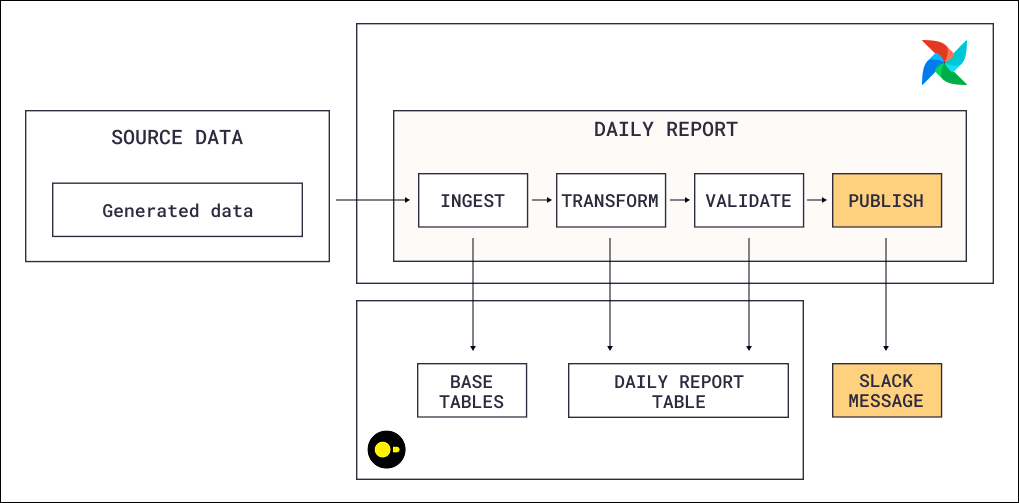

This reference architecture shows how to build an ETL pipeline for a fictional interplanetary travel company. It ingests booking data, transforms it into a daily revenue report per destination in DuckDB, and validates the output with data quality checks before publishing it as an asset for downstream consumers. A single Apache Airflow® Dag orchestrates the entire flow: ingest, transform, validate, publish.

Using a local DuckDB file makes this architecture a lightweight way to get started with an ETL pipeline without provisioning any external infrastructure. Because the pipeline uses Airflow’s common SQL operators (SQLExecuteQueryOperator, SQLColumnCheckOperator), it is warehouse-agnostic by design. These operators rely on DB-API 2.0, Python’s standard database interface, so the same Dag works with Snowflake, BigQuery, Postgres, or any other compliant database by changing only the Airflow connection. For production workloads, replace the local DuckDB file with a cloud-hosted option like MotherDuck or swap DuckDB for a traditional data warehouse entirely, without changing the Dag logic.
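To illustrate why relying on DB-API 2.0 keeps the logic portable, here is a minimal sketch that touches the database only through standard DB-API calls (`cursor`, `execute`, `fetchall`). It uses Python's built-in `sqlite3` module as a stand-in for DuckDB or a warehouse driver. The `bookings` table, its columns, and the `revenue_by_planet` function are hypothetical names chosen for illustration and are not part of the reference pipeline.

```python
import sqlite3


def revenue_by_planet(conn):
    """Sum net fares per planet using only DB-API 2.0 calls.

    `conn` can be any DB-API 2.0 connection; nothing here is sqlite-specific.
    """
    cur = conn.cursor()
    cur.execute(
        "SELECT planet_name, SUM(net_fare) FROM bookings "
        "GROUP BY planet_name ORDER BY planet_name"
    )
    rows = cur.fetchall()
    cur.close()
    return rows


# sqlite3 is only a stand-in driver for this sketch.
conn = sqlite3.connect(":memory:")
setup = conn.cursor()
setup.execute("CREATE TABLE bookings (planet_name TEXT, net_fare REAL)")
setup.executemany(
    "INSERT INTO bookings VALUES (?, ?)",
    [("Mars", 100.0), ("Venus", 50.0), ("Mars", 25.0)],
)
conn.commit()

report = revenue_by_planet(conn)
print(report)
```

In Airflow the connection is resolved from the configured connection ID rather than created in code, which is why switching databases does not require touching the Dag logic. Note that placeholder syntax (`?` here) can differ between drivers.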

A single Dag runs on a @daily schedule and executes four tasks in sequence:

1. Ingest: A SQLExecuteQueryOperator runs a Jinja-templated SQL file that generates booking and payment records. Each run produces a configurable number of bookings (params.n_bookings), each with a random customer, route, passenger count, and optional promo code discount. The fare calculation happens inline in SQL: passengers x base_fare x planet_multiplier x (1 - discount_pct).
2. Transform: A SQLExecuteQueryOperator runs an aggregation query that joins bookings with routes, destinations, payments, and promo codes. It groups the data by report date and destination, classifying each trip as active or completed and summing passengers, gross fares, discounts, net fares, and paid amounts. The result is upserted into a daily_planet_report table using ON CONFLICT DO UPDATE, so re-runs for the same date overwrite rather than duplicate data.
3. Validate: A SQLColumnCheckOperator runs data quality checks on the report table before any downstream work proceeds. It validates that planet_name has no nulls and at least three distinct values, and that total_passengers has no nulls and a minimum value of one. If any check fails, the task fails and the asset is not published.
4. Publish: The final task publishes the daily_report asset. Any downstream Dag that schedules on this asset (for example, a Dag that formats the report for a dashboard or sends a Slack summary) only triggers when fresh, validated data is available.

Data flows in a clear sequence within the single Dag: raw bookings and payments are generated, aggregated into a daily report per destination, validated against quality constraints, and then published as an asset that signals readiness to downstream consumers.
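The fare formula, the idempotent upsert, and the column checks described above can be sketched outside Airflow. This is a simplified illustration, not the pipeline's actual SQL: it uses Python's built-in `sqlite3` module as a stand-in for DuckDB (both accept the `ON CONFLICT ... DO UPDATE` form shown here), and the reduced column set, the `net_fare_total` column, and the sample rows are assumptions made for the example.

```python
import sqlite3


def net_fare(passengers, base_fare, planet_multiplier, discount_pct):
    # passengers x base_fare x planet_multiplier x (1 - discount_pct)
    return passengers * base_fare * planet_multiplier * (1 - discount_pct)


conn = sqlite3.connect(":memory:")  # stand-in for the local DuckDB file
cur = conn.cursor()
cur.execute(
    """
    CREATE TABLE daily_planet_report (
        report_date TEXT,
        planet_name TEXT,
        total_passengers INTEGER,
        net_fare_total REAL,
        PRIMARY KEY (report_date, planet_name)
    )
    """
)

# The upsert needs a unique key to detect conflicts on.
UPSERT = """
INSERT INTO daily_planet_report
    (report_date, planet_name, total_passengers, net_fare_total)
VALUES (:report_date, :planet_name, :total_passengers, :net_fare_total)
ON CONFLICT (report_date, planet_name) DO UPDATE SET
    total_passengers = excluded.total_passengers,
    net_fare_total = excluded.net_fare_total
"""

# Hypothetical sample data for one report date.
rows = [
    {"report_date": "2025-01-01", "planet_name": "Mars",
     "total_passengers": 2, "net_fare_total": net_fare(2, 100.0, 1.5, 0.25)},
    {"report_date": "2025-01-01", "planet_name": "Venus",
     "total_passengers": 1, "net_fare_total": net_fare(1, 100.0, 2.0, 0.0)},
    {"report_date": "2025-01-01", "planet_name": "Titan",
     "total_passengers": 4, "net_fare_total": net_fare(4, 100.0, 3.0, 0.5)},
]

cur.executemany(UPSERT, rows)  # first run

# Simulated re-run for the same date with a changed Mars figure.
rows[0]["total_passengers"] = 3
rows[0]["net_fare_total"] = net_fare(3, 100.0, 1.5, 0.25)
cur.executemany(UPSERT, rows)
conn.commit()

cur.execute("SELECT COUNT(*) FROM daily_planet_report")
row_count = cur.fetchone()[0]  # still one row per (date, planet)

cur.execute(
    "SELECT total_passengers FROM daily_planet_report WHERE planet_name = 'Mars'"
)
mars_passengers = cur.fetchone()[0]  # overwritten by the re-run

# The same conditions the validation task enforces, written as one query.
cur.execute(
    """
    SELECT
        SUM(CASE WHEN planet_name IS NULL THEN 1 ELSE 0 END),
        COUNT(DISTINCT planet_name),
        SUM(CASE WHEN total_passengers IS NULL THEN 1 ELSE 0 END),
        MIN(total_passengers)
    FROM daily_planet_report
    """
)
null_planets, distinct_planets, null_passengers, min_passengers = cur.fetchone()
checks_pass = (
    null_planets == 0
    and distinct_planets >= 3
    and null_passengers == 0
    and min_passengers >= 1
)
print(row_count, mars_passengers, checks_pass)
```

In the Dag itself, the SQLColumnCheckOperator generates and evaluates the check queries, so only the thresholds need to be declared on the task.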

This architecture uses the following Airflow features:

- Assets: The final task publishes the daily_report asset after successful validation. Downstream Dags schedule themselves on this asset, so they only trigger when fresh, quality-checked data is available. This decouples producers from consumers without hard-coded cross-Dag dependencies.
- Data quality checks: SQLColumnCheckOperator validates the report table before the asset is published. Checks include non-null constraints on destination names, distinct value thresholds (at least three destinations), and minimum passenger counts. Failed checks block the asset update, which prevents downstream Dags from triggering on bad data. See "Run data quality checks using SQL check operators" for detailed usage of all available SQL check operators.
- Jinja templating and bound parameters: The ingestion step uses params with Jinja loops and conditionals to control the SQL structure itself (how many bookings to generate per run). The transformation step uses parameters to pass the execution date as a database-level bound parameter ($reportDate), which preserves type safety and protects against SQL injection. This separation (Jinja for structure, parameters for values) is a best practice for any SQL-heavy pipeline.
- SQL files: SQL queries are stored in the include/sql folder and referenced by each task via template_searchpath. The Dag file contains only orchestration logic (task definitions, dependencies, parameters), while the business logic lives entirely in SQL files. This separation makes the SQL independently testable and reusable across Dags.

The SQLExecuteQueryOperator supports two ways to pass values into SQL, and this architecture uses both:

- params: Used for Jinja template rendering. Values are accessed in SQL files via {{ params.param_name }} and rendered as strings by default. Use params when the SQL structure itself needs to change, such as looping to generate a variable number of INSERT statements or conditionally including SQL clauses. Note that params are not templated themselves.
- parameters: Used for database-level parameterized queries. Values are passed directly to the database driver using syntax like $param_name or %(param_name)s, preserving native Python types (integers, floats, booleans). Use parameters when passing values into a fixed SQL structure, especially user-provided values, since the database driver handles escaping and type safety. Note that parameters are templated, so you can use Airflow macros like {{ ds }} in their values.

Both approaches enable dynamic SQL, but they operate at different stages of query processing. Use params for structural flexibility (Jinja) and parameters for safe value binding (database driver).

Tasks that write to the local DuckDB file use max_active_tis_per_dagrun=1 to avoid write conflicts. For production workloads with multiple concurrent writers, consider MotherDuck (managed DuckDB) or a traditional cloud warehouse.

To build your own ETL pipeline with DuckDB, Snowflake, or any other supported SQL database, explore the individual Learn guides linked in the Airflow features section for detailed implementation guidance on each pattern. Astronomer recommends deploying Airflow pipelines using a free trial of Astro.