The open-source Blueprint package lets data engineers define reusable Dag building blocks called blueprints in Python. Each blueprint wraps an Airflow task group containing one or more Airflow operators, decorators, or nested task groups into a configurable template that other team members can use without needing to write Airflow code.

Team members who don’t know Airflow can create Dags by chaining blueprints together, using either YAML or the no-code interface in the Astro IDE.

In this tutorial, you’ll learn how to create new blueprints for your team from scratch.

To get the most out of this tutorial, you should have an understanding of:

Create a new Astro project. Delete the dags/example_astronauts.py file.

Add the blueprint package to your requirements.txt file. Make sure to pin the latest version.

A blueprint template is a Python class that inherits from the Blueprint class and defines a render() method. The render() method returns an Airflow TaskGroup or a single operator.

In your dags folder, create a subdirectory called templates containing a single file, math_etl.py, and add the following scaffolding code.

The MyMathETLConfig class contains the definition of each configuration Field that is available to the end user using the template in a Dag.

The template class MyMathETLBlueprint inherits from Blueprint[MyMathETLConfig], which ties the blueprint to that configuration model. The class’s render() method returns a TaskGroup that contains the tasks to be executed when the blueprint is used in a Dag.
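The link between a configuration model and its template can be pictured with a small stand-in that uses only the standard library. Nothing below is the Blueprint package's own code: ToyBlueprint, ToyConfig, and ToyMathETL are hypothetical placeholders for Blueprint, MyMathETLConfig, and MyMathETLBlueprint, and render() returns a list of task labels where a real template would return a TaskGroup.

```python
from dataclasses import dataclass
from typing import Generic, TypeVar

ConfigT = TypeVar("ConfigT")


class ToyBlueprint(Generic[ConfigT]):
    """Hypothetical stand-in for a blueprint base class (not the real one)."""

    def render(self, config: ConfigT) -> list[str]:
        raise NotImplementedError


@dataclass
class ToyConfig:
    """Hypothetical stand-in for MyMathETLConfig."""

    my_number: int
    my_name: str


class ToyMathETL(ToyBlueprint[ToyConfig]):
    """Parametrizing the base class ties this template to ToyConfig."""

    def render(self, config: ToyConfig) -> list[str]:
        # A real template would build and return a TaskGroup here; this
        # sketch returns task labels so it runs without Airflow installed.
        return [
            f"extract_{config.my_number}",
            "multiply",
            f"print_for_{config.my_name}",
        ]


tasks = ToyMathETL().render(ToyConfig(my_number=3, my_name="Ada"))
```

Parametrizing the base class with the config type is what lets a type checker confirm that render() only reads fields the config model actually defines.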

Fill the MyMathETLConfig class with two fields: my_number and my_name.

Add a TaskGroup to the render() method that contains three tasks: extract, multiply and print. Make sure the render() method returns the task group object. Note how you can access the configs provided by the end user inside the blueprint template by using config.my_number and config.my_name.
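Setting Airflow aside, the way configuration values flow through the three tasks can be sketched with plain functions. This is an illustration under assumptions, not the tutorial's template code: SketchConfig is a hypothetical stand-in for MyMathETLConfig, the multiplication factor is made up, and the print step returns its message instead of printing it.

```python
from dataclasses import dataclass


@dataclass
class SketchConfig:
    # Hypothetical stand-in for MyMathETLConfig with its two fields.
    my_number: int
    my_name: str


def extract(config: SketchConfig) -> int:
    # Assumed behavior: pass the configured number on to the next task.
    return config.my_number


def multiply(value: int, factor: int = 2) -> int:
    # The factor is an illustrative assumption, not taken from the tutorial.
    return value * factor


def format_result(config: SketchConfig, value: int) -> str:
    # Stands in for the "print" task; returns the message for easy checking.
    return f"Hello {config.my_name}, your result is {value}"


config = SketchConfig(my_number=21, my_name="Ada")
message = format_result(config, multiply(extract(config)))
```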

If your end users are using the Astro IDE to create blueprint Dags, you need to generate a JSON schema file that describes the blueprint configuration model. This file is used by the Astro IDE to validate the configuration fields and provide a visual interface for the end user to configure the blueprint.

Create a new folder at the root of your project called blueprint and create a subfolder called generated-schemas.

Run the following command to generate the blueprint schema JSON file for your template.

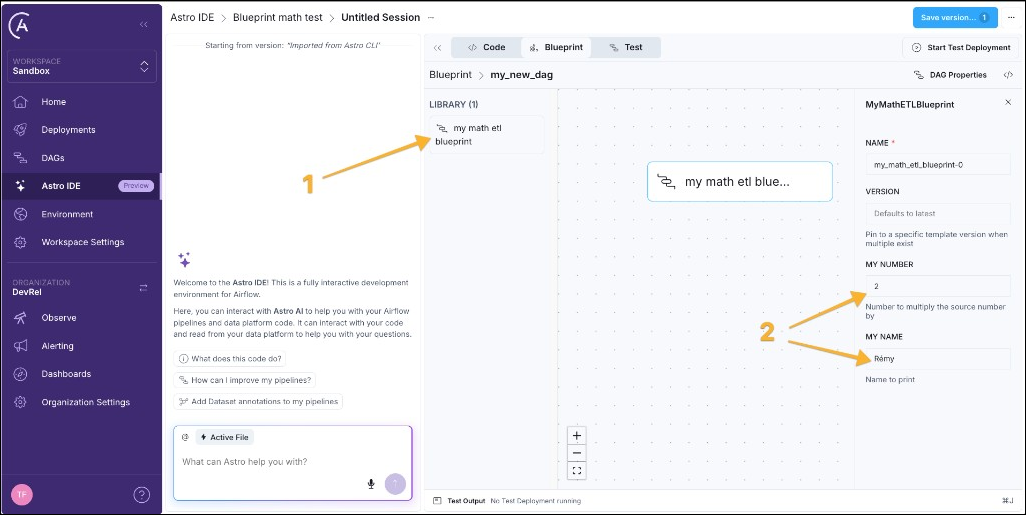

Once the schema file is present in the blueprint/generated-schemas directory, importing this Astro project into the Astro IDE will automatically generate an entry in the Library of the blueprint interface, for users to build Dags using drag-and-drop. Users can drag the blueprint node (1) to the canvas and configure all input fields in the form to the right (2).

When you create a Dag using blueprint in the Astro IDE, the Astro IDE automatically creates a YAML file for the Dag. This YAML file references the blueprint using the blueprint key. To make Airflow aware of this Dag, you need to add the Dag loader file.

Create a new file in the dags folder called loader.py and add the following code. Note that for Airflow to parse the file, its contents must include both of the strings airflow and dag (case-insensitive). You can turn this check off by setting the [core].dag_discovery_safe_mode configuration to False.
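Airflow's configuration reference describes safe mode as scanning only files that contain both dag and airflow, case-insensitively. The function below is a simplified approximation of that check for reasoning about whether a loader file would be parsed. The name passes_safe_mode is hypothetical, and Airflow's own heuristic works on file bytes and also handles zip archives.

```python
def passes_safe_mode(source: str) -> bool:
    """Approximate the safe-mode filter: both substrings must be present."""
    lowered = source.lower()
    return "airflow" in lowered and "dag" in lowered


# A comment is enough to satisfy the filter, because it looks at raw text.
with_marker = "# Airflow Dag loader\nprint('load')\n"
without_marker = "print('load')\n"

results = (passes_safe_mode(with_marker), passes_safe_mode(without_marker))
```

Because the check is textual, a short comment naming both words is a common way to make a small loader file discoverable without disabling safe mode.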

This function call discovers all *.dag.yaml files in the dags folder, resolves the referenced blueprints, validates their configurations, and creates Dag objects that Airflow can pick up.
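The discovery step can be thought of as a filename filter. The sketch below applies the default *.dag.yaml pattern to a few example names. The helper is_picked_up is hypothetical, and how the real loader walks the folder (for example, whether it searches subfolders) is not covered here.

```python
from fnmatch import fnmatch

DEFAULT_PATTERN = "*.dag.yaml"  # default pattern described in the text


def is_picked_up(filename: str, pattern: str = DEFAULT_PATTERN) -> bool:
    """Return True when the filename matches the loader's default pattern."""
    return fnmatch(filename, pattern)


candidates = ["my_math_etl.dag.yaml", "my_math_etl.yaml", "loader.py"]
matched = [name for name in candidates if is_picked_up(name)]
```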

Of course, you can also directly use blueprints in YAML without using the Astro IDE.

Create a new YAML file in the dags folder called my_math_etl.dag.yaml and add the following code. Note that the filename needs to end with .dag.yaml for the blueprint loader to pick it up by default.

You can add as many blueprints within the steps key as you want. Dependencies are set using the depends_on key.
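To see how depends_on entries translate into an execution order, the sketch below sorts a hand-written steps mapping with the standard library's graphlib. The step names, the blueprint value my_math_etl, and the mapping layout are assumptions made for illustration. The real loader hands dependencies to Airflow and does not compute an order this way.

```python
from graphlib import TopologicalSorter

# Hypothetical parsed form of the `steps` key; all names are made up.
steps = {
    "first_math": {"blueprint": "my_math_etl", "my_number": 2},
    "second_math": {
        "blueprint": "my_math_etl",
        "my_number": 5,
        "depends_on": ["first_math"],
    },
    "third_math": {
        "blueprint": "my_math_etl",
        "my_number": 7,
        "depends_on": ["second_math"],
    },
}

# Map each step to the set of steps it waits for.
graph = {name: set(cfg.get("depends_on", [])) for name, cfg in steps.items()}

# static_order() yields every step after all of its dependencies.
order = list(TopologicalSorter(graph).static_order())
```

A depends_on entry that forms a cycle would make TopologicalSorter raise graphlib.CycleError, which mirrors the fact that a Dag cannot contain circular dependencies.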

(Optional) You can test your blueprint Dag like any other Dag in a local Airflow environment. Start Airflow using astro dev start and run your Dag in the Airflow UI.

Every task generated by Blueprint includes two extra fields visible in the Rendered Template tab in the Airflow UI: blueprint_step_config (the resolved YAML configuration) and blueprint_step_code (the Python source of the blueprint class). You can use these fields to trace any task back to its configuration.

As your blueprints evolve, you might need to introduce breaking changes to a configuration schema. Blueprint supports versioning so existing Dag YAML files continue to work while new ones can use the updated schema. You apply the same pattern to MyMathETLBlueprint when you publish a MyMathETLBlueprintV2 (or later) class.

Each version is a separate Python class. The initial version uses a clean class name (implicitly version 1). Later versions add a V{N} suffix:
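The naming convention can be made concrete with a small parser that splits a class name into a base name and a version number. The helper split_version is hypothetical and only mirrors the rule stated above (no suffix means version 1). It does not reproduce how Blueprint itself registers versions.

```python
import re

# A trailing "V" followed by digits marks an explicit version.
_VERSION_SUFFIX = re.compile(r"^(?P<base>.+?)V(?P<version>\d+)$")


def split_version(class_name: str) -> tuple[str, int]:
    """Split a class name into (base name, version)."""
    match = _VERSION_SUFFIX.match(class_name)
    if match is None:
        # A clean class name is implicitly version 1.
        return class_name, 1
    return match.group("base"), int(match.group("version"))


v1 = split_version("MyMathETLBlueprint")
v2 = split_version("MyMathETLBlueprintV2")
```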

Create a new class named MyMathETLBlueprintV2 and make any changes to the contents that you want.

In your Dag YAML file, add the version key to the blueprint step.

Congratulations! You created a blueprint template and used it to create a Dag using YAML. You can now create blueprints for common data engineering patterns and provide them in an Astro project for your team members to build Dags without writing Python code.