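Airflow task groups are a tool to organize tasks into groups within your DAGs. Using task groups allows you to:

- Visually organize complicated DAGs in the Airflow UI.
- Apply default_args to sets of tasks, instead of at the DAG level using DAG parameters.
- Dynamically map over groups of tasks.
- Turn task patterns into modules that can be reused across DAGs or Airflow instances.

In this guide, you’ll learn how to create and use task groups in your DAGs. You can find many example DAGs using task groups on the Astronomer GitHub.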

To get the most out of this guide, you should have an understanding of:

Task groups are most often used to visually organize complicated DAGs. For example, you might use task groups:

There are two ways to define task groups in your DAGs:

- Use the TaskGroup class to create a task group context.
- Use the @task_group decorator on a Python function.

In most cases, it is a matter of personal preference which method you use. The only exception is when you want to dynamically map over a task group; this is possible only when using @task_group.

The following code shows how to instantiate a simple task group containing two sequential tasks. You can use dependency operators (<< and >>) both within and between task groups in the same way that you can with individual tasks.

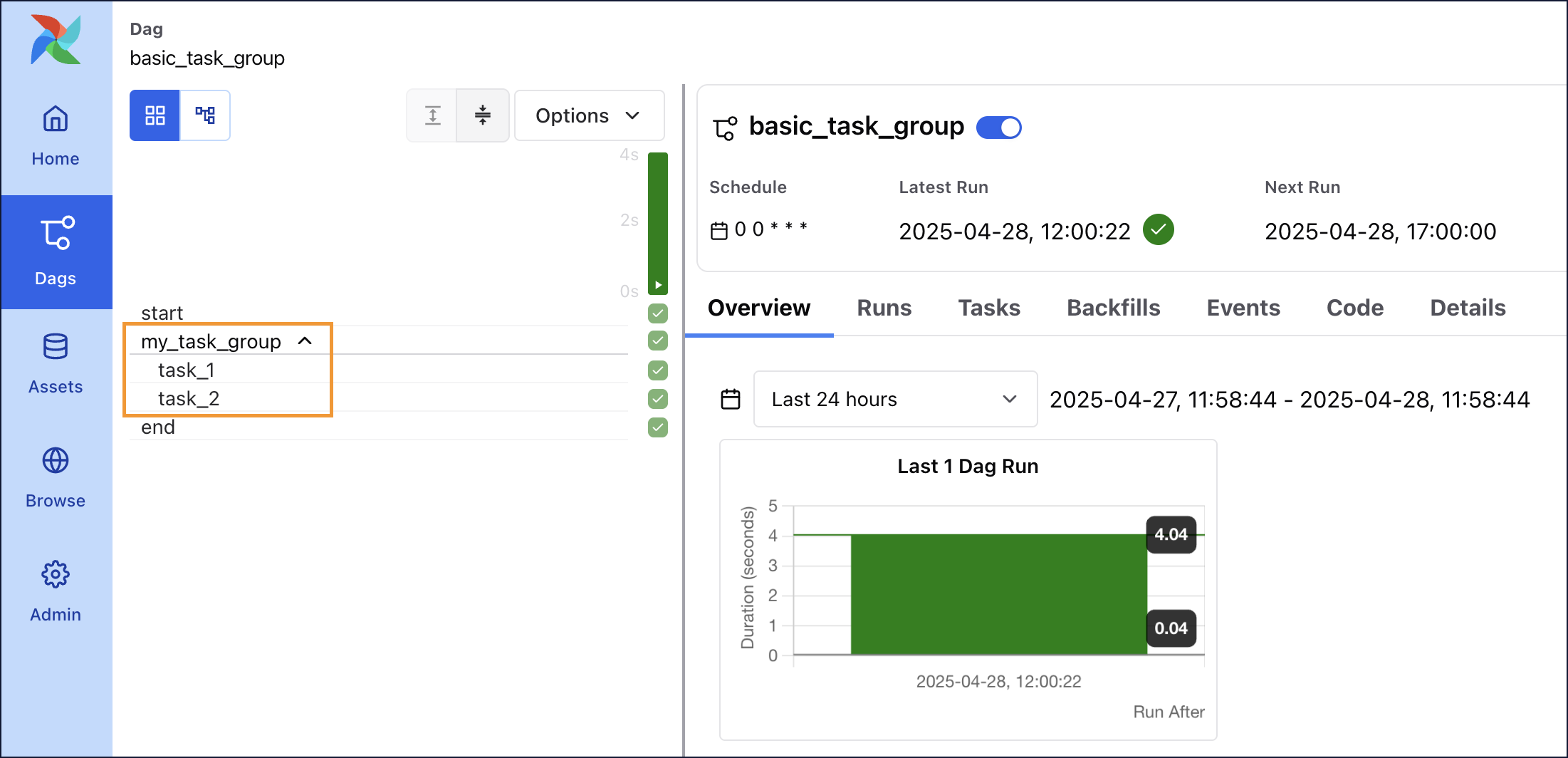

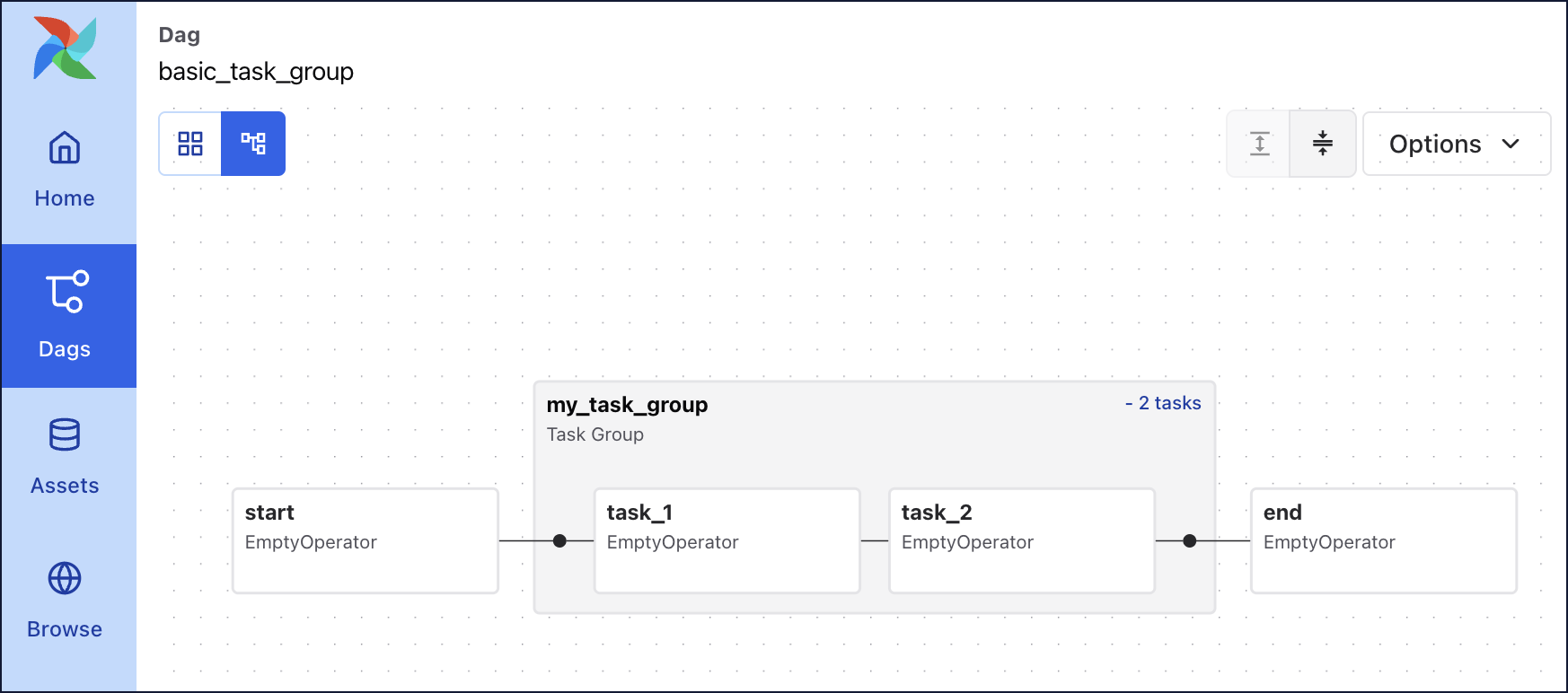

Task groups are shown in both the Grid view and the Graph view of your DAG.

You can use parameters to customize individual task groups. The two most important parameters are group_id, which determines the name of your task group, and default_args, which are passed to all tasks in the task group. The following examples show task groups with some commonly configured parameters:

A note on task_id in task groups: when a task is within a task group, its task_id becomes group_id.task_id. This ensures the task_id is unique across the DAG. It is important that you use this format when referring to specific tasks, for example when working with XComs or branching. You can disable this behavior by setting the task group parameter prefix_group_id=False.

For example, the task_1 task in the following DAG has a task_id of my_outer_task_group.my_inner_task_group.task_1.

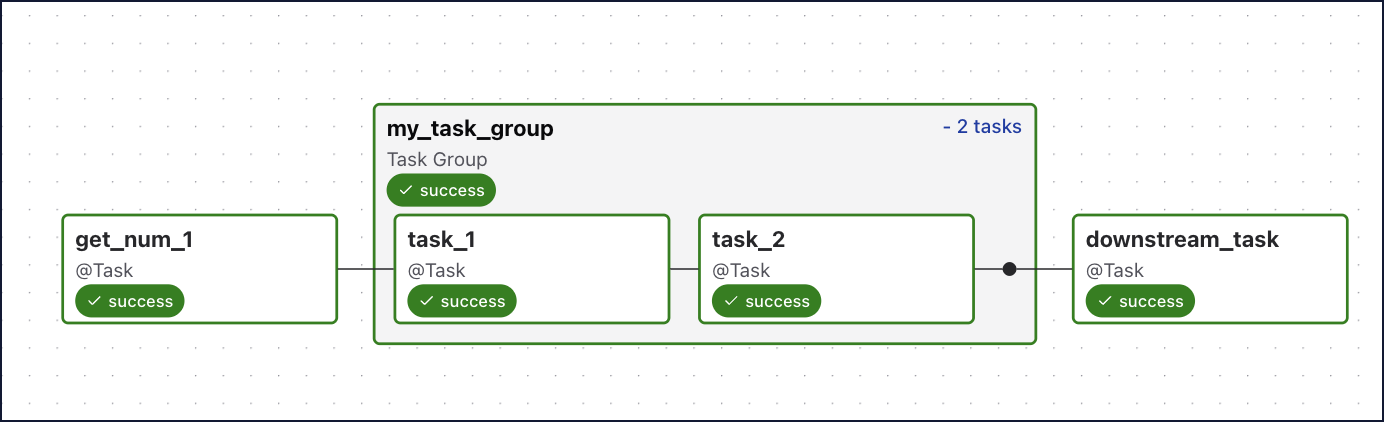

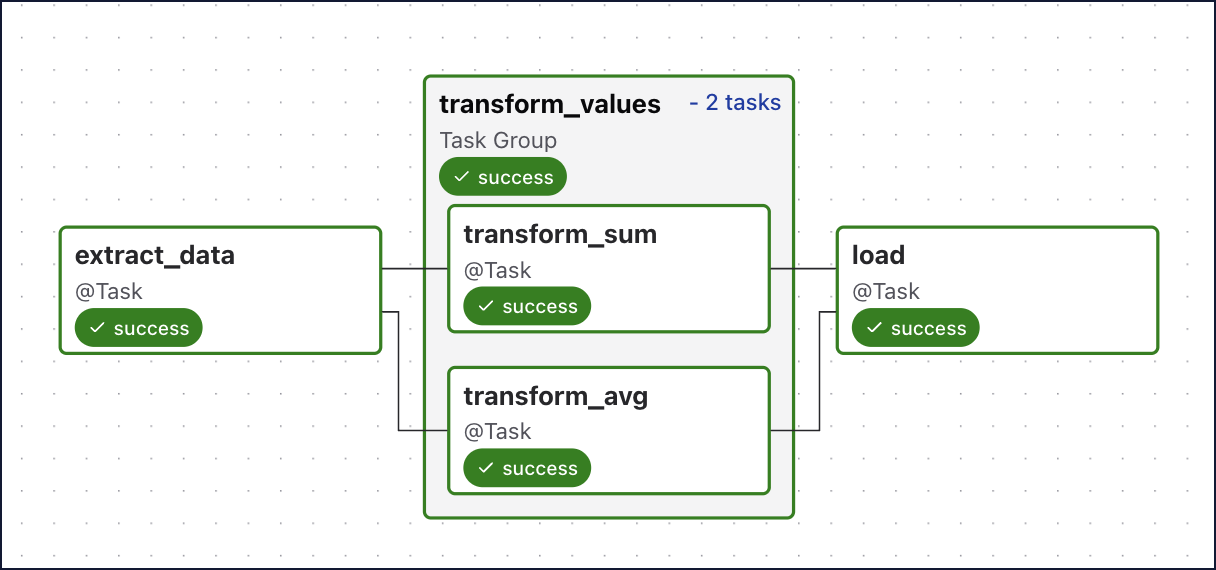

When you use the @task_group decorator, you can pass data through the task group just like with regular @task decorators:

The resulting DAG is shown in the following image:

There are a few things to consider when passing information into and out of task groups:

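- If downstream tasks need the output of tasks in the task group, the task group function must return that output, for example so it can be passed to a downstream load() task.
- If the output of the task group function is not used as an input by another task, Airflow cannot infer the dependency, so you must use dependency operators (<< or >>) to define downstream dependencies to the task group.

You can use dynamic task mapping with the @task_group decorator to dynamically map over task groups. The following DAG shows how you can dynamically map over a task group with different inputs for a given parameter.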

This DAG dynamically maps over the task group group1 with different inputs for the my_num parameter. Six mapped task group instances are created, one for each input. Within each mapped task group instance, two tasks run using that instance's value for my_num as an input. The pull_xcom() task downstream of the dynamically mapped task group shows how to access a specific XCom value from a list of mapped task group instances (map_indexes).

For more information on dynamic task mapping, including how to map over multiple parameters, see Dynamic Tasks.

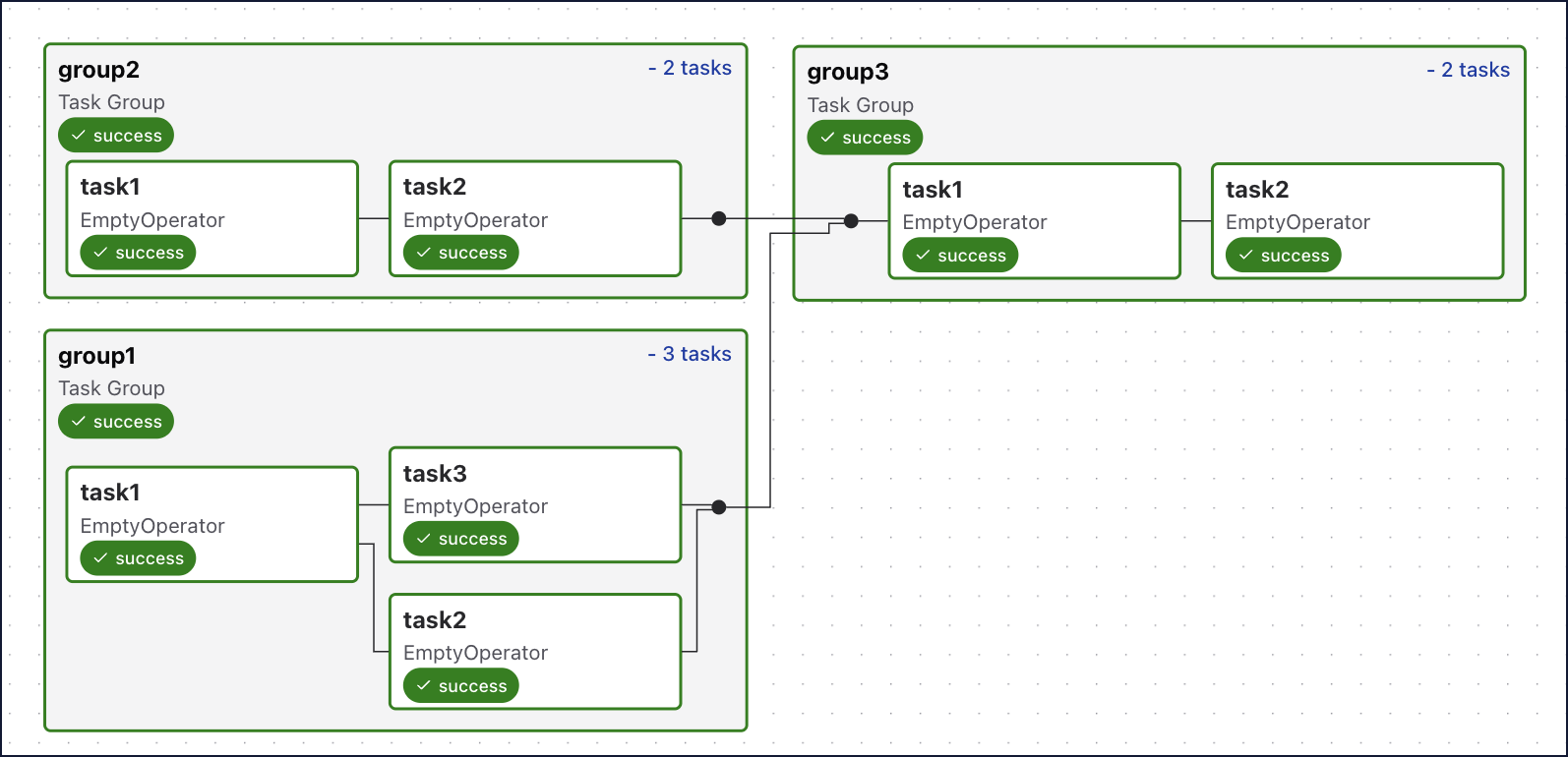

By default, task groups generated in a loop run in parallel, because the loop does not set any dependencies between them. If your task groups depend on elements of another task group, you’ll want to run them sequentially. For example, when loading tables with foreign keys, your primary table records need to exist before you can load your foreign table.

In the following example, the third task group generated in the loop has a foreign key constraint on both previously generated task groups (first and second iteration of the loop), so you’ll want to process it last. To do this, you’ll create an empty list and append your task group objects as they are generated. Using this list, you can reference the task groups and define their dependencies to each other:

The following image shows how these task groups appear in the Airflow UI:

This example also shows how to add an additional task to group1 based on its group_id. Even when you’re creating task groups in a loop to take advantage of patterns, you can still introduce variations to the pattern while avoiding code redundancies.

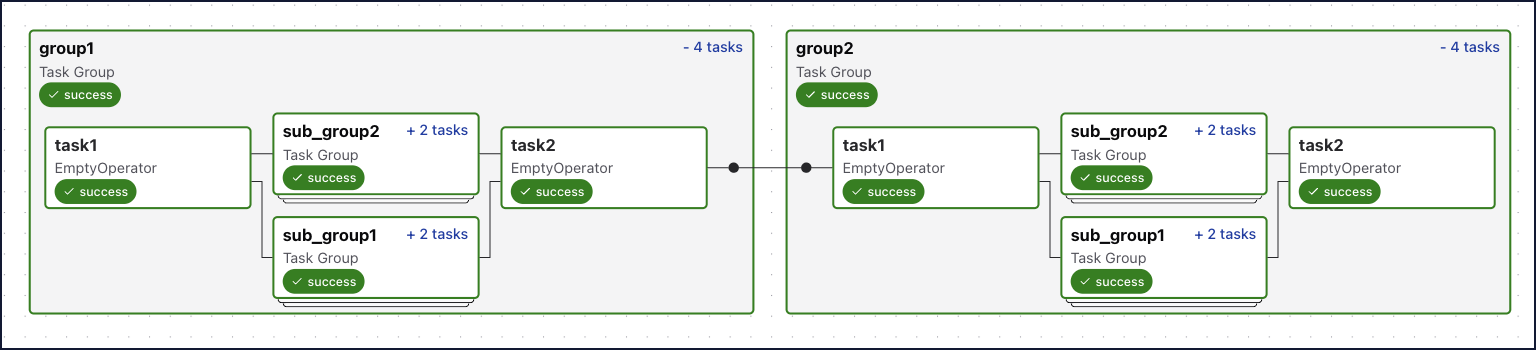

For additional complexity, you can nest task groups by defining a task group indented within another task group. There is no limit to how many levels of nesting you can have.

The following image shows the expanded view of the nested task groups in the Airflow UI:

If you use the same patterns of tasks in several DAGs or Airflow instances, it may be useful to create a custom task group class in its own module. To do so, inherit from the TaskGroup class and define your tasks within that custom class. You also need to use self to assign each task to the task group, for example by passing task_group=self when defining the task. Other than that, the task definitions are the same as if you were defining them in a DAG file.

In the DAG, you import your custom TaskGroup class and instantiate it with the values for your custom arguments:

The resulting image shows the custom templated task group which can now be reused in other DAGs with different inputs for num1 and num2.