Partitioned Dag runs and asset events in Apache Airflow®

Airflow 3.2 introduced the concept of partitioned Dag runs and partitioned asset events. A partitioned Dag run is a Dag run with a partition_key attached to it, and a partitioned asset event is an asset event that has a partition_key attached to it. Any string can be a partition key, with time-based partition keys being the most common. Partition keys can be used in tasks in a partitioned Dag run to partition data, for example in a SQL statement.

In this guide, you’ll learn:

- When to use partitioned Dag runs and asset events.

- How to create a partitioned Dag run.

- How to create a partitioned asset event.

- How to schedule a Dag based on partitioned asset events.

Assumed knowledge

To get the most out of this guide, you should have an understanding of:

- Airflow scheduling concepts. See Schedule Dags in Airflow.

- Basic asset-based scheduling. See Basic asset-based scheduling in Apache Airflow®.

When to use partitions

Situations in which you should consider using partitioned Dag runs and asset events are:

- When you want to process data from a specific time period in every Dag run. For example, if you have a Dag that runs once a day and should always process the data from the previous day.

- When you have a Dag that is scheduled based on asset events, within which you want to process data from a specific time period. You can use the

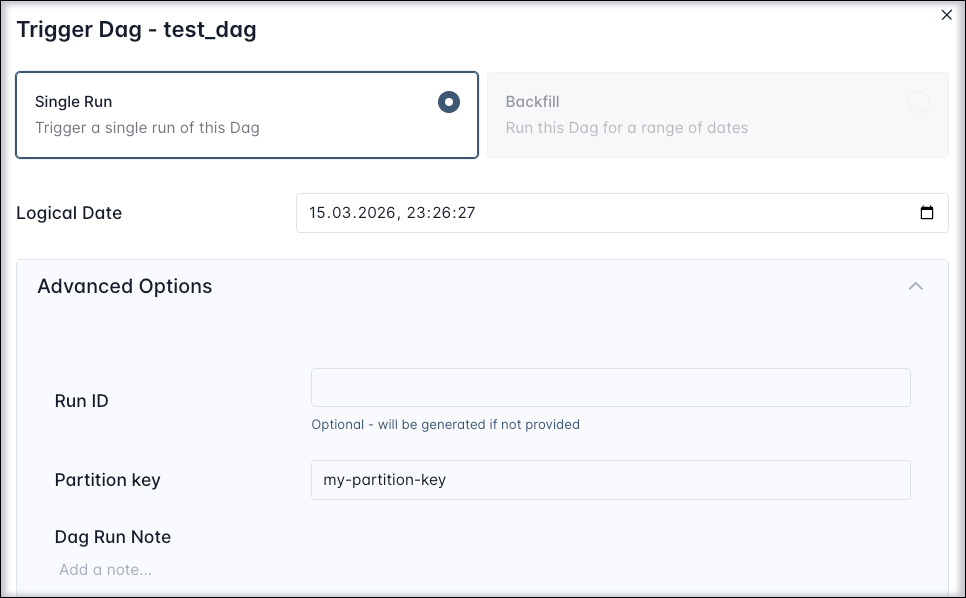

partition_keyto partition the data inside of the downstream Dag run. The partition_key propagates from the upstream Dag run to the downstream Dag run, and you can adjust its grain with partition key mappers. - When you want to process data for a specific segment in manual or API-triggered Dag runs. For example, if your Dag generates a report for a specific department, you can set a different

partition_keyfor each Dag run in the Trigger Dag run config or the API request body.

Create a partitioned Dag run

A partitioned Dag run is a Dag run with a partition_key attached to it. There are four ways to create a partitioned Dag run:

- By running a Dag manually in the Airflow UI and attaching a partition_key in the Trigger Dag run config.

- By running a Dag using the Airflow REST API and attaching a partition_key in the request body.

- By fulfilling the asset schedule condition of a Dag that uses the PartitionedAssetTimetable timetable.

- By using the CronPartitionTimetable timetable. Note that only Dag runs of the types scheduled and backfill are partitioned. Manual runs are not partitioned unless you provide a partition_key in the Trigger Dag run config.

You can access partition keys from within any task in a partitioned Dag run. See Accessing partition keys for more information.

CronPartitionTimetable

The CronPartitionTimetable is a timetable that creates partitioned Dag runs with an automatic partition_key attached that is based on the run_after timestamp of each scheduled and backfilled Dag run.

You can offset the partition key by providing the run_offset parameter to the CronPartitionTimetable instance. The offset is relative to the cron expression. For example, if your Dag runs once every hour, a run_offset of -12 offsets the partition key by 12 hours, so the Dag run with the run id 2026-03-16T09:00:00 has a partition key of 2026-03-15T21:00:00.
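The offset arithmetic can be illustrated with a minimal standalone sketch. It does not use Airflow, and the function name offset_partition_key and its interval argument are hypothetical. It assumes a fixed-length schedule interval, whereas the timetable itself works from the cron expression.

```python
from datetime import datetime, timedelta


def offset_partition_key(run_after: str, run_offset: int, interval: timedelta) -> str:
    """Shift a run_after timestamp by run_offset schedule intervals.

    Illustrative only: assumes every schedule interval has the same length.
    """
    shifted = datetime.fromisoformat(run_after) + run_offset * interval
    return shifted.isoformat()


# Hourly schedule with a run_offset of -12, as in the example above.
key = offset_partition_key("2026-03-16T09:00:00", -12, timedelta(hours=1))
print(key)  # 2026-03-15T21:00:00
```

A run_offset of 0 leaves the timestamp unchanged in this sketch, which corresponds to the default behavior of using the run_after timestamp as the partition key.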

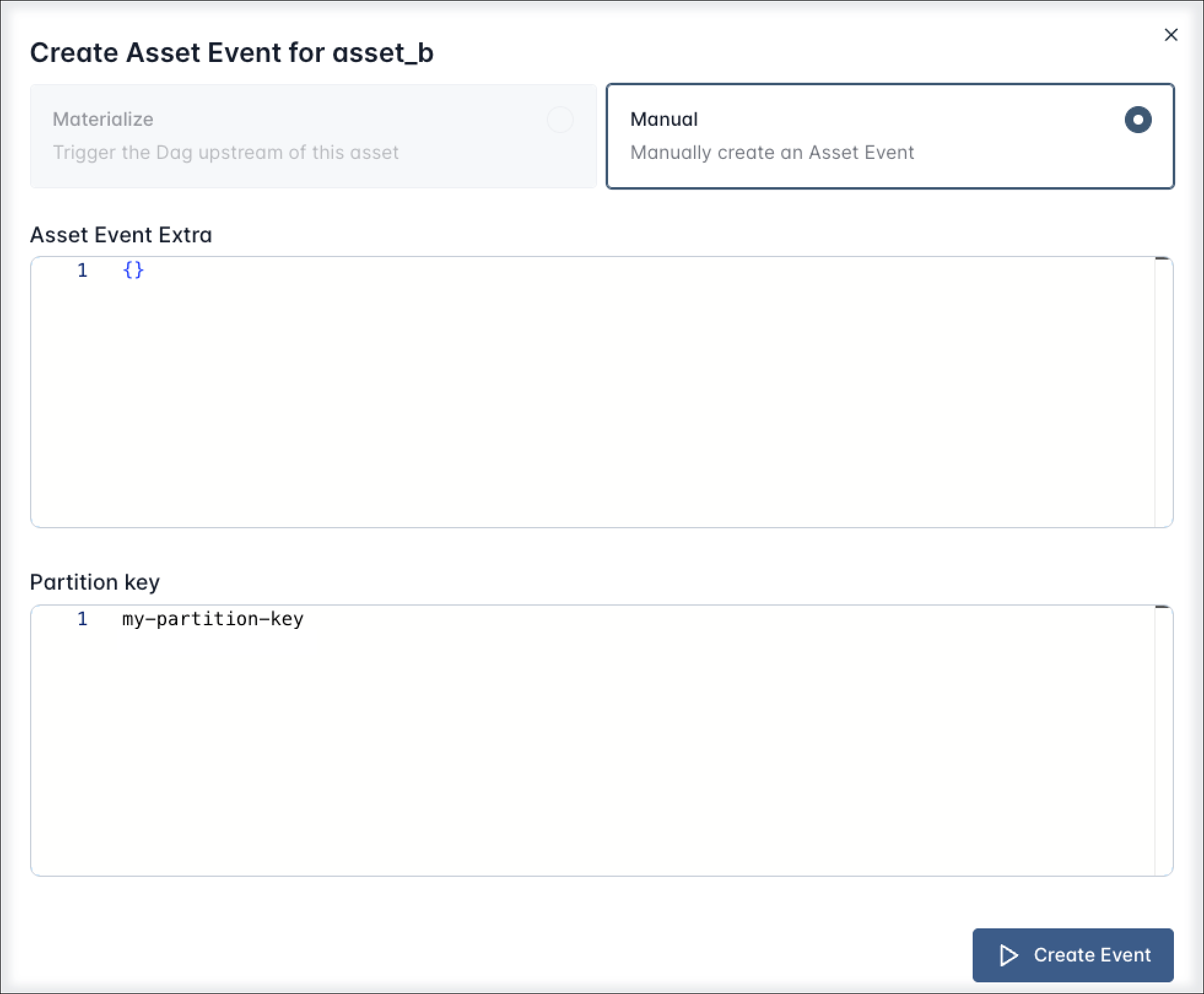

Create a partitioned asset event

A partitioned asset event is an asset event that has a partition_key attached to it. There are three ways to create a partitioned asset event:

- By updating an asset manually in the Airflow UI and providing a partition_key in the Asset Event creation dialog.

- By updating an asset using the Airflow REST API and providing a partition_key in the request body.

- By updating an asset using the outlets parameter of a task in a Dag that is scheduled using a CronPartitionTimetable timetable.

Partitioned asset events created by a task in a Dag using the CronPartitionTimetable timetable are intended for partition-aware downstream scheduling, and do not trigger non-partition-aware Dags.

The example below shows a Dag that is scheduled using a CronPartitionTimetable timetable. In every scheduled or backfilled run of this Dag, successful completion of the my_task_partitioned_upstream task will create a partitioned asset event for the my_partitioned_asset asset. The partition_key will be the run_after timestamp of the Dag run.

Schedule on a partitioned asset

To schedule a Dag on a partitioned asset, you set its schedule parameter to an instance of PartitionedAssetTimetable.

This Dag will run whenever the my_partitioned_asset is updated by a partitioned asset event. It will not run based on regular asset events produced for the my_partitioned_asset asset.

You can modify the grain of the partition key by providing a partition_key_mapper to the PartitionedAssetTimetable instance.

For example, to partition the data by day, you can use the StartOfDayMapper to normalize the partition key to the day in the format YYYY-MM-DD. See Partition key mappers for more information on the available partition key mappers.

Combined partitioned asset schedules

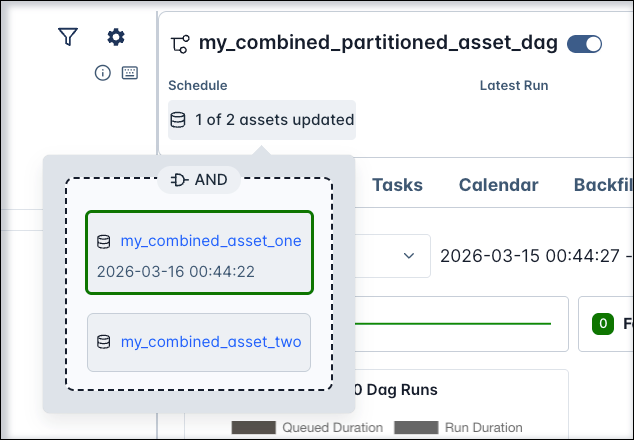

You can combine multiple assets in a single PartitionedAssetTimetable instance to create a composite asset schedule using the same logical expressions (AND (&) and OR (|)) as when creating a conditional asset schedule with regular assets.

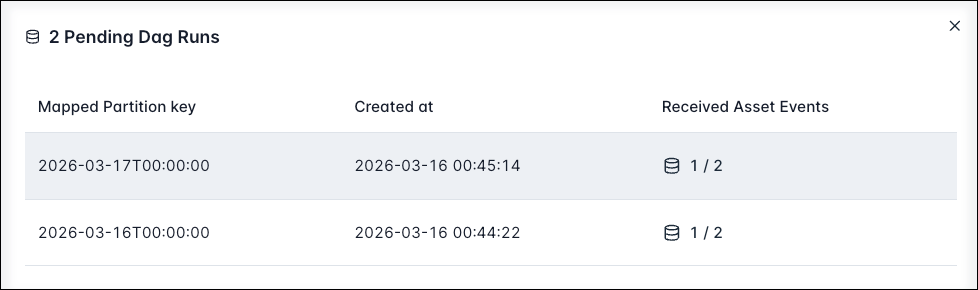

If one of the assets is updated with a partitioned asset event, a pending run of this Dag is created. You can view pending runs in the Airflow UI by clicking on the Dag's schedule.

If several assets have been updated with a partitioned asset event of different partition keys, several pending runs are created, each with a different partition key. Each pending run has a visualization of which assets have been updated for that specific partition key and which assets are still pending.

A run of this Dag is only triggered when both my_combined_asset_one and my_combined_asset_two are updated with a partitioned asset event sharing the same partition key. Note that queued partitioned asset events for other partition keys are not reset by this.
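The bookkeeping described above for an AND condition can be modeled with a small standalone sketch. This is not how Airflow implements pending runs. The PendingRunTracker class and its methods are hypothetical names used only to illustrate that events are grouped per partition key and that completing one key leaves the others untouched.

```python
from collections import defaultdict


class PendingRunTracker:
    """Toy model of pending runs for an AND schedule over several assets."""

    def __init__(self, required_assets):
        self.required = frozenset(required_assets)
        # Maps each partition key to the set of assets already updated for it.
        self.received = defaultdict(set)

    def record_event(self, asset_name, partition_key):
        """Record a partitioned asset event.

        Returns True if this event completes the condition for partition_key.
        """
        if asset_name not in self.required:
            return False
        self.received[partition_key].add(asset_name)
        if self.received[partition_key] == self.required:
            # Only this key is cleared; other partition keys stay pending.
            del self.received[partition_key]
            return True
        return False

    def pending(self):
        """Return the assets still missing for each pending partition key."""
        return {key: sorted(self.required - seen) for key, seen in self.received.items()}


tracker = PendingRunTracker(["my_combined_asset_one", "my_combined_asset_two"])
tracker.record_event("my_combined_asset_one", "2026-03-16")
tracker.record_event("my_combined_asset_one", "2026-03-17")
triggered = tracker.record_event("my_combined_asset_two", "2026-03-16")
print(triggered)          # True
print(tracker.pending())  # {'2026-03-17': ['my_combined_asset_two']}
```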

You can use different partition key mappers for each asset in the PartitionedAssetTimetable. See Partition key mappers.

Partition keys

Partition keys are strings attached to partitioned Dag runs and asset events. You can use them in tasks in a partitioned Dag run to partition data, for example in a SQL statement.

Accessing partition keys

The partition_key can be accessed inside any task in a partitioned Dag run from within the Airflow context or by using Jinja templating.

One of the most common use cases is to use the partition key in a SQL statement to partition the data used in a specific Dag run. For example, in a Dag that runs once a day, you can use the partition key to select the data for the previous day.
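As a rough illustration of this pattern, the sketch below filters a table by a day-grain partition key using a parameterized query. It runs against an in-memory SQLite database instead of a real warehouse, and the sales table, its columns, and the select_partition function are hypothetical. In a real task, the partition key would come from the Airflow context or a Jinja template rather than a hard-coded string.

```python
import sqlite3


def select_partition(conn, partition_key):
    """Return the rows whose event_date matches the partition key."""
    cursor = conn.execute(
        "SELECT id, amount FROM sales WHERE event_date = ? ORDER BY id",
        (partition_key,),  # bound as a parameter, not formatted into the SQL string
    )
    return cursor.fetchall()


# Hypothetical sample data spanning two days.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (id INTEGER, event_date TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [(1, "2026-03-15", 10.0), (2, "2026-03-16", 20.0), (3, "2026-03-16", 5.5)],
)

rows = select_partition(conn, "2026-03-16")
print(rows)  # [(2, 20.0), (3, 5.5)]
```

Binding the key as a query parameter keeps the statement safe even when the partition key is an arbitrary string supplied through the UI or the API.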

Partition key mappers

Partition key mappers are used to modify the partition key of a Dag run. You can use them to change the grain of the partition key, to map composite keys segment by segment, or to validate that keys are in a fixed allow-list. The following partition key mappers are available:

- IdentityMapper: keeps keys unchanged. Default mapper.

- Temporal mappers change the grain of the partition key:

  - StartOfHourMapper: normalizes time keys to the hour in the format YYYY-MM-DDTHH (2026-03-16T09:37:51 -> 2026-03-16T09).

  - StartOfDayMapper: normalizes time keys to the day in the format YYYY-MM-DD (2026-03-16T09:37:51 -> 2026-03-16).

  - StartOfWeekMapper: normalizes time keys to the week in the format YYYY-MM-DD (W%V) (2026-03-16T09:37:51 -> 2026-03-16 (W12)).

  - StartOfMonthMapper: normalizes time keys to the month in the format YYYY-MM (2026-03-16T09:37:51 -> 2026-03).

  - StartOfQuarterMapper: normalizes time keys to the quarter in the format YYYY-Q<n> (2026-03-16T09:37:51 -> 2026-Q1). The quarters are based on the calendar year: Q1 starts in January, Q2 in April, Q3 in July, and Q4 in October.

  - StartOfYearMapper: normalizes time keys to the year in the format YYYY (2026-03-16T09:37:51 -> 2026).

- ProductMapper: maps composite keys segment by segment, applying one mapper per segment and then rejoining the mapped segments. For example, with the key Finance|2026-03-16T09:00:00, ProductMapper(IdentityMapper(), StartOfDayMapper()) produces Finance|2026-03-16. See Composite partition keys.

- AllowedKeyMapper: validates that keys are in a fixed allow-list and passes the key through unchanged if valid. For example, AllowedKeyMapper(["Marketing", "Finance", "Sales"]) accepts only those department keys and rejects all others.
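To make the temporal output formats concrete, the following standalone sketch reproduces the documented mappings with plain Python functions. These functions are illustrative stand-ins, not the Airflow mapper classes. The sketch assumes that weeks start on Monday, which matches the ISO week number implied by the W%V format.

```python
from datetime import datetime, timedelta


def start_of_hour(key: str) -> str:
    return datetime.fromisoformat(key).strftime("%Y-%m-%dT%H")


def start_of_day(key: str) -> str:
    return datetime.fromisoformat(key).strftime("%Y-%m-%d")


def start_of_week(key: str) -> str:
    dt = datetime.fromisoformat(key)
    # Assumption: the week starts on Monday (ISO 8601).
    monday = dt - timedelta(days=dt.weekday())
    iso_week = monday.isocalendar()[1]
    return f"{monday:%Y-%m-%d} (W{iso_week:02d})"


def start_of_month(key: str) -> str:
    return datetime.fromisoformat(key).strftime("%Y-%m")


def start_of_quarter(key: str) -> str:
    dt = datetime.fromisoformat(key)
    # Calendar quarters: Q1 is January to March, Q2 is April to June, and so on.
    return f"{dt.year}-Q{(dt.month - 1) // 3 + 1}"


def start_of_year(key: str) -> str:
    return datetime.fromisoformat(key).strftime("%Y")


key = "2026-03-16T09:37:51"
for mapper in (start_of_hour, start_of_day, start_of_week,
               start_of_month, start_of_quarter, start_of_year):
    print(f"{mapper.__name__}: {mapper(key)}")
```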

You can also change the default partition key mapper for all assets in the PartitionedAssetTimetable by providing a default_partition_mapper parameter.

To override the default partition key mapper for a specific asset, you can set the partition_mapper_config parameter of the PartitionedAssetTimetable instance to a dictionary of asset instances and partition key mappers.

You can use different partition key mappers for each asset in the PartitionedAssetTimetable.

When chaining several Dags with a partitioned asset schedule, the partition key mappers need to be identical for all Dags after the first one in the chain. For example, a Dag that uses a StartOfDayMapper will fail the task producing to the next asset in the chain if the next Dag in the chain uses a StartOfWeekMapper.

Composite partition keys

Composite partition keys are partition keys that are composed of multiple segments, separated by | delimiters. For example, the partition key Finance|2026-03-16T09:00:00|Revenue is a composite partition key with three segments: Finance, 2026-03-16T09:00:00, and Revenue.

You can use the ProductMapper partition key mapper to map composite keys segment by segment, applying one mapper per segment and then rejoining the mapped segments. For example, with the key Finance|2026-03-16T09:00:00, ProductMapper(IdentityMapper(), StartOfDayMapper()) produces Finance|2026-03-16.

The given composite partition key needs to match the number of segments in the ProductMapper instance and needs to be valid for all mappers in the ProductMapper instance in order to trigger a Dag run. Invalid composite partition keys cause an error.
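The segment-by-segment behavior, including the two failure cases, can be sketched in plain Python. The functions below are hypothetical stand-ins for the mapper classes and only illustrate the split, map, and rejoin steps. The use of ValueError is an assumption of this sketch, not a statement about which error Airflow raises.

```python
from datetime import datetime


def identity(segment: str) -> str:
    return segment


def start_of_day(segment: str) -> str:
    # Raises ValueError if the segment is not an ISO 8601 timestamp.
    return datetime.fromisoformat(segment).strftime("%Y-%m-%d")


def allowed_keys(allowed):
    """Build a mapper that passes allow-listed segments through unchanged."""
    allowed_set = frozenset(allowed)

    def check(segment: str) -> str:
        if segment not in allowed_set:
            raise ValueError(f"Key {segment!r} is not in the allow-list")
        return segment

    return check


def map_composite_key(key: str, segment_mappers, delimiter: str = "|") -> str:
    """Apply one mapper per segment, then rejoin the mapped segments."""
    segments = key.split(delimiter)
    if len(segments) != len(segment_mappers):
        raise ValueError(
            f"Expected {len(segment_mappers)} segments, got {len(segments)}"
        )
    return delimiter.join(
        mapper(segment) for mapper, segment in zip(segment_mappers, segments)
    )


mapped = map_composite_key("Finance|2026-03-16T09:00:00", [identity, start_of_day])
print(mapped)  # Finance|2026-03-16
```

In this sketch, a key with the wrong number of segments, such as Finance|2026-03-16T09:00:00|Revenue passed to two mappers, raises an error, as does a segment that one of the mappers cannot parse or that is missing from the allow-list.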