ELT with Apache Airflow® and Databricks

This reference architecture shows how to use Apache Airflow® to copy synthetic data about a green energy initiative from an S3 bucket into a Databricks table and run several Databricks notebooks as a Databricks job to analyze the data. A demo of the architecture is shown in the How to Orchestrate Databricks Jobs Using Airflow webinar.

Databricks is a unified data and analytics platform built around fully managed Apache Spark clusters. Using the Airflow Databricks provider package, you can run several Databricks notebooks as a single Databricks job, defined as a task group in your Airflow Dag. This lets you combine Airflow’s orchestration features with Databricks Workflows, which runs the notebooks on jobs compute, Databricks’ most cost-effective compute option. For detailed instructions on using the Airflow Databricks provider, see Orchestrate Databricks jobs with Airflow.
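To make "one Databricks job from several notebooks" concrete, the sketch below builds the kind of multi-task job definition that the Databricks Jobs API 2.1 accepts. Each notebook becomes a task, each task depends on the one before it, and all tasks share one job cluster. This is a plain-Python illustration, not the payload that DatabricksWorkflowTaskGroup generates; the notebook paths, the `shared_job_cluster` key, and the cluster settings are hypothetical placeholders.

```python
def build_notebook_job(job_name: str, notebook_paths: list[str], cluster_spec: dict) -> dict:
    """Chain notebooks into one multi-task job definition (Jobs API 2.1 shape).

    Each notebook becomes a task that depends on the previous task, and
    every task runs on the same shared job cluster.
    """
    cluster_key = "shared_job_cluster"  # hypothetical job cluster key
    tasks = []
    previous_key = None
    for position, path in enumerate(notebook_paths, start=1):
        task_key = f"notebook_{position}"
        task = {
            "task_key": task_key,
            "notebook_task": {"notebook_path": path},
            "job_cluster_key": cluster_key,
        }
        if previous_key is not None:
            # Run this notebook only after the previous task succeeds.
            task["depends_on"] = [{"task_key": previous_key}]
        tasks.append(task)
        previous_key = task_key
    return {
        "name": job_name,
        "job_clusters": [{"job_cluster_key": cluster_key, "new_cluster": cluster_spec}],
        "tasks": tasks,
    }


# Hypothetical notebook paths and cluster settings.
job = build_notebook_job(
    "green_energy_transform",
    [
        "/Shared/green_energy/clean_data",
        "/Shared/green_energy/aggregate_data",
        "/Shared/green_energy/publish_results",
    ],
    {"spark_version": "15.4.x-scala2.12", "node_type_id": "i3.xlarge", "num_workers": 1},
)
print([task["task_key"] for task in job["tasks"]])  # ['notebook_1', 'notebook_2', 'notebook_3']
```

Pointing every task at the same `job_cluster_key` lets the notebooks reuse one job cluster. This avoids a separate cluster startup for each notebook.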

You can adapt this architecture for your use case by changing the data source, adjusting the notebook logic, or adding transformation steps.

This reference architecture consists of three main components:

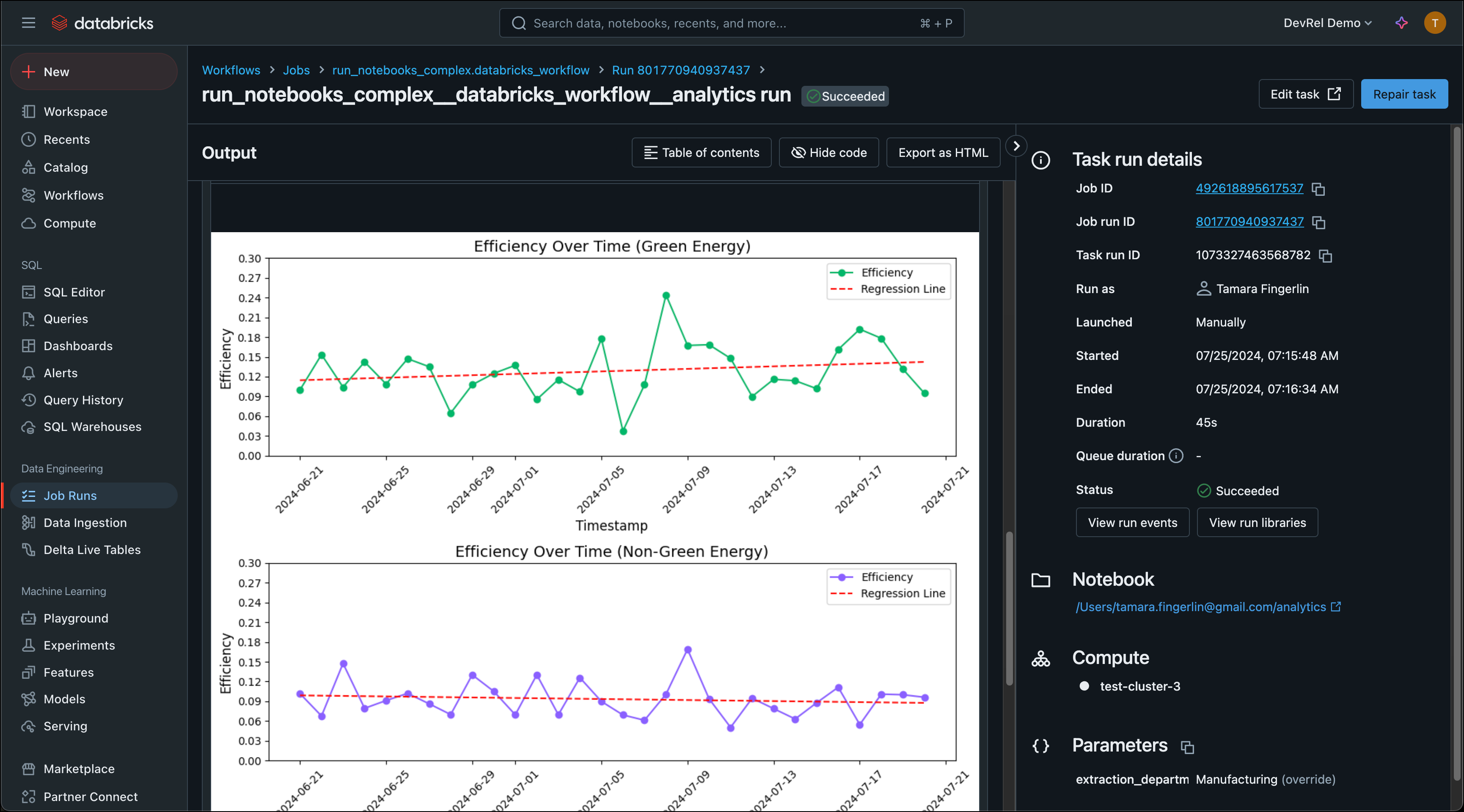

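- Extract: local CSV files are uploaded to an S3 bucket.
- Load: the files are copied from S3 into a raw Databricks table using the DatabricksCopyIntoOperator. Each file is loaded in parallel through dynamic task mapping.
- Transform: several Databricks notebooks run as a single Databricks job using the DatabricksWorkflowTaskGroup and DatabricksNotebookOperator. The notebooks extract data from the table, transform it, and load the results back into Databricks tables.

Data flows in this sequence: local CSV files to S3, S3 to a raw Databricks table, and the raw table to transformed tables through the notebooks. The first Dag handles extraction and loading, then publishes an asset that triggers the second Dag for transformation.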
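The hand-off between the two Dags relies on Airflow's asset-based scheduling. A task in the first Dag lists the asset in its outlets, and the second Dag lists the same asset in its schedule. The toy model below shows the triggering rule: a consumer Dag runs once every asset it is scheduled on has received an event since its last run. It is a simplified illustration, not Airflow's scheduler code, and the Dag IDs and asset URIs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAssetScheduler:
    """Simplified model of asset-triggered scheduling (not Airflow's implementation)."""

    # Consumer Dag ID -> asset URIs it is scheduled on.
    schedules: dict[str, set[str]] = field(default_factory=dict)
    # Consumer Dag ID -> asset URIs updated since that Dag's last run.
    pending: dict[str, set[str]] = field(default_factory=dict)
    # Consumer Dag IDs in the order they were triggered.
    triggered: list[str] = field(default_factory=list)

    def register(self, dag_id: str, assets: list[str]) -> None:
        self.schedules[dag_id] = set(assets)
        self.pending[dag_id] = set()

    def emit(self, asset_uri: str) -> None:
        """Record an asset event, as a successful producer task with an outlet would."""
        for dag_id, required in self.schedules.items():
            if asset_uri not in required:
                continue
            self.pending[dag_id].add(asset_uri)
            # Trigger only when every scheduled asset has been updated.
            if self.pending[dag_id] == required:
                self.triggered.append(dag_id)
                self.pending[dag_id] = set()


# Hypothetical names for the two-Dag hand-off.
scheduler = ToyAssetScheduler()
scheduler.register("transform_green_energy", ["databricks://green_energy/raw_table"])
scheduler.emit("s3://unrelated/asset")  # no consumer is scheduled on this asset
scheduler.emit("databricks://green_energy/raw_table")  # published by the extract-and-load Dag
print(scheduler.triggered)  # ['transform_green_energy']
```

Decoupling the Dags this way means the transformation Dag needs no fixed time schedule. It runs whenever fresh raw data has landed.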

DatabricksWorkflowTaskGroup wraps multiple notebooks into a single Databricks Workflows job, while operators like DatabricksSqlOperator and DatabricksCopyIntoOperator handle SQL execution and data loading, respectively.

To build your own ELT pipeline with Databricks and Apache Airflow, see the Learn guides linked in the Airflow features section for implementation details on each pattern. Astronomer recommends deploying Airflow pipelines using a free trial of Astro.
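The data-loading step handled by DatabricksCopyIntoOperator comes down to a Databricks SQL COPY INTO statement against the target table. The plain-Python helper below is a sketch, not the operator's own code. It builds one statement per CSV file, mirroring how dynamic task mapping creates one loading task per file. The table name, S3 location, file names, and format options are hypothetical.

```python
def build_copy_into(table: str, source_dir: str, file_name: str) -> str:
    """Return a COPY INTO statement that loads one CSV file into `table`.

    Assumes trusted inputs: values are interpolated without escaping.
    """
    return (
        f"COPY INTO {table}\n"
        f"FROM '{source_dir.rstrip('/')}/'\n"
        "FILEFORMAT = CSV\n"
        f"FILES = ('{file_name}')\n"
        "FORMAT_OPTIONS ('header' = 'true', 'inferSchema' = 'true')\n"
        "COPY_OPTIONS ('mergeSchema' = 'true')"
    )


def build_mapped_statements(table: str, source_dir: str, file_names: list[str]) -> list[str]:
    """One statement per file, like one mapped task instance per file."""
    return [build_copy_into(table, source_dir, name) for name in sorted(file_names)]


# Hypothetical table, bucket, and file names.
statements = build_mapped_statements(
    "green_energy.raw_data",
    "s3://my-bucket/green_energy/",
    ["wind.csv", "solar.csv"],
)
print(statements[0])
```

COPY INTO skips files it has already loaded into the target table, so re-running a mapped load task does not duplicate rows unless the `force` copy option is set.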