Airflow in Action: Boosting BI at Visa. 100% Trust in Data With 70% Lower Overhead


Chinni Krishna Abburi, Business Intelligence Engineer at Visa, delivered a practical guide to automating business intelligence (BI) workflows at the Airflow Summit. The session walks through Visa's transformation from manual, error-prone BI processes to a fully automated system that cut data refresh times from 24 hours to under 2 hours, reduced engineer overhead by 70%, and established 100% business trust in data. Teams struggling with legacy BI setups will find actionable strategies for building reliable, scalable data pipelines on Apache Airflow®.

When Manual BI Breaks at Scale

Visa's BI team operated with a traditional setup: a SQL Server transactional system, an on-premises data warehouse, a 20-year-old ETL tool, and manually refreshed dashboards. The architecture worked fine at low data volumes. As transaction volumes exploded, the system collapsed. Dashboard refresh cycles slowed to 6-8 hours regularly, with some stretching to 24 hours. Fragile dependencies between ETL jobs created cascading failures that missed business deadlines. Stakeholders lost trust in the data.

To address these challenges, the team needed three capabilities:

- End-to-end automation with zero human intervention

- Built-in reliability with automatic retries and proactive alerts

- Elastic scalability to add or remove data sources without architectural rewrites

Why Airflow?

Airflow checked every box. The Python-based platform offered visual Dag architecture and native orchestration features, and seamless integration sealed the decision.

Figure 1: Stepping through the reasons why the BI engineering team at Visa selected Airflow. Image source.

Visa had started investing in a modern, cloud-native data stack including Databricks, AWS, Tableau, and Power BI, but struggled to use these tools to their full potential. Airflow connected them all. Its integration capabilities gave the team confidence to migrate from on-premises infrastructure to the cloud, a move they wouldn't have attempted without Airflow's orchestration layer.

Building the New Architecture

Visa redesigned their entire BI stack around Airflow. Data flows from transactional sources into AWS S3 for validation, then moves to Databricks where PySpark in notebooks transforms raw data into processed Delta tables. Airflow orchestrates every step, ensuring smooth data flow without delays or broken dependencies.
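To make the idea of dependency-ordered steps concrete, here is a minimal sketch in plain Python. It is not Visa's code and does not use the Airflow API; the step names are hypothetical labels for the stages described above, and the standard library's `graphlib` stands in for the scheduling an orchestrator performs.

```python
from graphlib import TopologicalSorter

# Hypothetical step names. Each step maps to the set of steps it depends on.
PIPELINE = {
    "land_in_s3": set(),
    "validate_in_s3": {"land_in_s3"},
    "transform_in_databricks": {"validate_in_s3"},
    "write_delta_tables": {"transform_in_databricks"},
}


def execution_order(graph):
    """Return the steps in an order that respects every dependency.

    Raises graphlib.CycleError if the dependencies form a loop.
    """
    return list(TopologicalSorter(graph).static_order())
```

Calling `execution_order(PIPELINE)` yields the steps with every upstream step ahead of its dependents, which is the guarantee that keeps a downstream job from starting on incomplete data.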

The integration with BI tools delivers the real business value. Once Databricks processes the data, Airflow triggers automated refreshes in Power BI and Tableau dashboards using their APIs. The system validates data quality before updating dashboards, ensuring accuracy. After refresh completes, Airflow sends notifications through Slack and email so stakeholders know exactly when fresh data arrives. Previous tools couldn't accomplish this workflow. Airflow made it possible.
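The validate-then-refresh gate described here could look roughly like the sketch below. It is plain Python under stated assumptions: the checks (a minimum row count and non-null required fields) are illustrative, and `refresh` and `notify` are hypothetical callables standing in for a BI tool's refresh API and a Slack or email notifier.

```python
from dataclasses import dataclass, field


@dataclass
class QualityReport:
    passed: bool
    failures: list = field(default_factory=list)


def check_quality(rows, required_fields, min_rows=1):
    """Run simple, illustrative checks: row count and non-null required fields."""
    failures = []
    if len(rows) < min_rows:
        failures.append(f"expected at least {min_rows} rows, got {len(rows)}")
    for index, row in enumerate(rows):
        for name in required_fields:
            if row.get(name) is None:
                failures.append(f"row {index}: missing {name}")
    return QualityReport(passed=not failures, failures=failures)


def refresh_if_valid(rows, required_fields, refresh, notify):
    """Refresh dashboards only when the checks pass; notify either way."""
    report = check_quality(rows, required_fields)
    if report.passed:
        refresh()
        notify("Dashboards refreshed with validated data")
    else:
        notify("Refresh skipped: " + "; ".join(report.failures))
    return report
```

The design choice worth noting is that a failed check blocks the refresh entirely, so stakeholders keep seeing the last known-good data rather than a partially correct update.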

Smart Pipeline Design

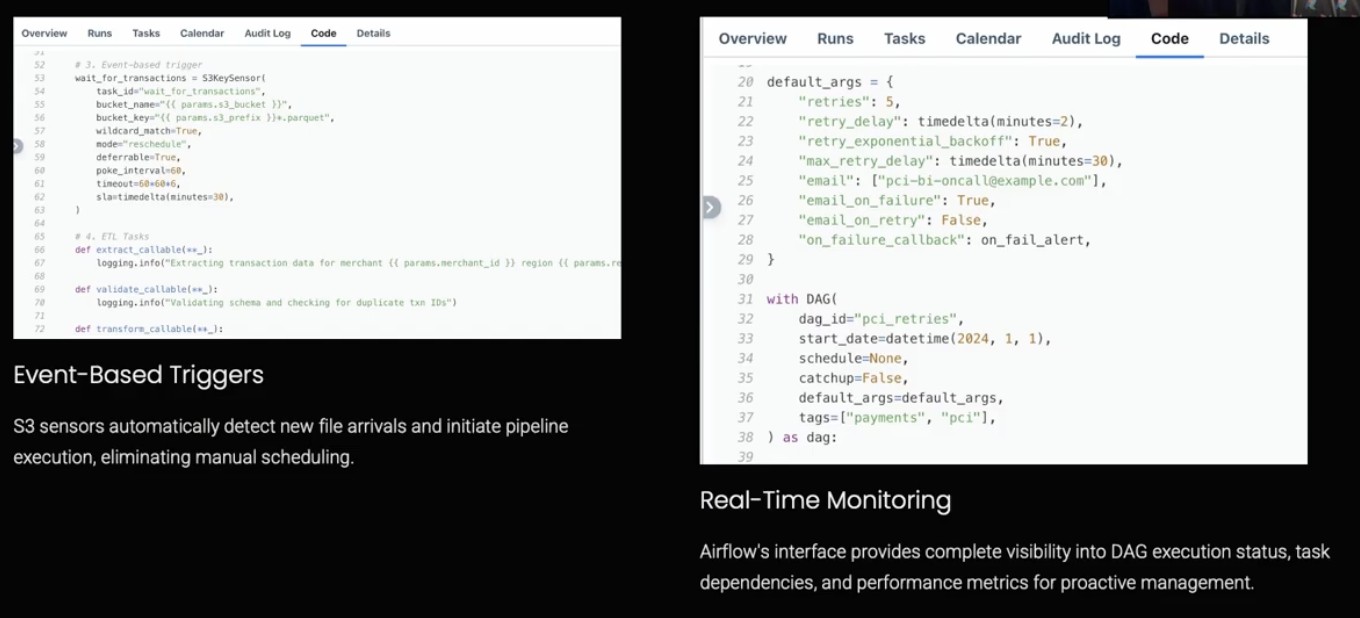

Visa embeds four critical patterns into their Dags. Parameterized templates with configuration-driven approaches reduced development time by 80%. Event-based data processing runs as soon as new data arrives in S3; an S3 sensor in deferrable mode detects the arrival, which maximizes worker efficiency. SLA monitoring alerts the team when jobs exceed expected runtimes, enabling early intervention before business timelines suffer.
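As a rough illustration of the configuration-driven template pattern, the sketch below expands a list of source definitions into identical task chains. It is plain Python rather than Airflow code, and every name in it (the sources, bucket, prefixes, dashboards, and step names) is a hypothetical placeholder, not something taken from Visa's Dags.

```python
# Hypothetical configuration: one entry per data source.
SOURCES = [
    {"name": "payments", "bucket": "example-bucket", "prefix": "payments/",
     "dashboard": "payments_overview"},
    {"name": "disputes", "bucket": "example-bucket", "prefix": "disputes/",
     "dashboard": "disputes_overview"},
]

# The shared template: every source gets the same ordered steps.
TEMPLATE = ("wait_for_file", "validate", "transform", "refresh_dashboard")


def build_pipeline(source):
    """Expand one config entry into an ordered chain of task definitions."""
    tasks = []
    upstream = None
    for step in TEMPLATE:
        task_id = f"{source['name']}__{step}"
        tasks.append({"task_id": task_id, "upstream": upstream, "params": dict(source)})
        upstream = task_id
    return tasks


def build_all(sources):
    """Build one pipeline per configured source, keyed by source name."""
    return {source["name"]: build_pipeline(source) for source in sources}
```

With this shape, onboarding a new data source means adding one entry to `SOURCES` instead of writing a new pipeline by hand, which is the kind of saving the development-time figure points to.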

Editor's note: Astro customers can benefit from a set of advanced alerting features built in to Astro Observe, including timeliness and freshness SLAs spanning multiple Dags and assets.

Automatic retries handle transient errors, while persistent errors are escalated via email and Slack alerts for immediate engineer attention.
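In Airflow, this behavior is typically configured through task arguments such as `retries` and `retry_delay` together with failure callbacks. The plain-Python sketch below only illustrates the underlying logic and is not Visa's implementation; `TransientError`, `run_with_retries`, and the `escalate` callable (a stand-in for an email or Slack alert) are hypothetical names, and the exponential backoff is an assumption.

```python
import time


class TransientError(Exception):
    """An error worth retrying, such as a brief network timeout."""


def run_with_retries(task, retries=3, base_delay=1.0, escalate=print, sleep=time.sleep):
    """Run task, retrying transient errors with exponential backoff.

    Escalates and re-raises when retries are exhausted or when the
    error is not marked as transient.
    """
    attempts = retries + 1
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except TransientError as exc:
            if attempt == attempts:
                escalate(f"Task failed after {attempts} attempts: {exc}")
                raise
            # Wait 1x, 2x, 4x ... the base delay before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))
        except Exception as exc:
            escalate(f"Task failed with a non-retryable error: {exc}")
            raise
```

Passing `sleep` and `escalate` as parameters keeps the function easy to test: a test can record the delays and alerts instead of actually waiting or sending messages.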

Figure 2: Code samples from Visa's Dags showing how the team uses event-based triggers and real-time monitoring. Image source.

The Results Speak

The metrics tell the transformation story:

- Manual intervention dropped 70%, freeing BI engineers to focus on advanced analytics instead of firefighting.

- Data refresh times fell from 24 hours to under 2 hours for critical business processes.

- The team achieved zero missed SLAs over six months.

- Most importantly, they reached 100% data trust. Business users demand perfection, and Airflow delivered it.

Lessons from the Trenches

Visa's implementation revealed five key lessons:

- Start small and scale smart: Begin with a minimal set of data sources to build team confidence before tackling larger architectures

- Prioritize Dag readability: Use clean naming conventions, modular code, and comprehensive documentation

- Implement monitoring early: The team learned this the hard way. Early monitoring enables faster problem resolution

- Establish clear ownership: Tag Dags with owners and create runbooks for troubleshooting

- Treat Dags like code: Apply proper code review, version control, and software engineering practices for long-term sustainability

The Backbone of BI

Three years ago, Chinni would have said no single tool could solve all BI automation problems. After two and a half years with Airflow, his answer changed. Airflow became the backbone of Visa's BI operations, delivering operational confidence, speed to insights, and scalable infrastructure. The platform accomplished every goal the team set at the outset. Watch the full session to learn more.

ETL pipelines are at the core of Visa's BI architecture. Download our ETL/ELT patterns with Apache Airflow® 3 ebook for nine practical Dag code examples plus access to the companion GitHub repository so you can put it all to the test straightaway.
