Airflow in Action: Migrating 200,000 Pipelines to Airflow 3 at Uber


At the Airflow Summit, Sumit Maheshwari, Tech Lead in Uber's data platform group and Apache Airflow® PMC member, walked through Operation Airlift: one of the most complex orchestration migrations ever undertaken.

This session covers why Uber is leaving its home-grown system behind, why Airflow 3 is the destination, and the architecture, tooling, and phased strategy they are using to move 200,000 pipelines to a single Airflow instance, without breaking the world's largest ride-sharing platform. A recap of the talk follows along with the link to the session replay.

Piper: Airflow's DNA with Uber's Engineering

Uber's internal orchestration system, Piper, started life as a fork of Airflow 1.10 in 2017. The team rewrote the scheduler in Java, split it into four discrete services, and added ZooKeeper-based high availability, drag-and-drop pipeline authoring, and a full workflow governance model that automatically tiers pipelines by business criticality.

The result was a system capable of running 200,000 pipelines and processing nearly 800,000 task instances per day across a fleet of 1,000-plus machines serving over 1,000 internal teams. You can learn more from our recap of the company's Airflow Summit 2024 session: Data Engineering Insights from Uber and Its 200,000 Data Pipelines.

When the Fork Lags the Mainline

The same depth of customisation that made Piper powerful presented its biggest challenges. Adding deferrable operators took almost a year to develop and roll out. Users had to create duplicate pipelines to handle both scheduled and manually triggered execution. There was no support for dynamic pipelines, no event-driven scheduling, and no Dag versioning. The Celery-Redis combination introduced reliability issues at scale, including tasks running twice or not at all. Local development UX was poor, and the Python SDK still looked like Airflow 1.x. The cost of maintaining Piper was growing, while its distance from the open-source ecosystem was getting harder to justify.

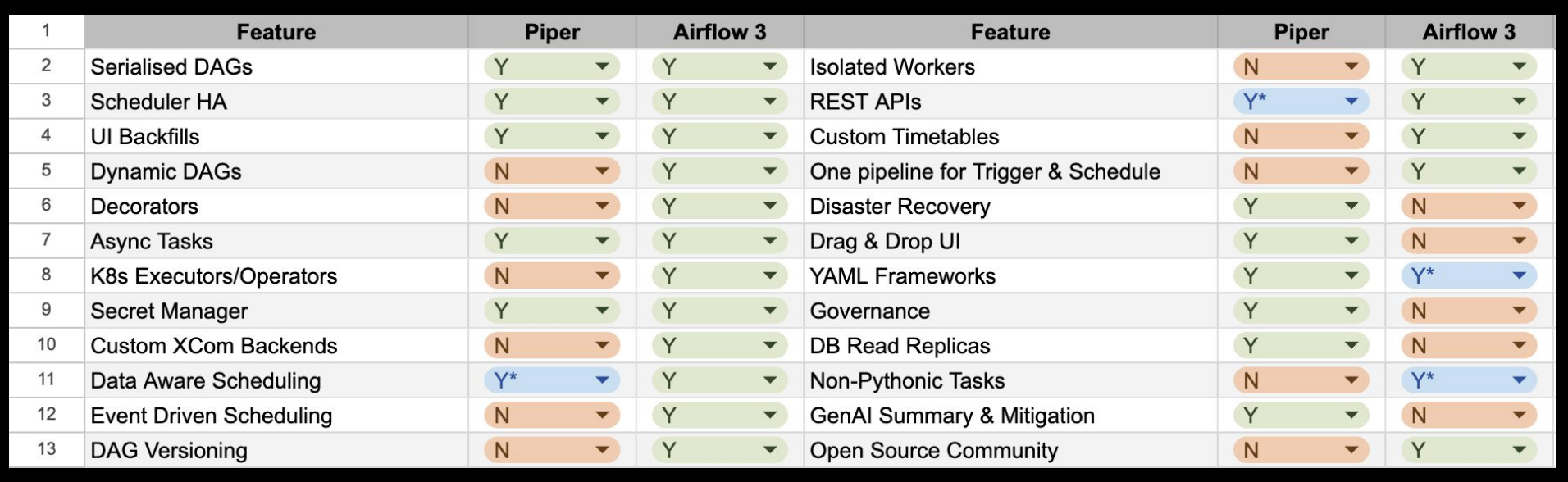

Figure 1: Comparing Piper with Apache Airflow 3. Supporting capabilities such as cross-region DR, drag and drop UI, Dag-level RBAC, and more, Astro closes the few feature gaps highlighted in the presentation. Image source.

Why Airflow 3 Changes the Calculation

The question of why Airflow and not something else came up repeatedly inside Uber. They chose to focus on Airflow, and specifically Airflow 3, for several concrete reasons:

- Familiar DSL. Because Piper was born from Airflow, the syntax is close enough that users would not need to invest cycles learning a new tool from scratch.

- Battle tested at scale. Airflow is proven at large organisations, and Uber already has first-hand knowledge of the optimizations and best practices needed to get the most out of Airflow environments.

- Active community and ecosystem. Extensive provider support, cloud vendor backing, and a large open-source community reduce the long-term maintenance burden that comes with maintaining a fork.

- Airflow 3 delivers the missing features. Isolated workers, Dag versioning, a modern REST API, event-driven scheduling, and support for non-Python tasks were not nice-to-haves. Maheshwari was direct: without those features, the case for migrating back to mainline Airflow would have been tougher.

There are areas where Piper capabilities have no direct Airflow equivalent today, with Uber raising AIPs (Airflow Improvement Proposals) to address some of those gaps. You can get the details by watching the session replay.

The Architecture and Migration Process

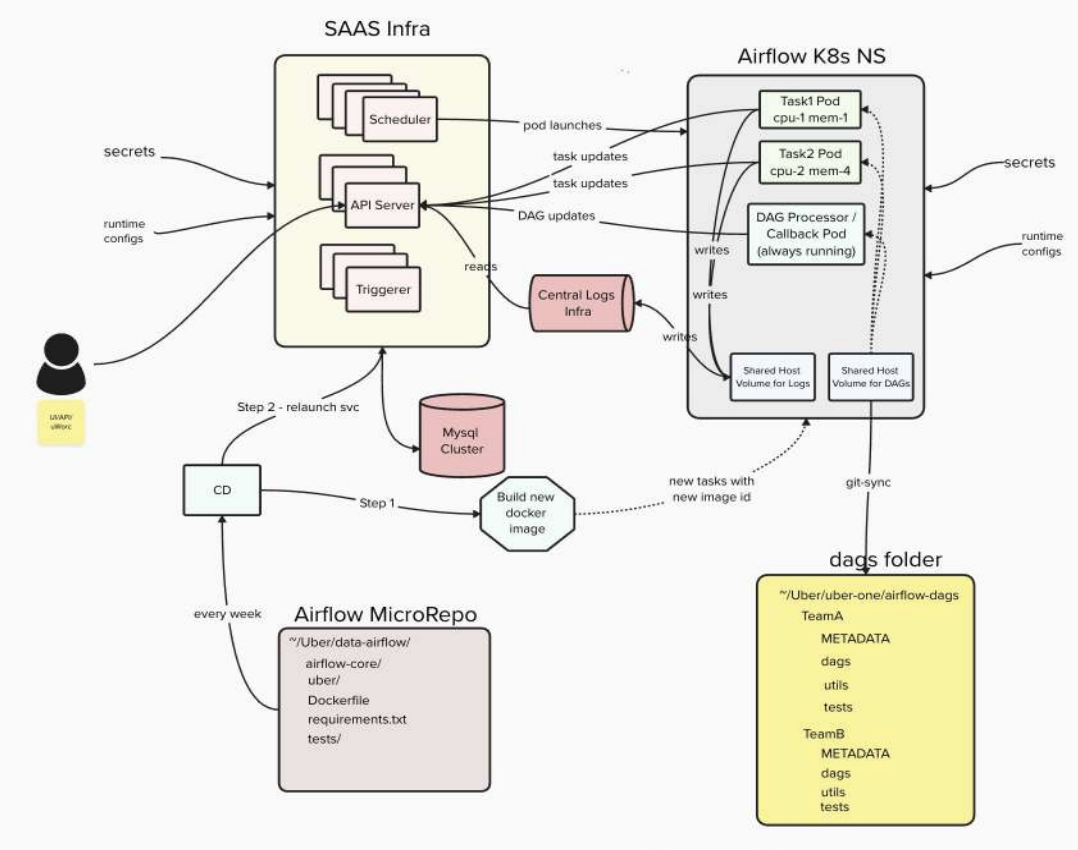

Uber's Airflow 3 implementation stays close to upstream, with all customization deliberately done via plugins and providers.

A GitSync-based delivery mechanism pushes Dag changes to Kubernetes, making updates available within minutes across a 100 to 200 commit-per-day monorepo. They have built a custom API wrapper around the Airflow API server to integrate with Uber's own centralised identity and authorisation service.

Four pipeline types need to migrate: Python SDK Dags, UI or API-managed pipelines, backfill pipelines, and YAML framework pipelines. For the SDK Dags, Uber built an automated conversion tool that parses Piper pipeline code, applies a mapping ruleset, and emits valid Airflow Dags. A centralised tool called Sheppard runs the conversion, raises diffs, and routes them to the right pipeline owners. The other pipeline types are handled by an execution engine flag that switches the runtime from Piper to Airflow without requiring users to change how they work.
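To make the parse, map, and emit flow concrete, here is a minimal sketch using Python's standard `ast` and `difflib` modules. It is not Uber's tool: the Piper-side names (`piper_sdk`, `Pipeline`, `pipeline_id`) and the three-entry ruleset are hypothetical, invented purely for illustration, and a real ruleset would need far more care (scoping, operators, arguments with no one-to-one equivalent).

```python
import ast
import difflib

# Hypothetical mapping ruleset. None of the Piper-side names come from the
# talk; they are placeholders that show the shape of the technique.
MODULE_MAP = {"piper_sdk": "airflow.sdk"}
NAME_MAP = {"Pipeline": "DAG"}
KEYWORD_MAP = {"pipeline_id": "dag_id"}

# Hypothetical Piper-style pipeline definition used as input.
PIPER_SOURCE = '''
from piper_sdk import Pipeline

with Pipeline(pipeline_id="daily_trips", schedule="@daily") as pipeline:
    pass
'''


class RulesetTransformer(ast.NodeTransformer):
    """Rewrite imports, names, and keyword arguments per the ruleset."""

    def visit_ImportFrom(self, node):
        # Only touch imports that come from a mapped module.
        if node.module in MODULE_MAP:
            node.module = MODULE_MAP[node.module]
            for alias in node.names:
                alias.name = NAME_MAP.get(alias.name, alias.name)
        return node

    def visit_Name(self, node):
        # Naive rename: ignores scoping, which a production tool could not.
        node.id = NAME_MAP.get(node.id, node.id)
        return node

    def visit_keyword(self, node):
        self.generic_visit(node)  # also transform the argument's value
        if node.arg in KEYWORD_MAP:
            node.arg = KEYWORD_MAP[node.arg]
        return node


def convert(source: str) -> str:
    """Parse source, apply the ruleset, and emit the converted code."""
    tree = RulesetTransformer().visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    converted = ast.unparse(tree)  # requires Python 3.9+
    ast.parse(converted)  # sanity check: the output must still be valid Python
    return converted


def render_diff(original: str, converted: str) -> str:
    """Produce a unified diff that could be routed to a pipeline owner."""
    return "\n".join(
        difflib.unified_diff(
            original.strip().splitlines(),
            converted.splitlines(),
            fromfile="piper",
            tofile="airflow",
            lineterm="",
        )
    )


if __name__ == "__main__":
    print(render_diff(PIPER_SOURCE, convert(PIPER_SOURCE)))
```

Working on the syntax tree rather than with text substitution means the rewrite cannot accidentally change string literals or comments-in-strings that happen to contain a mapped name, and the final `ast.parse` call catches any output that is no longer valid Python. Note that `ast.unparse` normalizes formatting and drops comments, so a tool meant to produce reviewable diffs would likely need a format-preserving parser instead.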

Figure 2: How Uber's Airflow 3 environment is designed, from Dag authoring to task execution. Image source.

Uber’s End Goal

The target end state combines AI-assisted authoring, interactive development environments, dry-run staging, AI-assisted code review, safe deployments with automated rollback powered by Dag versioning, and a full observability and governance layer. At the time of the talk, Uber was planning to onboard internal teams to Airflow and begin the Piper end-of-life process. Full migration of all pipeline types is targeted for the second half of 2026.

Watch the full replay of Operation Airlift: Uber's ongoing journey of migrating 200K pipelines to a single Airflow 3 instance to understand the architectural decisions, the challenges, and what Uber is feeding back into the Airflow community along the way.

Astro: The Engineering Work You Don't Have to Do

Disaster recovery and observability are two areas where building on Airflow directly, as Uber is doing, requires significant custom engineering. Astro addresses both as managed Airflow platform capabilities.

Astro is the only managed Airflow platform to offer built-in, one-click cross-region failover and failback. It is designed to meet an RTO under 1 hour and RPO under 15 minutes with no custom architecture to build and no replication logic to write. It continuously replicates your metadata database and task logs to a secondary cluster. Deployment configurations, environment variables, connections, and run state are all mirrored in real time. Learn more in the blog post Cross-Region Disaster Recovery on Astro Is Now Generally Available: Here's How We Built It.

On observability, Astro Observe provides real-time lineage, SLA monitoring, data quality, and automated RCA all built directly into the orchestration layer, not bolted on as a separate tool.

DR is now generally available on AWS, with GCP and Azure support on the roadmap, along with observability. For teams running Airflow at enterprise scale, that is 3-6 months of engineering time back in the hands of the people who should be building data products, not infrastructure. You can try Astro at no cost to see what it can do for your workflows and data pipelines.

If you’re planning your own move to the latest Airflow 3 generation, take a look at our eBook Practical guide: Upgrade from Apache Airflow 2 to Airflow 3. Here you’ll get upgrade checklists along with extensive explanations of breaking changes you need to be aware of, supporting a smooth transition.
