Airflow in Action: How SAP Delivers Trusted AI for Enterprise Clients


At the Airflow Summit, Sagar Sharma, Senior Software Engineer at SAP, detailed how SAP's Generative AI Foundations Team built a production-grade RAG (Retrieval Augmented Generation) pipeline using Apache Airflow®. The pipeline powers Joule for Consultants, SAP's AI copilot, and now processes over 5 million documents across more than 15 data sources, keeping AI context up to date. This post covers the architectural decisions, Dag evolution, and custom operators behind that system.

An AI Copilot Is Only as Good as Its Data

Joule for Consultants is a generative AI copilot built specifically for SAP consultants. What sets it apart from general-purpose tools like ChatGPT or Microsoft Copilot is the depth of SAP-specific knowledge it draws on: certifications, learning journeys, community posts, developer tutorials, and expert-reviewed content.

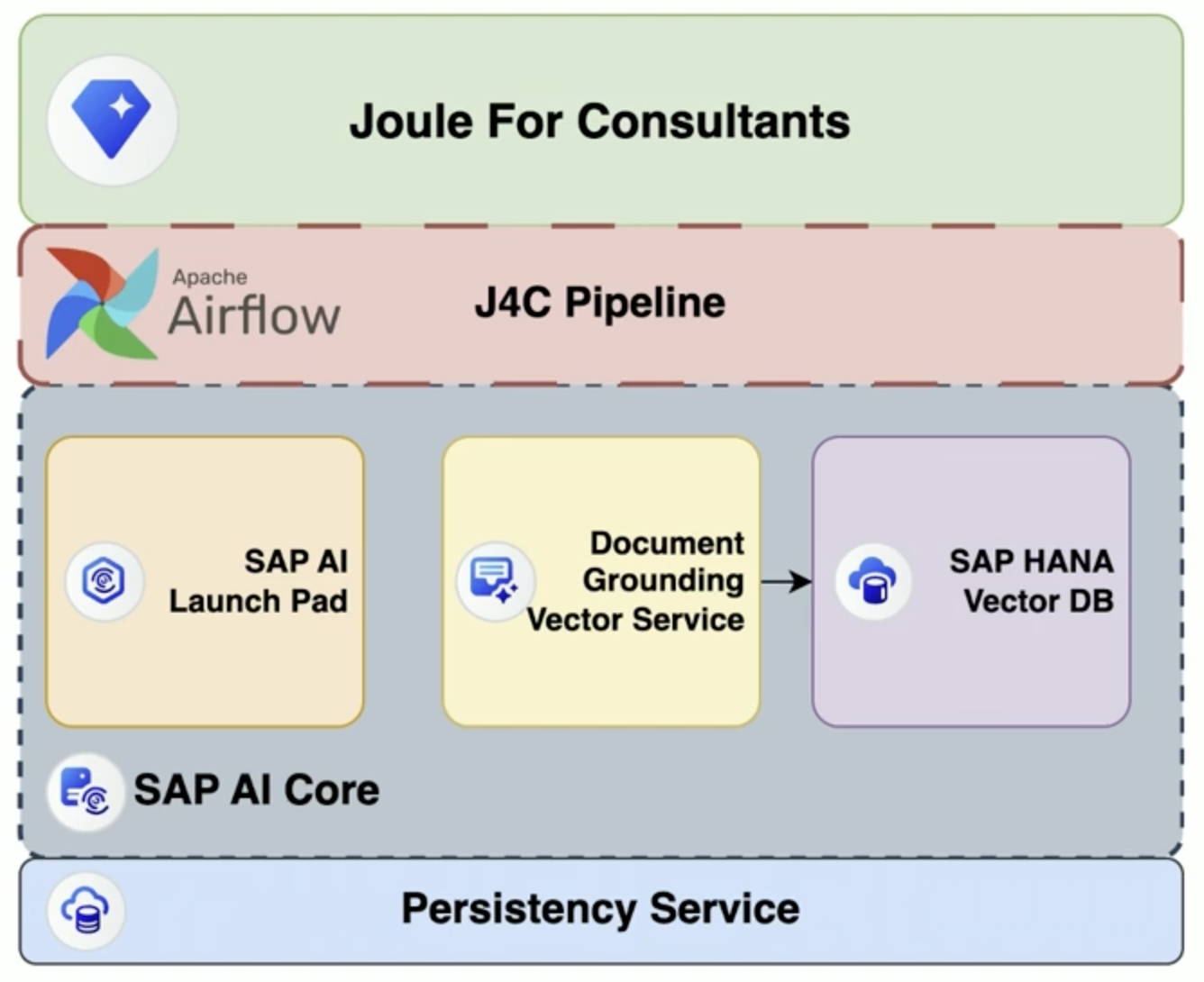

Figure 1: Behind the scenes with SAP’s Joule for Consultants architecture. Image source.

That grounding delivers a measurable 30% productivity improvement for consultants using the tool, along with 40% faster ABAP (Advanced Business Application Programming) code interpretation. But it also creates a serious data engineering challenge. Onboarding 15-plus data sources, handling terabytes of content across PDFs, Word docs, Excel files, PowerPoint slides, and multimodal formats, while maintaining GDPR compliance and keeping content continuously fresh, is not a trivial workflow problem.

Evaluating Orchestration Solutions and Landing on Airflow

SAP evaluated Prefect, Dagster, and Flyte before selecting Airflow. They also explored building in-house tooling using Kubernetes events. Airflow won on several fronts:

- Fast implementation: The team needed a solution they could move quickly with, and Airflow's Python-native design plugged directly into a codebase and team that were already Python-native.

- DevOps compatibility: Airflow integrated cleanly with their existing infrastructure built around Helm charts, Argo CD, and GitOps, removing the need to retool their deployment pipelines.

- Managed service optionality: SAP self-hosts today, but the availability of managed Airflow services such as Astro from Astronomer gave the team confidence they were not building on a dead end. Optionality matters at enterprise scale.

- Community momentum: The team valued the pace of active development and the fact that their future requirements were already being addressed in the project's roadmap and community discussions. For a team building something production-critical, betting on a platform and ecosystem with that level of momentum reduces long-term risk.

SAP runs Airflow on their Business Technology Platform Kubernetes runtime, with high-availability schedulers, Redis for queuing, and SAP Object Storage as an XCom backend. Tagged Git releases and Argo CD give them consistent deployments across dev, staging, and three production availability zones, with clean rollback if something breaks.

The Evolution of Airflow Pipelines

The Dag architecture matured through three natural phases as the pipeline grew from proof of concept to production scale:

- The team started with a single hard-coded Dag, which worked well initially but became harder to extend cleanly as the number of data sources grew.

- They then introduced Airflow Variables containing configuration, based on which tasks were mapped dynamically, greatly reducing code repetition.

- As the pipeline took on more data and more users, they refined the design further, splitting ETL and injection into separate pipelines that could be configured with Dag params, triggered independently, and run in parallel.

The result is a flexible, scalable architecture that supports both production workloads and AI/ML experimentation without either getting in the way of the other.
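The second phase, mapping tasks dynamically from configuration, can be sketched roughly as follows. This is not SAP's code: the Variable name `rag_sources`, the source entries, and the task names are hypothetical, and the import paths assume Airflow 2.x (Airflow 3 exposes `dag`, `task`, and `Variable` under `airflow.sdk`). The helper is plain Python so it can be checked without Airflow installed; `define_dag()` imports Airflow only when called.

```python
import json
from datetime import datetime

# Hypothetical JSON as it might be stored in an Airflow Variable.
SOURCES_JSON = """
[
  {"name": "community_posts", "format": "html", "chunker": "by_heading"},
  {"name": "learning_journeys", "format": "pdf", "chunker": "fixed_window"}
]
"""


def build_mapped_kwargs(raw_json: str) -> list[dict]:
    """Turn the stored configuration into one kwargs dict per mapped task."""
    sources = json.loads(raw_json)
    required = {"name", "format", "chunker"}
    for src in sources:
        missing = required - src.keys()
        if missing:
            raise ValueError(f"source {src.get('name', '?')} is missing {sorted(missing)}")
    return [
        {"source": s["name"], "fmt": s["format"], "chunker": s["chunker"]}
        for s in sources
    ]


def define_dag():
    """Wire the configuration into mapped tasks (requires Apache Airflow 2.4+)."""
    from airflow.decorators import dag, task
    from airflow.models import Variable

    @dag(schedule=None, start_date=datetime(2024, 1, 1), catchup=False)
    def rag_etl():
        @task
        def load_config() -> list[dict]:
            # Read the Variable at run time rather than at parse time.
            return build_mapped_kwargs(Variable.get("rag_sources"))

        @task
        def extract(source: str, fmt: str, chunker: str) -> str:
            # Placeholder body; a real task would pull and store raw documents.
            return f"extracted {source} ({fmt}) for {chunker}"

        # One mapped task instance per configured data source.
        extract.expand_kwargs(load_config())

    # In a real Dag file, the returned Dag object must be assigned to a
    # module-level name so the Dag processor can discover it.
    return rag_etl()
```

With this shape, onboarding another data source means adding one entry to the stored configuration rather than editing the Dag code.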

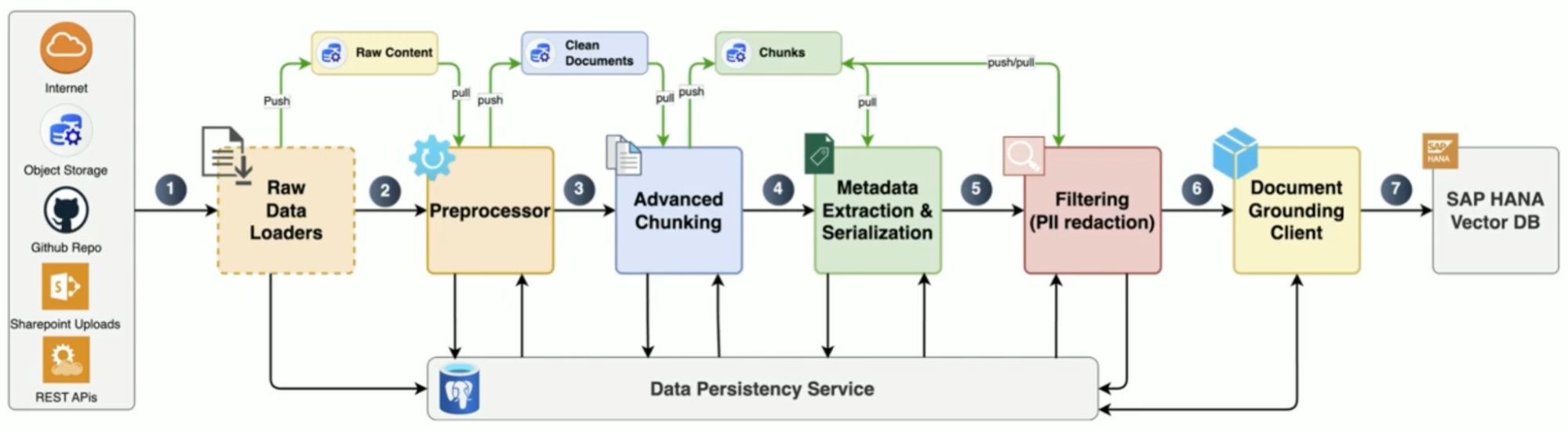

Figure 2: SAP’s Joule for Consultants pipeline architecture, orchestrated by Airflow. Image source.

The pipeline runs six modular, restartable stages: raw data ingestion with persistent storage for reprocessing, preprocessing with layout-preserving document cleaning, advanced chunking tuned per data source type, metadata extraction and serialization, PII redaction for GDPR compliance, and vector DB injection into SAP HANA.
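One way to picture "modular, restartable" stages is a runner that persists each stage's output and skips stages that already completed on a rerun. The sketch below is an illustration under assumptions, not SAP's implementation: the `store` dict stands in for persistent storage, and the three stage functions are toy placeholders for the first stages named above.

```python
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[str], str]]


def run_stages(
    doc_id: str,
    raw: str,
    stages: List[Stage],
    store: Dict[Tuple[str, str], str],
) -> List[str]:
    """Run stages in order, reusing any output already persisted in `store`.

    Returns the names of the stages that actually executed in this call.
    """
    executed = []
    data = raw
    for name, fn in stages:
        key = (doc_id, name)
        if key in store:
            # A previous run finished this stage; pick up from its output.
            data = store[key]
            continue
        data = fn(data)
        store[key] = data  # persist before moving on, so a later failure can resume here
        executed.append(name)
    return executed


# Toy stand-ins for ingestion, preprocessing, and chunking.
stages: List[Stage] = [
    ("ingest", lambda d: d),
    ("preprocess", lambda d: " ".join(d.split())),
    ("chunk", lambda d: "|".join(d.split(". "))),
]

store: Dict[Tuple[str, str], str] = {}
first_run = run_stages("doc-1", "Hello   world. Second sentence.", stages, store)
second_run = run_stages("doc-1", "Hello   world. Second sentence.", stages, store)
```

In Airflow, each stage would typically be its own task, with the persisted outputs living in object storage rather than in memory.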

The custom operators are where the AI-specific engineering comes through. SAP built:

- Chunking service operator supporting multiple configurable algorithms

- Evaluation operators using Ragas and LLM-as-a-judge for quality scoring

- Vector DB injection operator that handles embedding model rate limits, connection pooling, and state preservation on failure
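The injection operator's handling of rate limits and failure state can be illustrated with a small, framework-free sketch. Everything here is an assumption made for illustration: `RateLimitError`, the `embed` and `write` callables, and the `checkpoint` dict are hypothetical stand-ins for an embedding client, a vector store writer, and durable state. Connection pooling is left out.

```python
import time
from typing import Callable, Dict, List, Sequence


class RateLimitError(Exception):
    """Hypothetical error raised by an embedding client when it is throttled."""


def inject_batches(
    chunks: Sequence[str],
    embed: Callable[[Sequence[str]], List[List[float]]],
    write: Callable[[Sequence[str], List[List[float]]], None],
    checkpoint: Dict[str, int],
    batch_size: int = 2,
    max_retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Embed and write chunks in batches, resuming from `checkpoint`.

    Retries throttled embedding calls with exponential backoff and records
    progress after every successful batch, so a rerun skips finished work.
    """
    start = checkpoint.get("next_index", 0)
    for i in range(start, len(chunks), batch_size):
        batch = chunks[i : i + batch_size]
        for attempt in range(max_retries + 1):
            try:
                vectors = embed(batch)
                break
            except RateLimitError:
                if attempt == max_retries:
                    raise  # checkpoint still points at this unfinished batch
                sleep(base_delay * 2**attempt)
        write(batch, vectors)
        checkpoint["next_index"] = i + len(batch)
    return checkpoint.get("next_index", start)
```

Passing the same `checkpoint` into a retried run is what lets the work continue from the first unfinished batch instead of re-embedding everything.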

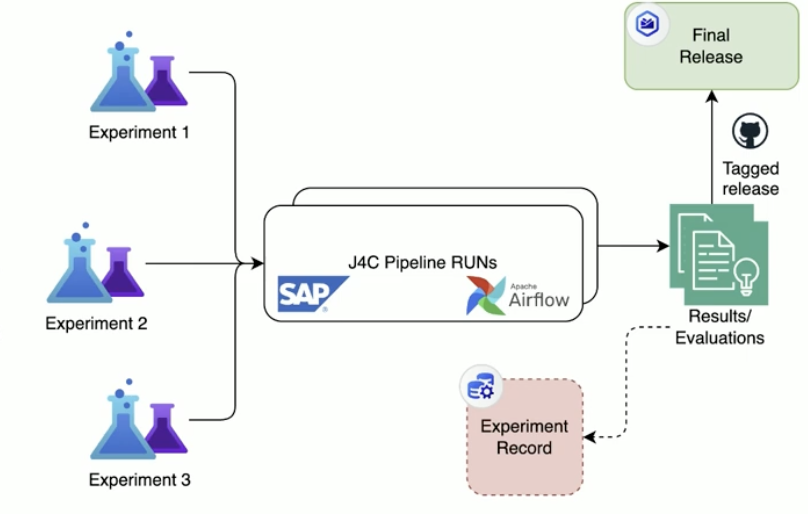

Figure 3: SAP’s ML/AI experiment workflows powered by Airflow. Image source.

Content freshness runs on monthly schedules, hashing raw data to detect changes and triggering targeted deletes and re-ingestion for stale content.
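Hash-based change detection of this kind can be sketched in a few lines. The talk summary does not say which hash function or storage SAP uses, so SHA-256 and the in-memory dictionaries below are assumptions, and `plan_refresh` is a hypothetical helper name.

```python
import hashlib
from typing import Dict, List, Tuple


def content_hash(raw: bytes) -> str:
    """Fingerprint raw document bytes (SHA-256 is an assumed choice)."""
    return hashlib.sha256(raw).hexdigest()


def plan_refresh(
    previous: Dict[str, str],
    current_docs: Dict[str, bytes],
) -> Tuple[List[str], List[str], Dict[str, str]]:
    """Compare stored hashes with freshly fetched documents.

    Returns (ids whose stale vectors should be deleted,
             ids to ingest or re-ingest,
             the new hash map to store for the next run).
    """
    current = {doc_id: content_hash(raw) for doc_id, raw in current_docs.items()}
    # Delete vectors for documents that disappeared or whose content changed.
    to_delete = sorted(d for d in previous if current.get(d) != previous[d])
    # Ingest documents that are new or whose content changed.
    to_ingest = sorted(d for d in current if previous.get(d) != current[d])
    return to_delete, to_ingest, current


previous = {
    "a": content_hash(b"one"),
    "b": content_hash(b"two"),
    "c": content_hash(b"three"),
}
fetched = {"a": b"one", "b": b"two v2", "d": b"four"}
to_delete, to_ingest, new_hashes = plan_refresh(previous, fetched)
# "a" is unchanged, "b" changed, "c" was removed, "d" is new.
```

Unchanged documents never reach the embedding step, which keeps a scheduled refresh proportional to what changed rather than to the full corpus.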

Putting AI to Work with Airflow

The full session goes deeper on scaling challenges, including CI/CD setup for Airflow code, data refresh strategies, and the path from ML experiment to production deployment. Watch the replay to get the complete picture.

If you’re building AI-driven applications, download our eBook “Orchestrate LLMs and Agents with Apache Airflow®” for actionable patterns and code examples on how to scale AI pipelines, event-driven inference, multi-agent workflows, and more.
