ORCHESTRATING THE FUTURE OF MANUFACTURING

Board-level bets need production-grade data

From industrial manufacturing to CPG, food, transport equipment, and life sciences, manufacturers are entering a three-year window where competitiveness depends less on choosing new technology and more on operationalizing it across plants, suppliers, and products.

Manufacturing IT investment is concentrating around five bet-the-company initiatives:

- Scaling AI from pilots to plant-wide and network-wide execution

- Building resilient, adaptive supply chains with end-to-end visibility

- Securing OT/IT and proving digital trust in regulated environments

- Standardizing the industrial data foundation (IT/OT convergence and real-time operations)

- Decarbonizing operations and industrializing sustainability reporting

All five share the same constraint: reliable, secure, observable orchestration of data and workflows. Manufacturers cannot execute these initiatives on brittle handoffs, point schedulers, or fragmented data tooling. They need a unified orchestration control plane that spans clouds, plants, warehouses, and modern data stacks.

Apache Airflow® is the industry standard for data orchestration. Astro, Astronomer's unified orchestration platform, elevates Airflow into a managed, enterprise-grade control plane for engineering teams to build, run, and observe workflows across the manufacturing enterprise.

INITIATIVE ONE Scaling AI from pilots to plant-wide execution

Manufacturers want AI to move from isolated experiments to repeatable operational leverage: higher yield, fewer defects, less downtime, faster changeovers, and higher throughput. Deloitte reports that most manufacturing executives plan heavy investment in smart manufacturing, with 80% allocating 20% or more of improvement budgets to these efforts.

Yet most organizations remain stuck at pilot scale. Execution gaps widen as manufacturers embed AI directly into products, where failures affect safety, compliance, and brand trust.

Top AI use cases manufacturers are targeting

- Predictive maintenance for failure prediction and maintenance scheduling

- Computer vision for quality inspection and defect detection

- Production optimization across throughput, sequencing, and constraints

- AI embedded into manufactured products, such as driver assistance systems, smart appliances, and intelligent medical devices

- Frontline copilots for troubleshooting, digital work instructions, and faster onboarding

The AI ambition vs. operations reality

AI breaks in production when it is treated as a model problem instead of a workflow problem. Data arrives late or inconsistently, lifecycle steps are stitched together with fragile scripts, and teams cannot trace outcomes back to upstream data and pipeline decisions.

AI becomes durable when it is run like any other critical system: orchestrated, observable, and secured at execution boundaries.

| What you need | How Astro helps |
| --- | --- |
| End-to-end orchestration for the ML lifecycle | Airflow orchestrates the MLOps lifecycle (feature generation, training, evaluation, deployment, and retraining) as explicit, testable workflows with retries and branching. |
| LLM and agentic workflow orchestration | The Airflow Common AI Provider orchestrates multi-step LLM and agent workflows, managing branching logic, tool calls into MES/ERP/CMMS/PLM, evaluations, and failure recovery so these workflows run with production-grade reliability. |
| Event-driven AI workflows (plant and product signals) | Event-based scheduling triggers AI pipelines when telemetry, batch lots, QA results, or supplier updates land, not on arbitrary timers. |
| Secure execution where the data lives | Remote Execution keeps execution inside your environment (plant/edge VPC, enterprise cloud, or regulated boundary), while Astro manages orchestration. Data and IP stay local. |
| Observability tied to outcomes | Astro Observe links data quality checks, anomalies, and SLA breaches directly to AI pipelines, so teams can trace bad predictions to pipeline steps and lineage. |
| Cost visibility across AI and data pipelines | Astro Observe ties pipeline execution to compute, warehouse, and GPU usage, so platform teams can see which AI training, inference, and data workloads drive cost spikes and optimize accordingly. |
| Fast iteration with guardrails | Astro Runtime, Astro IDE, and CI/CD provide hardened releases, consistent dev workflows, and safe deployments across teams and plants. |
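
To make "explicit, testable workflows with retries and branching" concrete, here is a minimal plain-Python sketch of the pattern. It is not Airflow or Astro code: the step names, the 0.9 accuracy threshold, and the `flaky_evaluate` helper are hypothetical, chosen only for illustration. In Airflow itself, the same ideas are typically expressed with the task-level `retries` setting and a branching task.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def run_with_retries(step: Callable[[], T], retries: int = 2, delay_s: float = 0.0) -> T:
    """Run `step`, retrying up to `retries` more times if it raises."""
    attempt = 0
    while True:
        try:
            return step()
        except Exception:
            if attempt >= retries:
                raise  # retries exhausted: surface the failure
            attempt += 1
            time.sleep(delay_s)


def choose_next_step(accuracy: float, threshold: float = 0.9) -> str:
    """Branch on the evaluation result: deploy, or send back to retraining."""
    return "deploy" if accuracy >= threshold else "retrain"


def run_lifecycle(evaluate: Callable[[], float]) -> List[str]:
    """Hypothetical lifecycle: features, training, evaluation, then a branch."""
    executed = ["generate_features", "train"]
    accuracy = run_with_retries(evaluate)
    executed.append("evaluate")
    executed.append(choose_next_step(accuracy))
    return executed


calls = {"count": 0}


def flaky_evaluate() -> float:
    """Fails on the first call (simulating a late feature table), then succeeds."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("feature table not ready")
    return 0.93


print(run_lifecycle(flaky_evaluate))
```

Because each step is an ordinary function, the retry and branch behavior can be unit-tested without a model or a plant data feed, which is the practical meaning of "testable" here.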

INITIATIVE TWO Building resilient, adaptive supply chains with end-to-end visibility

Supply chain volatility is structural. McKinsey reports 82% of organizations are affected by new tariffs, while Gartner finds only 29% have the capabilities required for future performance.

Manufacturers are shifting from reactive expediting to predictive, scenario-driven execution, spanning demand sensing, inventory optimization, logistics planning, supplier risk management, and traceability.

What it takes to operationalize supply chain resilience

Signals are fragmented across ERP, MES, WMS, TMS, supplier portals, and spreadsheets. Data quality issues prevent automation, planning outputs never make it into execution, and responses cannot be coordinated across systems and regions.

Supply chain resilience is a workflow problem. Orchestration turns visibility into action.

| What you need | How Astro helps |
| --- | --- |
| Orchestration across ERP, plant systems, suppliers, and cloud | Airflow's ecosystem of 2,100+ integrations orchestrates ingestion and synchronization across the stack, including data warehouses and operational systems. |
| Unified orchestration and transformation for complex analytics | Orchestrate, run, and observe dbt workflows with Cosmos, the open-source standard for seamless dbt orchestration and model-level visibility in Apache Airflow. |
| Diagnose pipeline failures in minutes | Otto, the data engineering agent for Astro, pulls the logs, analyzes the failure, and proposes a fix. Get to the root cause in minutes instead of hours, without manually digging through code and logs. |
| Near-real-time response and dependency management | Event triggers run downstream workflows immediately when upstream data is ready (e.g., a late supplier ASN triggers a replan). |
| Trust in supply-chain data products | Astro Observe enforces freshness, volume, completeness, and schema checks on critical feeds powering planning, allocation, and customer commitments. |
| Traceability with lineage and auditability | Lineage visualization and execution metadata give a defensible path from source signals to decisions and actions. |
| Production-grade reliability from day one | Autoscaling, cross-region DR, and zero-downtime updates deliver a 99.9% uptime SLA, replacing the significant operational overhead of self-managing Airflow clusters. |
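
The four check types named above (freshness, volume, completeness, and schema) can be sketched in a few lines of plain Python. This is an illustration of what such checks test, not Astro Observe's API: the `check_feed` function, the one-hour freshness window, and the sample ASN rows are all assumptions made up for the example.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Sequence


def check_feed(
    rows: List[Dict[str, Optional[object]]],
    required_columns: Sequence[str],
    loaded_at: datetime,
    now: datetime,
    max_age: timedelta = timedelta(hours=1),
    min_rows: int = 1,
) -> List[str]:
    """Return the names of the checks that failed (empty list means healthy)."""
    failures = []
    if now - loaded_at > max_age:
        failures.append("freshness")
    if len(rows) < min_rows:
        failures.append("volume")
    required = set(required_columns)
    if any(required - set(row) for row in rows):
        failures.append("schema")  # a required column is missing entirely
    elif any(row[col] is None for row in rows for col in required_columns):
        failures.append("completeness")  # column present but value is null
    return failures


# Made-up sample data: a supplier ASN feed loaded three hours ago.
now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
asn_rows = [
    {"supplier_id": "S-001", "part_no": "P-42", "eta": "2025-01-03"},
    {"supplier_id": "S-002", "part_no": "P-77", "eta": None},  # missing ETA
]
result = check_feed(
    asn_rows,
    required_columns=["supplier_id", "part_no", "eta"],
    loaded_at=now - timedelta(hours=3),
    now=now,
)
print(result)  # ['freshness', 'completeness']
```

A planning or allocation workflow would typically refuse to run, or run in a degraded mode, whenever the returned list is non-empty.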

Astro in Action

Building Workflow Resilience: Unifying dbt Transformations on Astro with Cosmos

As part of its initiative to improve resilience across its most critical data pipelines, a leading medical equipment manufacturer used Astronomer's Cosmos to move its transformations from dbt Cloud to Astro. As a result, the company has improved developer velocity by 15% and saved over $500,000 annually in redundant cloud costs, all while improving visibility across its pipelines by unifying previously fragmented workflows.

"The cost savings with Cosmos are substantial, but the real value lies in how much more efficient our developers will be. By consolidating everything into a single environment on Astro, we're simplifying our workflows and increasing developer productivity."

Data Team Leader, leading medical equipment manufacturer

Supply Chain Modernization Across Azure, Databricks, and Snowflake

Amid global expansion and acquisitions, one of the world's largest aluminum manufacturers needed a modern orchestration foundation to unify data workflows that support global operations and supply chain decision-making. Legacy schedulers like Control-M lacked scalability and visibility, making it harder to trust operational data and increasing run costs.

The company standardized on Astro for enterprise-grade observability, alerting, Azure compatibility, and support, integrating with core services like Azure DevOps, Databricks, Snowflake, and the Astro CLI. Professional Services helped accelerate the rollout and establish repeatable migration patterns.

The team migrated 176 jobs in five weeks, improved system insight and trust through full observability and alerting, and unlocked ~$600K in savings by eliminating Control-M. With a single orchestration control plane in place, the company is positioned to expand modernization efforts across additional workflow platforms as it scales globally.

INITIATIVE THREE Securing OT/IT and proving digital trust

Manufacturers must modernize without disrupting production. Plants need to adopt cloud and AI while reducing OT attack surfaces, limiting lateral movement, and automating audit evidence and access control. Regulated sectors must prove compliance, not just claim it.

What it takes to operationalize digital trust

OT systems cannot tolerate downtime, security controls are fragmented across plants, and audit trails are inconsistent. Data sovereignty and IP constraints further limit what can move into SaaS environments.

Secure manufacturing transformation depends on orchestrated controls you can prove.

| What you need | How Astro helps |
| --- | --- |
| Deterministic, repeatable security workflows | Code-defined pipelines deployed via CI/CD embed validation, logging, and approvals as enforced steps. |
| Zero-trust ready architecture | Remote Execution keeps sensitive execution and data inside your environment while Astro manages orchestration metadata. |
| Hardened runtime and controlled upgrades | Astro Runtime provides a security-hardened Airflow distribution, timely patches, and controlled image updates. |
| Enterprise access control and auditability | RBAC, SSO/IAM integration, workspace isolation, and audit logging for sensitive workflows across teams and plants. |
| Automated compliance monitoring | With centralized metadata and usage dashboards, Astro helps detect failures, SLA breaches, and anomalies, surfacing deviations in pipeline behavior that affect sensitive processes. |
| Operational visibility across pipelines | Astro Observe provides pipeline-aware monitoring, alerting, and root cause analysis tied to execution context. |
| 24x7 support and commercially backed SLAs | On-call Airflow experts, provided by the engineers who built it. Astronomer's team helps you accelerate adoption, resolve issues faster, and keep mission-critical pipelines running. |

Remote Execution: Cloud Agility, Plant-Level Control

Manufacturers need the freedom to run workflows where they create the most value: in the cloud, in regional environments, and directly on factory-floor infrastructure close to machines, MES, and plant networks. Remote Execution on Astro makes that possible by separating orchestration from execution, so you can run tasks in purpose-built environments (including plant and edge) while still managing everything centrally in Astro.

Security comes with the architecture: sensitive production, quality, and supplier data stays inside your approved boundary, reducing exposure risks tied to moving data into a vendor environment.

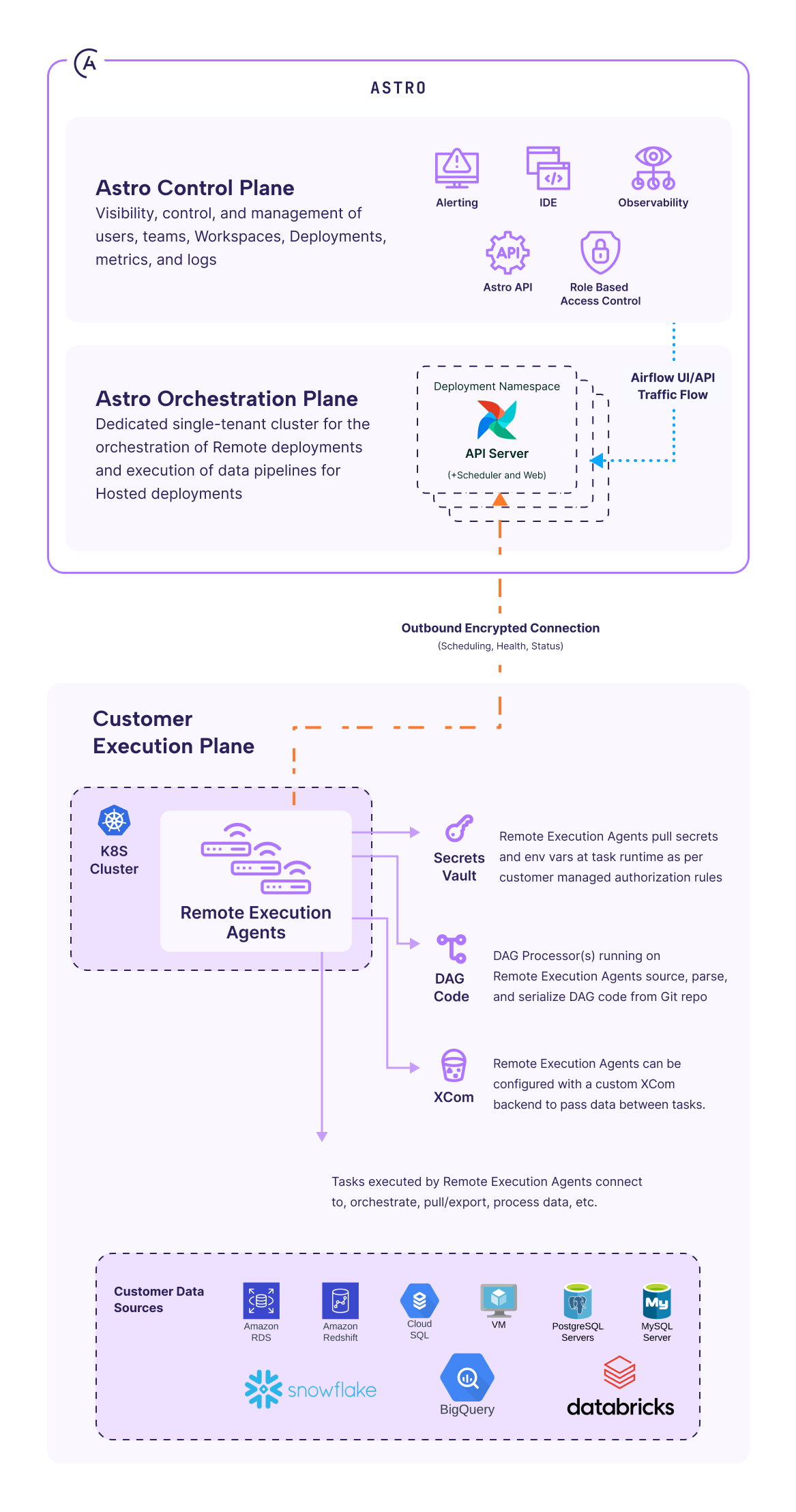

Remote Execution uses a three-plane architecture:

- The control plane manages users and metadata but never sees your data.

- The orchestration plane schedules workflows in a single-tenant environment.

- The execution plane (fully yours) runs the tasks using your infra, secrets, and permissions.

Figure 1: Remote Execution three-plane architecture. The control plane manages metadata, the orchestration plane schedules workflows, and the customer-owned execution plane runs tasks — connected via a single outbound encrypted connection.

Only outbound encrypted connections are used. There is no need for inbound firewall exceptions. Remote Execution Agents pull work, run it locally under customer-managed identities, and report status back to Astro, aligning with zero-trust principles.
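
The pull model described above can be sketched with an in-memory stand-in. This is not the Remote Execution protocol or agent: the `Orchestrator` and `Agent` classes, the task names, and the handlers are hypothetical, and no network is involved. The sketch only shows the direction of flow: the agent asks for work, runs it locally, and sends back a status while the data stays on its side.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict, Optional


@dataclass
class Orchestrator:
    """Stand-in for the remote side: it holds task names and statuses only."""
    pending: Deque[str] = field(default_factory=deque)
    statuses: Dict[str, str] = field(default_factory=dict)

    def next_task(self) -> Optional[str]:
        return self.pending.popleft() if self.pending else None

    def report(self, task_id: str, status: str) -> None:
        self.statuses[task_id] = status


class Agent:
    """Runs inside the customer boundary and initiates every exchange."""

    def __init__(self, orchestrator: Orchestrator, handlers: Dict[str, Callable[[], None]]):
        self.orchestrator = orchestrator
        self.handlers = handlers  # local code with local credentials

    def poll_once(self) -> bool:
        """Pull one task, run it locally, report status. False if queue is empty."""
        task_id = self.orchestrator.next_task()
        if task_id is None:
            return False
        try:
            self.handlers[task_id]()
            status = "success"
        except Exception:
            status = "failed"
        self.orchestrator.report(task_id, status)  # status only, never the data
        return True


local_results: Dict[str, list] = {}  # stays on the plant side


def extract_line_sensor_data() -> None:
    local_results["rows"] = [21.5, 21.7, 22.0]


def score_quality_model() -> None:
    raise RuntimeError("model file missing")


orchestrator = Orchestrator(pending=deque(["extract_line_sensor_data", "score_quality_model"]))
agent = Agent(orchestrator, {
    "extract_line_sensor_data": extract_line_sensor_data,
    "score_quality_model": score_quality_model,
})
while agent.poll_once():
    pass
print(orchestrator.statuses)
```

Since the agent starts each exchange, the orchestrator never needs to open a connection into the plant network, which is why no inbound firewall exception is required in this pattern.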

Bottom line: Astro delivers managed-service agility and visibility while letting manufacturers execute workloads anywhere—including the factory floor—without exposing data, code, or secrets.

You can learn more by downloading our whitepaper: Remote Execution: Powering Hybrid Orchestration Without Compromise.

Astro Private Cloud

For organizations that cannot adopt any managed services, Astro Private Cloud delivers enterprise-grade Airflow-as-a-Service entirely within your own environment. It runs exclusively on customer-managed infrastructure—across private cloud, on-premises, or fully air-gapped deployments—providing complete ownership over data, network boundaries, and security controls.

Astro Private Cloud consolidates fragmented Airflow usage into a centrally governed platform with isolated, multi-tenant deployments. A unified control plane enables teams to standardize orchestration, enforce security and governance policies, and manage multiple Airflow environments while individual teams operate independently within dedicated namespaces.

By combining centralized governance with full infrastructure control, Astro Private Cloud reduces operational overhead, strengthens security and compliance, and enables organizations to reliably scale orchestration across the enterprise.

Note: Astro Private Cloud does not include features specific to the hosted Astro service, such as the Astro IDE and Astro Observe.

INITIATIVE FOUR Standardizing the industrial data foundation (IT/OT convergence + real-time operations)

Most manufacturers have data, but it is trapped in MES, historians, PLCs, ERPs, and cloud silos. The shift is from collecting data to making it operational.

The goal is a shared data foundation that supports cross-plant performance management, real-time decisioning, standardized data products, and faster integration after acquisitions.

What it takes to make industrial data operational

Platform efforts fail when pipelines are built per plant and vendor, event streams remain timer-based, and orchestration is fragmented. Without lineage, failures are slow to diagnose.

Manufacturers do not need more tools. They need a standard control plane.

| What you need | How Astro helps |
| --- | --- |
| A standard orchestration layer across plants and clouds | Astro provides a managed Airflow control plane to unify orchestration patterns across teams, tools, and environments. |
| Event-driven, dependency-aware execution | Event-based and dataset-aware scheduling coordinates real-time and scheduled workflows without brittle polling. |
| Diagnose pipeline failures in minutes | Otto, the data engineering agent for Astro, pulls the logs, analyzes the failure, and proposes a fix. Get to the root cause in minutes instead of hours, without manually digging through code and logs. |
| Integration breadth | Airflow's connector ecosystem orchestrates across warehouses, lakes, databases, and operational systems. |
| Data quality and lineage at the pipeline level | Astro Observe ties data checks and lineage to the exact tasks and Dags producing operational KPIs. |
| Scale without operational overhead | Autoscaling, high availability with cross-region DR, and 24x7 support keep mission-critical operations stable while pipeline volume grows. |
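
The idea behind dataset-aware scheduling is that a downstream workflow runs when its upstream data has actually been updated, rather than on a timer that hopes the data is there. The plain-Python sketch below illustrates that rule only. It is not Airflow's scheduler or API, and the `DatasetTrigger` class and dataset names are hypothetical.

```python
from typing import Iterable, Set


class DatasetTrigger:
    """Fire once every required upstream dataset has reported an update."""

    def __init__(self, required: Iterable[str]):
        self.required = frozenset(required)
        self.updated: Set[str] = set()

    def on_update(self, dataset: str) -> bool:
        """Record an update event; return True when the downstream run should start."""
        if dataset in self.required:
            self.updated.add(dataset)
        if self.updated == self.required:
            self.updated.clear()  # reset so the next cycle needs fresh updates
            return True
        return False


# Hypothetical cross-plant KPI job that needs both feeds before it runs.
trigger = DatasetTrigger(["mes_production_counts", "erp_work_orders"])
events = ["mes_production_counts", "historian_tags", "erp_work_orders", "erp_work_orders"]
fired = [trigger.on_update(name) for name in events]
print(fired)  # [False, False, True, False]
```

The unrelated `historian_tags` event is ignored, and the second `erp_work_orders` update does not fire a run on its own because the MES feed has not refreshed since the last run.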

Astro in Action

Data teams at manufacturers adopt Astro to eliminate the legacy schedulers that often cripple their ability to ship new data products and workflows. Moving from legacy orchestration systems such as AutoSys, Control-M, Informatica, or Apache Oozie to Astro unlocks strategic and operational gains:

- Cut costs by up to 75%. Organizations moving to Astro typically realize major savings through reduced infrastructure, licensing, and operational overhead, freeing budget for innovation.

- Unblock agility and scale with cloud-native orchestration. As a modern orchestration platform, Astro gives teams the flexibility, resilience, and scalability needed to support fast-moving data and AI initiatives without the constraints of legacy tooling and manual overhead.

- Attract and retain top engineering talent. Airflow embodies code-first and open source philosophies. By using Airflow, data teams recruit top talent more easily and onboard faster while avoiding lock-in to niche or proprietary technology.

No matter what workload or legacy orchestration tool your organization is using, Astronomer's Professional Services team can help. Its experts can build an operational framework to smoothly and safely migrate your workloads to Astro.

Qualcomm Standardizes Semiconductor Design Workflows with Airflow

Qualcomm's Oryon Custom CPU team needed to standardize and scale chip design and verification workflows that run at extreme HPC scale across multiple global data centers and lab hardware. A Jenkins-heavy approach created brittle infrastructure, high maintenance overhead, and unversioned "freestyle" workflows that were hard to reuse, hard to audit, and painful to operate as failures and throughput demands increased.

The company's engineering team replaced Jenkins with Apache Airflow, using Python-authored, versioned pipelines and a web UI that improved visibility and debugging. Airflow integrates with SLURM and LSF, launching dynamic Celery workers on demand with explicit resource leases, plus housekeeping automation. To escape "data center handcuffs," they adopted EdgeExecutor, routing work to wherever compute is available and extending the same control plane into the lab for post-silicon tests.

Airflow now orchestrates millions of tasks per day, improves reliability and reuse across teams, and enables consistent execution across heterogeneous, multi-site infrastructure. Learn more from Qualcomm's Airflow Summit session.

Consolidating Legacy Schedulers into a Unified Orchestration Control Plane

A privately held American multinational corporation involved in food, agriculture, and industrial products was modernizing its data platform and needed reliable orchestration across hundreds of teams. A mix of legacy schedulers (including Oozie and AutoSys) and isolated open source Airflow deployments created fragmented operations, high cost to run, and limited visibility when pipelines failed.

The company standardized on Astro with Astro Observe and a central enablement model to consolidate scheduling and support 100+ teams on a single orchestration foundation. With Airflow and Snowflake at the core, teams improved issue visibility and resolution, reduced operational risk, and retired multiple schedulers to drive long-term cost savings while scaling supply chain, pricing, and customer insight workloads.

INITIATIVE FIVE Decarbonizing operations and industrializing sustainability reporting

Sustainability is now a competitiveness issue. Manufacturers must reduce energy intensity and waste while producing defensible sustainability reporting across operations and suppliers.

Why this becomes a data-and-workflow problem

Sustainability data spans finance, operations, suppliers, and facilities. Manual reporting creates inconsistency, while inputs that arrive on different schedules make calculations brittle. Improvement requires closed loops: measure, diagnose, act, verify.

| What you need | How Astro helps |
| --- | --- |
| Repeatable sustainability data products | Orchestrate ingestion, transformations, and calculations as versioned workflows with CI/CD and rollback. |
| Evidence, lineage, and auditability | Astro Observe lineage and pipeline logs create a defensible chain from raw inputs to reported metrics. |
| Data quality enforcement | Freshness and completeness checks prevent stale supplier or meter feeds from contaminating reporting. |
| Hybrid execution aligned to data boundaries | Remote Execution runs workloads directly inside your manufacturing facilities. |
| Operational response loops | Event-driven workflows trigger remediation: anomalies in energy use or scrap rates can kick off diagnostics and corrective actions. |
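
An operational response loop of this kind can be sketched as an anomaly check that calls a remediation hook. The plain-Python example below is an assumption-laden illustration, not an Astro feature: the z-score rule, the threshold of 3.0, the sample meter readings, and the `open_diagnostic_ticket` callback are all hypothetical.

```python
from statistics import mean, stdev
from typing import Callable, List


def is_anomalous(history: List[float], reading: float, z_threshold: float = 3.0) -> bool:
    """Flag a reading that sits more than `z_threshold` deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    sigma = stdev(history)
    if sigma == 0:
        return reading != history[0]
    return abs(reading - mean(history)) / sigma > z_threshold


def on_meter_reading(
    history: List[float], reading: float, remediate: Callable[[float], None]
) -> bool:
    """Event handler: trigger remediation on anomalies, otherwise extend the baseline."""
    if is_anomalous(history, reading):
        remediate(reading)
        return True
    history.append(reading)
    return False


tickets: List[float] = []


def open_diagnostic_ticket(reading: float) -> None:
    """Hypothetical remediation hook; a real one might start a diagnostic workflow."""
    tickets.append(reading)


# Made-up kWh readings for one production line.
baseline = [100.0, 102.0, 98.0, 101.0, 99.0]
normal = on_meter_reading(baseline, 101.0, open_diagnostic_ticket)
spike = on_meter_reading(baseline, 130.0, open_diagnostic_ticket)
print(normal, spike, tickets)  # False True [130.0]
```

Anomalous readings are kept out of the baseline so that a sustained fault does not gradually redefine what counts as normal.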

Airflow in Action

Panasonic Energy of North America (PENA), a lithium-ion battery manufacturer supporting the EV transition, needed to scale operational analytics as production grew. Their early setup relied on Windows Task Scheduler, ad hoc Python scripts running on a factory-floor PC, and manual CSV workflows, creating reliability and scalability issues. They standardized on Airflow, moving to modular pipelines with task groups and GitLab CI/CD, plus automated maintenance and proactive monitoring with daily failure reporting. Integrated into their operational analytics stack with tight-latency dashboards, along with a roadmap to streaming and Kubernetes for MES and broker-driven data, Airflow became a durable foundation for scaling EV battery manufacturing and sustainable operations. Read more in the Airflow in Action blog post.

Conclusion: Orchestration as manufacturing's control plane

AI, supply chains, security, data platforms, and sustainability look like separate initiatives. In practice, they share the same requirements: trusted data, reliable workflows, and governance built into execution.

Manufacturers that win this cycle will treat orchestration as the control plane for their operations. Apache Airflow provides the open standard. Astro operationalizes it with enterprise security, observability, and hybrid deployment—so you can build, run, and observe workflows anywhere.

→ Build a trusted, future-ready data stack today

Run an Astro TCO analysis and get in touch with our experts today to get results faster.
