Note: There is a newer version of this webinar available: Programmatic workflow management with the Airflow and Astro APIs

Webinar links:

- GitHub repo: https://github.com/astronomer/airflow-api-webinar/tree/main

- Airflow API docs: https://airflow.apache.org/docs/apache-airflow/stable/stable-rest-api-ref.html#section/Overview

- Registry: https://registry.astronomer.io/

Agenda:

- What is Apache Airflow®?

- Apache Airflow® 2

- Airflow API: How it Used to Be

- Airflow API

- Using the API

- Some Common Use Cases

- Event-Based DAGs with Remote Triggering

- Demo

- Appendix: API Calls Used

What is Apache Airflow®?

Apache Airflow® is one of the world’s most popular open-source data orchestrators — a platform that lets you programmatically author, schedule, and monitor your data pipelines.

Apache Airflow® was created by Maxime Beauchemin in late 2014. It was brought into the Apache Software Foundation’s Incubator Program in March 2016, and has seen growing success since. In 2019, Airflow was announced as a Top-Level Apache Project, and it is now considered the industry’s leading workflow orchestration solution.

Key benefits of Airflow:

- Proven core functionality for data pipelining

- An extensible framework

- Scalability

- A large, vibrant community

Apache Airflow® 2

Airflow 2 was released in December 2020. The new version is faster, more reliable, and more performant at scale. Increased adoption across a wide range of use cases is now possible thanks to the new features, which include:

- Stable REST API: A new, fully stable REST API with increased functionality and a robust authorization and permissions framework.

- TaskFlow API: An API that makes data sharing between tasks easier using XCom and provides decorators for cleanly writing your DAGs.

- Deferrable Operators: Operators that reduce infrastructure costs by releasing worker slots during long-running tasks.

- HA Scheduler: A highly available scheduler that eliminates a single point of failure, reduces latency, and allows for horizontal scalability.

- Improved UI/UX: A cleaner, more functional UI with additional views and capabilities. Check out the Calendar and DAG Dependencies Views!

- Timetables: Timetables that allow you to define your own custom schedules, going beyond Cron where needed.

Airflow API

How it Used to Be:

Before Airflow 2.0, the Airflow API was experimental, and neither officially supported nor well documented. It was only used to trigger DAG runs programmatically.

How It Is Now:

The new full REST API is:

- Stable: Fully supported by Airflow and well documented

- Based on Swagger/OpenAPI spec: Easily accessible by third parties

- Secure: Authorization capabilities parallel to Airflow UI

- Functional: Includes CRUD operations on all Airflow resources

What Can You Do With It?

The REST API generally supports almost anything you can do in the Airflow UI, including:

- Trigger new DAG runs and rerun DAGs or tasks

- Delete DAGs

- Set connections and variables

- Test for import errors

- Create pools

- Monitor the status of your metastore and scheduler

- Manage users

- And more; the API documentation lists the full set of endpoints.

Apart from triggering DAG runs, none of these were possible with the old experimental API.
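As one illustration of the monitoring item in the list above, the following is a minimal sketch that reads a health payload and reports which components are not healthy. It assumes the local base URL used in this webinar's appendix and a payload with `metadatabase` and `scheduler` entries that each carry a `status` field; the helper names are made up for this example, and depending on your deployment the endpoint may also need credentials.

```python
import json
import urllib.request

# Assumption: a local Airflow webserver, as in the webinar's appendix.
BASE_URL = "http://localhost:8080/api/v1"

# The two components this sketch checks (metastore and scheduler).
COMPONENTS = ("metadatabase", "scheduler")


def unhealthy_components(health):
    """Return the checked components whose status is not 'healthy'."""
    return [
        name
        for name in COMPONENTS
        if health.get(name, {}).get("status") != "healthy"
    ]


def fetch_health(base_url=BASE_URL):
    """GET the health endpoint and decode its JSON body.

    Needs a running Airflow instance, so it is not called in this sketch.
    """
    with urllib.request.urlopen(f"{base_url}/health") as response:
        return json.load(response)


# Hand-written sample payload, used here instead of a live call.
sample = {
    "metadatabase": {"status": "healthy"},
    "scheduler": {"status": "unhealthy", "latest_scheduler_heartbeat": None},
}
print(unhealthy_components(sample))
```

In a real check you would pass the result of `fetch_health()` instead of the sample dictionary and alert when the returned list is not empty.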

Using the API

Authentication is required with the Airflow REST API.

- Airflow supports many forms of authentication, including Kerberos, Basic Auth, and custom ("roll your own") backends.

- The backend is controlled by the auth_backend setting in the api section of the Airflow configuration.

- The default is to deny all access.

- To check what is currently set, use:

airflow config get-value api auth_backend

- The Airflow docs have instructions for setting up each backend.

- For Astronomer customers, the auth backend is already set up; you only need to create a service account.
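To make the Basic Auth option concrete, here is a small sketch that attaches credentials to a request using only the Python standard library. It assumes the Basic Auth backend is enabled and uses the local `admin:admin` credentials and the providers URL from this webinar's appendix; the function names are illustrative, and other backends such as Kerberos need a different mechanism.

```python
import base64
import urllib.request


def basic_auth_header(username, password):
    """Encode credentials in the form HTTP Basic Auth expects."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def build_get(url, username, password):
    """Build (but do not send) an authenticated GET request."""
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", basic_auth_header(username, password))
    request.add_header("Accept", "application/json")
    return request


# Assumption: local Airflow started with the Astronomer CLI.
request = build_get("http://localhost:8080/api/v1/providers", "admin", "admin")
print(request.get_header("Authorization"))
```

Passing the request to `urllib.request.urlopen` would send it. Outside of local development, read the credentials from a secret store or environment variables rather than hard-coding them.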

Common Use Cases

The API is commonly used for things like:

- Programmatically rerunning tasks and DAGs

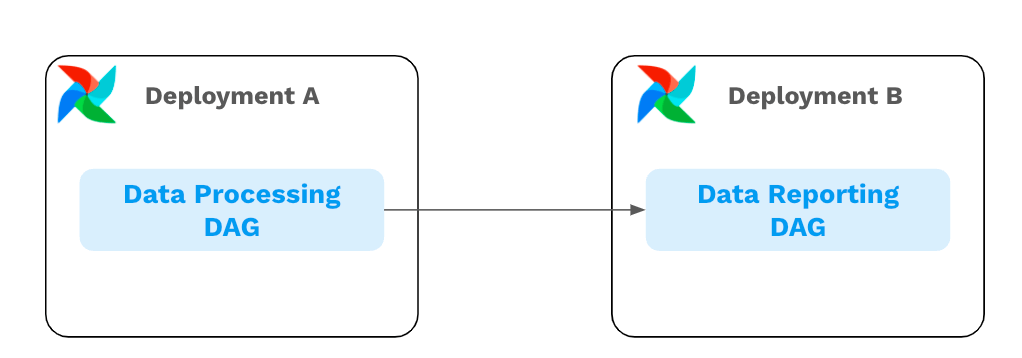

- Implementing cross-deployment DAG dependencies

- Creating event-based DAGs with remote triggering

Event-Based DAGs with Remote Triggering

The API can be used to trigger DAGs on an ad-hoc basis. At Astronomer, we often see use cases such as:

- Your website has a form page for potential customers to fill out. After the form is submitted, you have a DAG that processes the data. Building a POST request to the dagRuns endpoint into your website backend will trigger that DAG as soon as it’s needed.

- Your company’s data ecosystem includes many AWS services and Airflow for orchestration. Your DAG should run when a particular AWS state is reached, but sensors don’t exist for every service you need to monitor. Rather than writing your own sensors, you use AWS Lambda to call the Airflow API.

- Your team has analysts who need to run SQL jobs in an ad-hoc manner, but don’t know Python or how to write DAGs. You’ve built an Airflow plugin that allows the analysts to input their SQL, and a templated DAG is run for them behind the scenes. The API allows them to trigger the DAG run through the plugin.
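The first two scenarios come down to the same call: a POST to the dagRuns endpoint from whatever system observes the event. The sketch below shows one way a website backend or a Lambda handler might build that request. The DAG id `process_form_submission`, the `form_id` field, and the local `admin:admin` Basic Auth credentials are all assumptions made for illustration.

```python
import base64
import json
import urllib.request

# Assumptions: local Airflow from the Astronomer CLI with Basic Auth enabled,
# and a hypothetical DAG id. Replace all of these for a real deployment.
BASE_URL = "http://localhost:8080/api/v1"
DAG_ID = "process_form_submission"  # hypothetical


def build_trigger_request(dag_id, conf, username="admin", password="admin"):
    """Build (but do not send) a POST request that triggers one DAG run."""
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return urllib.request.Request(
        url=f"{BASE_URL}/dags/{dag_id}/dagRuns",
        # "conf" carries event data that the DAG run can read.
        data=json.dumps({"conf": conf}).encode("utf-8"),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def trigger_dag(dag_id, conf):
    """Send the request; needs a running Airflow, so it is not called here."""
    with urllib.request.urlopen(build_trigger_request(dag_id, conf)) as response:
        return json.load(response)


request = build_trigger_request(DAG_ID, {"form_id": 42})
print(request.get_method(), request.full_url)
```

Keeping request construction separate from sending makes the event handler easy to unit test without a live Airflow instance.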

Demo

The demo starts at minute 10 of the video and covers:

- The documentation

- Setting up authorization

- Astronomer CLI (open-source)

- Common use cases of the Airflow API

- Real-world scenarios

- Implementing cross-deployment DAG dependencies

All the code from the demo is available in this webinar’s GitHub repository.

Appendix: API Calls Used

We made the following Airflow API calls using Postman during this webinar (to Airflow running locally on localhost:8080 using the Astronomer CLI):

- Get Providers: GET request to http://localhost:8080/api/v1/providers

- Get Import Errors: GET request to http://localhost:8080/api/v1/importErrors

- Trigger a DAG Run: POST request to http://localhost:8080/api/v1/dags/random_failures/dagRuns
  - Body: {"execution_date": "2022-02-03T14:15:22Z"}

- Get DAG Runs: GET request to http://localhost:8080/api/v1/dags/random_failures/dagRuns

- Rerun Tasks: POST request to http://localhost:8080/api/v1/dags/random_failures/clearTaskInstances
  - Body: {"dry_run": false, "task_ids": ["random-task"], "start_date": "2022-02-03T17:29:00.056990+00:00", "end_date": "2022-02-03T17:52:59.147853+00:00", "only_failed": true, "only_running": false, "include_subdags": false, "include_parentdag": false, "reset_dag_runs": true}

Note: when running locally with the Astronomer CLI, all these requests can use Basic Auth with admin:admin credentials.
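If you would rather script the rerun call than use Postman, the request body can be generated in Python. The sketch below rebuilds the body of the Rerun Tasks call from timezone-aware datetimes; the function name is illustrative. Unlike the webinar call, it defaults to `dry_run=True`, which asks the API to report which task instances would be cleared without changing them.

```python
import json
from datetime import datetime, timezone


def clear_failed_body(task_ids, start, end, dry_run=True):
    """Build the JSON body for clearTaskInstances, limited to failed tasks."""
    return {
        "dry_run": dry_run,
        "task_ids": list(task_ids),
        # isoformat() on an aware datetime yields e.g. 2022-02-03T17:29:00.056990+00:00
        "start_date": start.isoformat(),
        "end_date": end.isoformat(),
        "only_failed": True,
        "only_running": False,
        "include_subdags": False,
        "include_parentdag": False,
        "reset_dag_runs": True,
    }


body = clear_failed_body(
    ["random-task"],
    start=datetime(2022, 2, 3, 17, 29, 0, 56990, tzinfo=timezone.utc),
    end=datetime(2022, 2, 3, 17, 52, 59, 147853, tzinfo=timezone.utc),
)
print(json.dumps(body, indent=2))
```

Once the dry run lists the task instances you expect, call the function again with `dry_run=False` and POST the result to the clearTaskInstances endpoint of the DAG.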