Airflow in Action: Kaiser Permanente's AI Pipelines Detecting Disease Before Clinicians Can


Kaiser Permanente's Division of Research runs complex AI and ML workloads entirely on-premises, using Apache Airflow® as the connective tissue across its Kubernetes-based infrastructure. In his Airflow Summit session, Lawrence Gerstley, Director of Data Science, walks through the real-world use cases, architectural decisions, and hard-won lessons from deploying Airflow in a regulated healthcare research environment. Here is a recap of the talk.

60 Years of Data. One Mission.

Kaiser Permanente is one of the largest healthcare organizations in the United States, serving over 12 million members across an integrated care model spanning insurance, hospitals, and clinical care. The Division of Research, founded in 1961 and operating out of Northern California, functions as the company's internal research institution. Its output is peer-reviewed science, spanning behavioral health, cancer, radiology, obstetrics, nephrology, and more.

The division employs over 65 research scientists and biostatisticians, supported by 17 fellows and a broader staff of roughly 600. Its early adoption of the electronic health record means it holds longitudinal patient data spanning 60-plus years, a dataset depth most research institutions can only aspire to.

Public Cloud Is Not an Option

The IT and Data Science teams provide centralized computational services to all researchers and their staff. That platform, called the Advanced Computational Infrastructure (ACI), is built on multiple on-premises Kubernetes clusters with petabytes of storage, large CPU and RAM allocations, and significant GPU capacity.

Kaiser Permanente runs everything on-premises by deliberate choice. Although PHI-approved cloud pathways exist, the organization is unwilling to expose patient data to the risk of a cloud breach. That constraint shapes every architectural decision, including the choice to run LLMs, image analysis pipelines, and large-scale ML workloads entirely within its own infrastructure.

Airflow: The Key to Research Workflows

Kaiser Permanente uses Airflow to democratize access to compute resources across Kubernetes, without requiring researchers to understand Kubernetes directly. The practical impact is significant.

Researchers submit workloads through parameterized, templated Dags. A generalized Dag accepts a script location, a Docker image, a JSON parameter string, and resource requests (CPUs, GPUs, RAM). Airflow handles all the Kubernetes plumbing. This lets analysts run iterative experiments with different parameters and data sources without writing a single line of Kubernetes configuration.
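To make the idea concrete, here is a minimal, library-free sketch of the translation step such a generalized Dag might perform: turning a researcher's inputs into a Kubernetes-style pod specification. The names `JobRequest` and `build_pod_spec`, the field names, and the use of the `nvidia.com/gpu` resource key are assumptions made for illustration; the talk recap does not describe the team's actual implementation.

```python
import json
from dataclasses import dataclass


@dataclass
class JobRequest:
    """Hypothetical shape of the parameters a researcher submits."""
    script_path: str   # location of the script to run
    image: str         # Docker image to run it in
    params_json: str   # JSON parameter string handed to the script
    cpus: int = 1
    gpus: int = 0
    ram_gb: int = 4


def build_pod_spec(req: JobRequest) -> dict:
    """Translate a request into a Kubernetes-style pod spec dictionary."""
    # Parse the JSON up front so malformed parameters fail before scheduling.
    params = json.loads(req.params_json)
    if not isinstance(params, dict):
        raise ValueError("params_json must encode a JSON object")

    resources = {"cpu": str(req.cpus), "memory": f"{req.ram_gb}Gi"}
    if req.gpus:
        # Assumes NVIDIA GPUs exposed through the standard device plugin key.
        resources["nvidia.com/gpu"] = str(req.gpus)

    return {
        "image": req.image,
        "command": ["python", req.script_path],
        "args": ["--params", json.dumps(params, sort_keys=True)],
        "resources": {"requests": dict(resources), "limits": dict(resources)},
    }
```

In a real deployment, values like these would presumably be passed to whatever operator launches the pod (for example, the Kubernetes provider's pod operator); the source does not say which one the team uses. The point of the pattern is that the researcher only ever touches the four inputs, never the pod spec.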

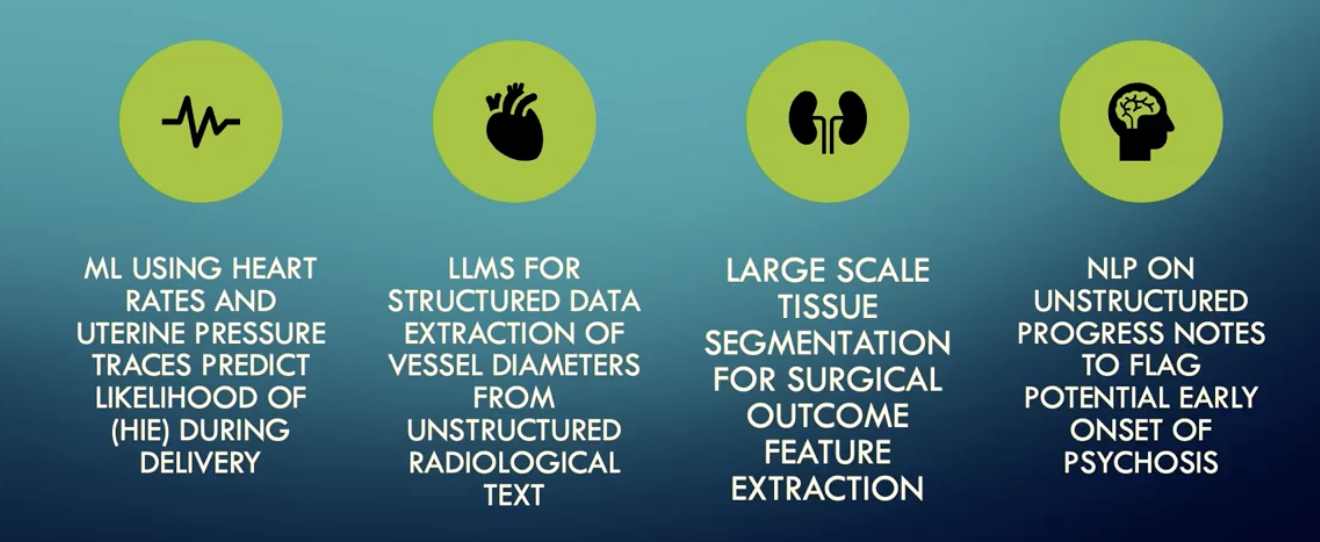

Figure 1: Key Airflow-orchestrated AI and ML workloads at Kaiser Permanente's Division of Research. Image source.

The AI and ML use cases are substantial. The IT and Data Science departments use Airflow-orchestrated pipelines to process 600,000 delivery records and train a model that predicts the likelihood of hypoxic-ischemic encephalopathy during deliveries, before clinicians can detect it. LLMs extract maximal vessel diameters from unstructured radiology notes and record them as structured data in an aneurysm registry. Large-scale CT tissue segmentation pipelines quantify adipose tissue as features for statistical models. NLP pipelines analyze patient progress notes to identify early signs of psychosis.

Beyond ML, Airflow powers text and image de-identification at scale across terabytes of unstructured data, CT and MRI image retrieval from clinical PACS archives, and ETL workflows moving data from warehouses into research data lakes. During the pandemic, the team built and deployed a COVID surge prediction Dag rapidly, delivering daily forecasts to an operational dashboard to support staffing and supply decisions.

For large-scale compute jobs, Airflow spins up ephemeral Spark and H2O clusters inside Kubernetes, attaches workloads to them, and tears them down on completion. A custom cluster sensor monitors availability and health before workloads attach.
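The create, wait, attach, tear-down lifecycle described above can be sketched in plain Python. This is an illustration under stated assumptions, not the team's code: `FakeClusterClient` stands in for whatever API actually provisions Spark or H2O clusters, and `wait_until_healthy` mimics the polling behavior of a sensor without using Airflow itself.

```python
import time
from contextlib import contextmanager


class FakeClusterClient:
    """Hypothetical stand-in for a cluster provisioning API."""

    def __init__(self, polls_until_ready: int = 2):
        self._polls_left = polls_until_ready
        self.events = []

    def create(self, name):
        self.events.append(f"create:{name}")

    def is_healthy(self, name):
        # Reports healthy only after a fixed number of polls.
        self._polls_left -= 1
        return self._polls_left <= 0

    def delete(self, name):
        self.events.append(f"delete:{name}")


def wait_until_healthy(client, name, poke_interval=0.0, timeout=5.0):
    """Sensor-style polling: return once the cluster reports healthy."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if client.is_healthy(name):
            return
        time.sleep(poke_interval)
    raise TimeoutError(f"cluster {name!r} not healthy after {timeout}s")


@contextmanager
def ephemeral_cluster(client, name):
    """Create a cluster, wait for health, and always tear it down."""
    client.create(name)
    try:
        wait_until_healthy(client, name)
        yield name
    finally:
        # Runs on success, job failure, and health-check timeout alike.
        client.delete(name)


def run_job(client, name, job):
    """Attach a workload to an ephemeral cluster and return its result."""
    with ephemeral_cluster(client, name) as cluster:
        client.events.append(f"attach:{cluster}")
        return job(cluster)
```

The `finally` clause is the important design choice: the cluster is removed even when the workload raises, so failed experiments do not leave compute allocated.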

What's Next

Kaiser Permanente is actively upgrading to Airflow 3 and piloting daily LLM inference pipelines to identify patients showing early signs of aortic aneurysms from radiology notes. The team is working to expand Airflow adoption across the division and is exploring LLM-assisted Dag authoring to lower the barrier further.

Lawrence covers a lot of ground in this talk, including a discussion of multi-tenancy challenges in a shared Airflow deployment and the CI/CD-based workaround the team built using GitLab and a custom Python linter to enforce access controls at merge time. Catch the full replay of Airflow Uses in an On-Prem Research Setting to see how the company is building one of the more sophisticated on-prem Airflow deployments in healthcare research.
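As a rough illustration of what a merge-time linter of this kind could look like, the sketch below uses Python's standard `ast` module to check that a Dag file only targets Kubernetes namespaces permitted for its team. The rule itself, the `ALLOWED_NAMESPACES` policy table, and the function name `lint_dag_source` are all hypothetical; the recap says only that the team uses GitLab and a custom Python linter, not which checks it performs.

```python
import ast

# Hypothetical policy: which Kubernetes namespaces each team may target.
ALLOWED_NAMESPACES = {
    "oncology": {"oncology-research"},
    "radiology": {"radiology-research", "imaging-shared"},
}


def lint_dag_source(source: str, team: str) -> list[str]:
    """Return violation messages for namespace= keywords outside the team's set."""
    allowed = ALLOWED_NAMESPACES.get(team, set())
    violations = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        for kw in node.keywords:
            if kw.arg != "namespace":
                continue
            value = kw.value
            is_literal = isinstance(value, ast.Constant) and isinstance(value.value, str)
            if not is_literal:
                # Dynamic values cannot be verified statically, so reject them.
                violations.append(
                    f"line {value.lineno}: namespace must be a string literal"
                )
            elif value.value not in allowed:
                violations.append(
                    f"line {value.lineno}: namespace {value.value!r} "
                    f"not allowed for team {team!r}"
                )
    return violations
```

A CI job could run a check like this on every changed Dag file and exit non-zero when the list is non-empty, which would block the merge. Because it inspects source code without executing it, the check is safe to run on untrusted branches.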

Running Airflow in Regulated Environments? Astronomer Can Help.

Kaiser Permanente's constraints are not unique. Many organizations want the operational benefits of managed Airflow but cannot move sensitive data or workloads outside their environment due to data residency, sovereignty, or contractual obligations. Astronomer is built for exactly that reality.

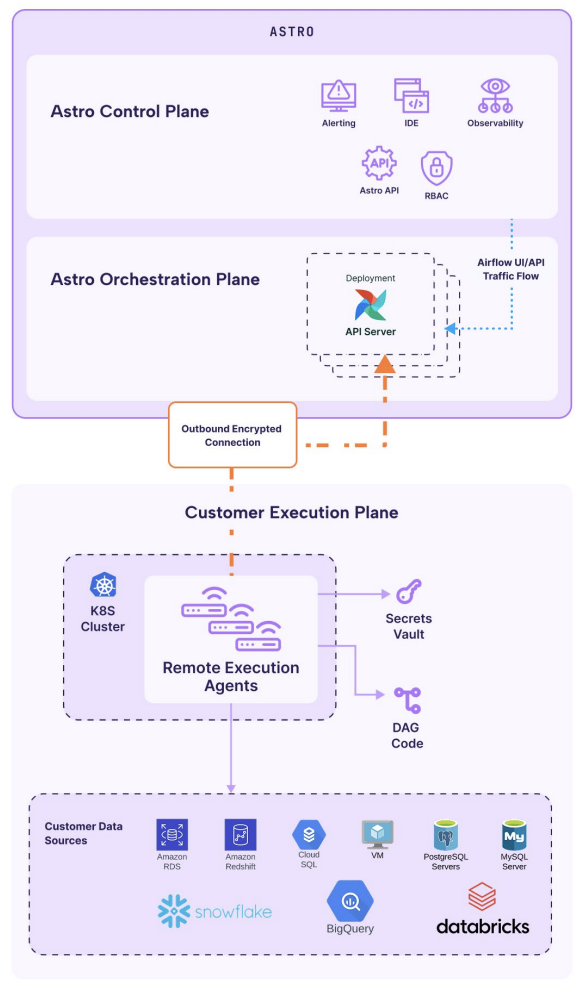

For teams that can adopt cloud infrastructure but need data and execution to stay within their own environment, Remote Execution, available with the Astro managed Airflow service, cleanly separates orchestration from execution. Astronomer manages the Airflow control plane, handling scaling, reliability, and security, while all task execution runs inside your own infrastructure using your secrets, IAM, and workload identities. Only task execution results, health statistics, and scheduling metadata are sent back to Astro. Data and code never leave your environment. All communication is outbound-only and encrypted, with no inbound firewall exceptions required. You can learn more by downloading our whitepaper Remote Execution: Powering Hybrid Orchestration Without Compromise.

Figure 2: Stepping through the architecture and traffic flow of Astro's Remote Execution.

For organizations that require complete infrastructure ownership, Astro Private Cloud delivers enterprise-grade Airflow entirely within your own environment, whether that is private cloud, on-premises, or fully air-gapped. It consolidates fragmented Airflow deployments into a centrally governed platform, giving teams isolated multi-tenant environments while enforcing consistent security and governance policies across the organization.

Kaiser Permanente built a sophisticated, compliance-first Airflow deployment entirely on its own terms. Astronomer exists to give every organization that needs to orchestrate on its own terms the same freedom, whether that means keeping execution inside your own cloud environment or running Airflow entirely within your own walls.
