
Note: To learn more about how to scale Airflow, check out our guide, "Scaling Airflow to optimize performance."

1. Key Points About Scaling Airflow

- Virtually unlimited scaling potential

- Use CeleryExecutor and KubernetesExecutor

- Tune parameters to fit your needs

- Easy to scale to more capacity

- Aggregate logging is important

2. High-Level Steps to Scale Airflow

3. Why Scale Apache Airflow®?

- Because workload outgrows your initial infrastructure

- More DAGs, more Tasks

- More intensive individual tasks

- Because your core Airflow components need more durability

- To prepare for more DAG runs and compute load

- To benefit from elasticity — save money by scaling as needed

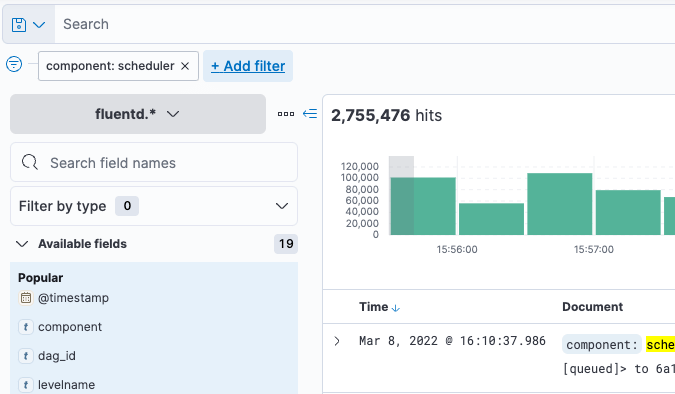

4. Symptoms That Mean You’re Ready to Scale

- Many tasks stuck in Queued or Scheduled state

- Unacceptable latency between tasks

- Missing SLAs

- High resource usage on Scheduler or Webserver

- Out of Memory (OOM) errors on Tasks
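The first symptom above can be turned into a rough number. The following is a minimal sketch, assuming you already have a snapshot of task instance states (for example, exported from the metadata database); the function name `waiting_share` and the sample data are hypothetical.

```python
from collections import Counter

# States treated as "waiting" in this sketch; the names follow Airflow's
# lowercase task instance states.
WAITING_STATES = {"queued", "scheduled"}


def waiting_share(task_states):
    """Return the fraction of task instances that are queued or scheduled."""
    counts = Counter(task_states)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    waiting = sum(counts[state] for state in WAITING_STATES)
    return waiting / total


# Hypothetical snapshot of task instance states.
snapshot = ["running"] * 4 + ["queued"] * 10 + ["scheduled"] * 6
share = waiting_share(snapshot)
print(f"{share:.0%} of task instances are waiting")
```

A share that stays high across several snapshots suggests the deployment is short on task slots rather than briefly busy; what counts as "high" depends on your workload.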

Principles of Scaling Systems

5. Basics of Scaling Systems

- Vertical Scaling

- Increase the size of an instance (RAM, CPU, etc.)

- Adds more power to an existing worker

- Gives individual tasks more horsepower

- Gets very expensive, very quickly

- If vertical scaling hits its limit, consider delegating work to a dedicated distributed processing engine such as Spark, Dask, or Ray

- Horizontal Scaling

- Increase the number of instances (each adding its own RAM, CPU, etc.)

- Adds more nodes to the cluster

- Increases maximum number of tasks and DAGRuns that the system can handle

- Fits Airflow’s orchestration model

- The Celery and Kubernetes executors are well designed for horizontal scaling

Scaling Airflow as a Distributed Platform

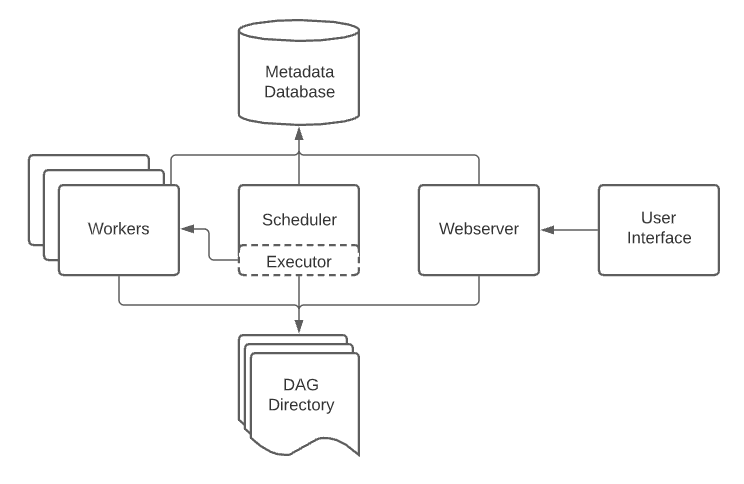

6. Scaling with CeleryExecutor

https://airflow.apache.org/docs/apache-airflow/stable/executor/celery.html

- Allows for easy horizontal scaling

- Runs worker processes that execute TaskInstances

- To scale, add a new worker process

- Can be on a new node or an existing node

- Workers coordinate through the Celery broker and the metadata database

- $AIRFLOW_HOME on a new worker should look identical to $AIRFLOW_HOME on the other worker nodes
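To make "add a new worker process" concrete, here is a minimal sketch of the capacity arithmetic. It assumes a simplified model in which each Celery worker contributes `worker_concurrency` slots and the total is capped by `parallelism`; pools and per-DAG limits are ignored, and the function name and numbers are illustrative only.

```python
def celery_task_slots(worker_count, worker_concurrency, parallelism):
    """Estimate how many tasks can run at once in a Celery deployment.

    Simplified model: each worker contributes `worker_concurrency` slots,
    and the total is capped by `parallelism`. Pools and per-DAG limits
    are deliberately left out.
    """
    return min(worker_count * worker_concurrency, parallelism)


# Illustrative values, not recommendations.
for workers in (1, 2, 4):
    slots = celery_task_slots(workers, worker_concurrency=16, parallelism=32)
    print(f"{workers} worker(s): up to {slots} concurrent tasks")
```

In this model, going from two to four workers changes nothing because `parallelism` is the binding limit, which is why adding workers and tuning parameters usually go together.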

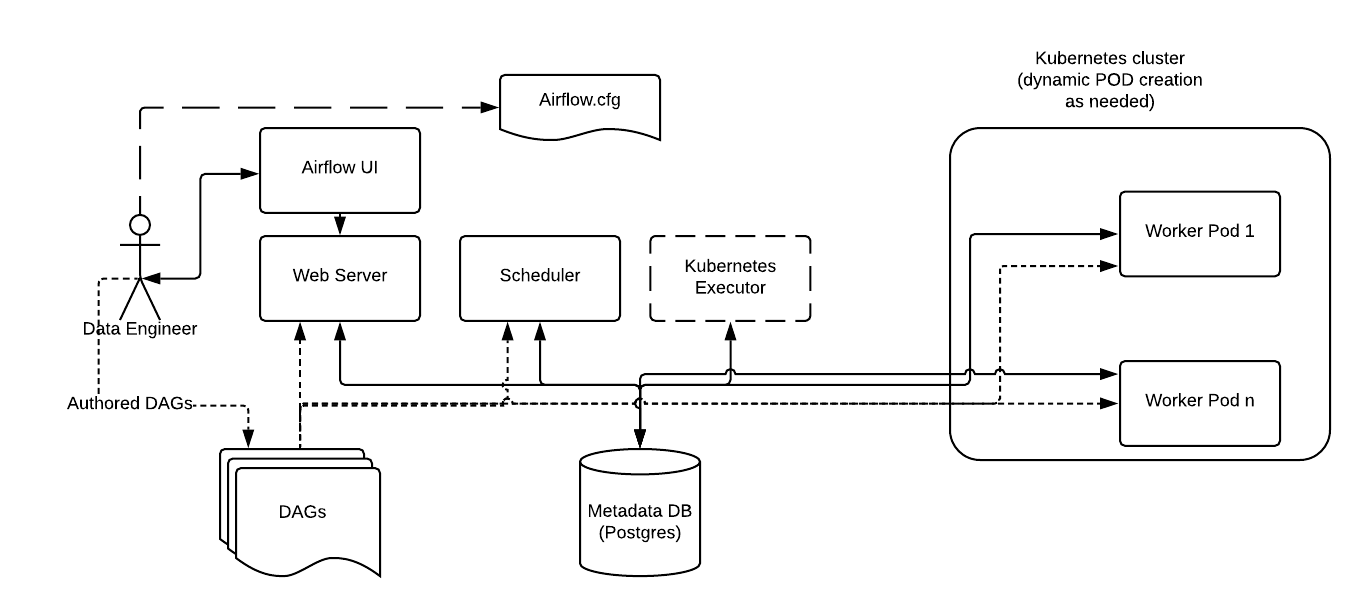

7. Scaling with KubernetesExecutor

https://airflow.apache.org/docs/apache-airflow/stable/executor/kubernetes.html

- TaskInstances run on Kubernetes pods

- TaskInstance pods are ephemeral

- Each task gets its own pod

- No long-running worker processes

Parameter Tuning when Scaling Airflow

https://airflow.apache.org/docs/apache-airflow/stable/configurations-ref.html

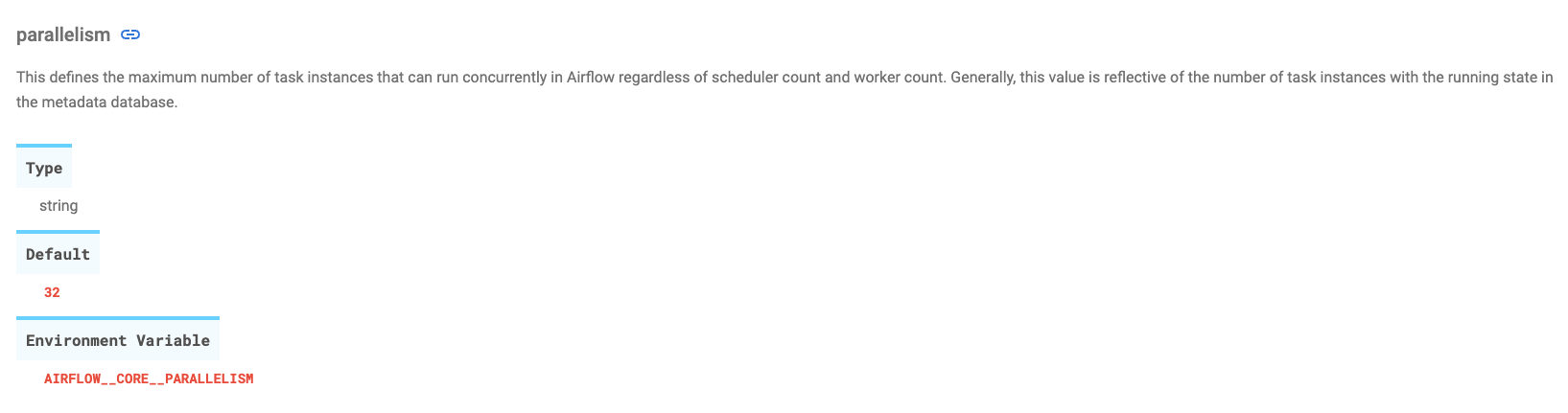

- parallelism

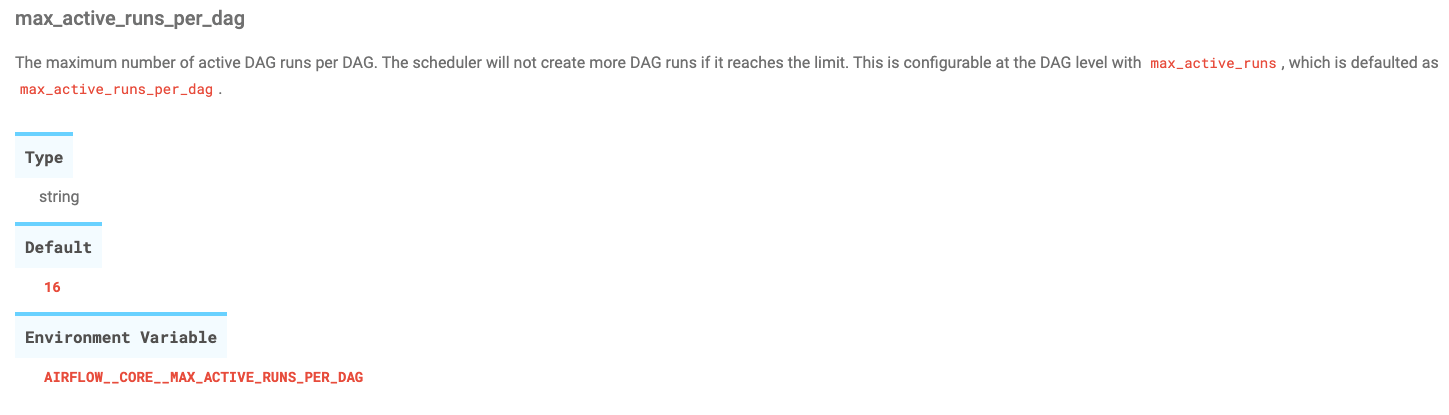

- max_active_runs_per_dag

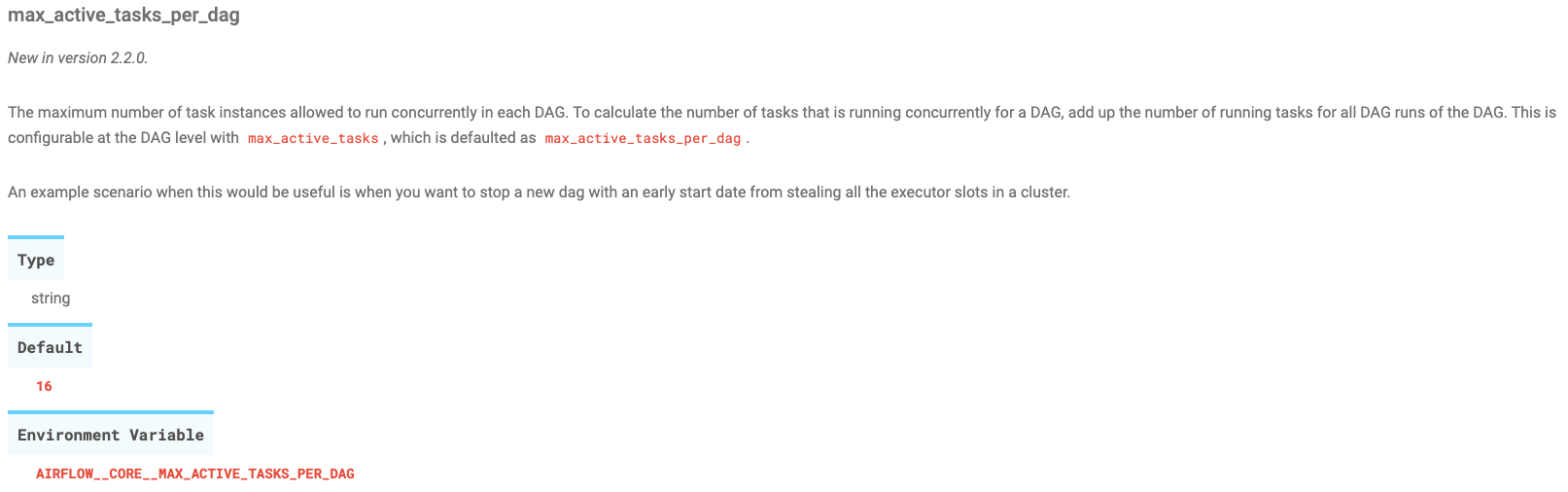

- max_active_tasks_per_dag

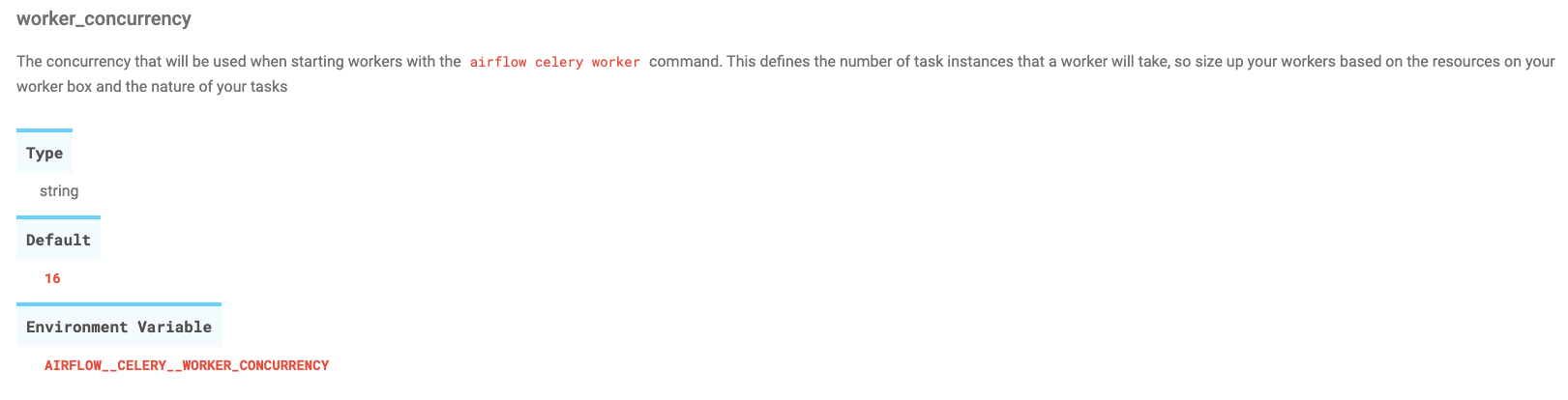

- worker_concurrency

- Pool size

These parameters control the number of tasks that can be run at a time.
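Besides `airflow.cfg`, these options can be set through environment variables named `AIRFLOW__{SECTION}__{KEY}`. The sketch below builds those names for the parameters listed above; the helper `airflow_env_var` and the values are hypothetical placeholders, and pool size is left out because it is set per pool (in the UI or CLI) rather than in the configuration file.

```python
def airflow_env_var(section, key):
    """Build the environment variable name that overrides an Airflow option."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


# (section, option, illustrative value); the values are placeholders only.
SETTINGS = [
    ("core", "parallelism", 64),
    ("core", "max_active_runs_per_dag", 8),
    ("core", "max_active_tasks_per_dag", 32),
    ("celery", "worker_concurrency", 16),
]

env = {airflow_env_var(s, k): str(v) for s, k, v in SETTINGS}
for name, value in env.items():
    print(f"{name}={value}")
```

Environment variables are often the more convenient route in containerized deployments, since the same image can run with different limits per environment.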

8. Sizing Pools

- Pools are another way to limit the number of tasks that can run at once

- Can be used to prevent heavy DAGs with many tasks from hogging executor slots

- Limits the number of running and queued tasks (active tasks that are under the control of the executor)

- Groups tasks together to limit the active instances by group
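The effect of a pool can be illustrated with a toy model. This sketch assumes a first-come, first-served admission rule, which is a simplification and not Airflow's actual scheduling logic; the function `admit_to_pool` and the task names are hypothetical. The `pool_slots` value mirrors the task-level parameter of the same name.

```python
def admit_to_pool(pool_size, tasks):
    """Split tasks into admitted and waiting for a pool with `pool_size` slots.

    `tasks` is a list of (task_id, pool_slots) pairs in arrival order.
    Toy first-come, first-served model, not Airflow's scheduler.
    """
    free = pool_size
    admitted, waiting = [], []
    for task_id, pool_slots in tasks:
        if pool_slots <= free:
            admitted.append(task_id)
            free -= pool_slots
        else:
            waiting.append(task_id)
    return admitted, waiting


# Hypothetical tasks sharing one pool of three slots.
tasks = [("load_a", 1), ("load_b", 1), ("heavy_join", 2), ("load_c", 1)]
admitted, waiting = admit_to_pool(pool_size=3, tasks=tasks)
print("admitted:", admitted)
print("waiting:", waiting)
```

Giving a heavy task more than one slot is one way to keep a group of expensive tasks from crowding out everything else in the pool.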

High Availability Airflow Components

Other Airflow Components can Scale!

Scheduler

- Airflow allows for multiple schedulers

- Increases the number of tasks that can be scheduled

- Gives the scheduling platform more stability

Web Server

- Multiple web servers increase the load capacity of the web UI

Logging on Distributed Airflow

9. Traits of a Great Logging System

- Aggregated

- Historical

- Indexed

- Searchable

10. Importance of Good Logging Practices

- 85% of problems have clues for a solution in the scheduler logs

- With multiple schedulers, it is important to collect all of their logs in one place, since the schedulers work together on the same workload

- Keep a history of the logs in a searchable format so you can diagnose problems and work out solutions

- The first step in debugging is to match the timestamps of problems in task logs against scheduler logs from the same time
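Timestamp correlation is easy to sketch once logs are aggregated. The example below assumes each log line starts with a `YYYY-MM-DD HH:MM:SS` timestamp; the log lines, the function names, and the 30-second window are all hypothetical, and real Airflow log formats differ.

```python
from datetime import datetime, timedelta


def parse(line):
    """Split 'YYYY-MM-DD HH:MM:SS message' into (timestamp, message)."""
    stamp, message = line[:19], line[20:]
    return datetime.strptime(stamp, "%Y-%m-%d %H:%M:%S"), message


def scheduler_lines_near(task_line, scheduler_lines, window_seconds=30):
    """Return scheduler log lines within the window around a task log line."""
    task_time, _ = parse(task_line)
    window = timedelta(seconds=window_seconds)
    return [
        line for line in scheduler_lines
        if abs(parse(line)[0] - task_time) <= window
    ]


# Made-up log lines for illustration only.
scheduler_log = [
    "2024-01-01 10:00:05 scheduler heartbeat",
    "2024-01-01 10:02:10 executor reports task failed",
    "2024-01-01 10:07:00 scheduler heartbeat",
]
task_error = "2024-01-01 10:02:00 task exited with an error"

matches = scheduler_lines_near(task_error, scheduler_log)
for line in matches:
    print(line)
```

In practice an indexed, searchable log store does this filtering for you; the point is that it only works when task and scheduler logs live in the same place with comparable timestamps.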

11. Debugging Distributed Airflow

- 90% of problems have clues for a solution in the scheduler logs

- 8% of problems are resource consumption issues

- Out of memory

- Limited CPU cycles for tasks

- The other 2% is the hard part

How to Scale your Deployment - Demo
