Note: There is a newer version of this webinar available: Scheduling in Airflow: A Comprehensive Introduction.

1. Scheduling basics before and after the Airflow 2.2 release

Now that Airflow 2.2 is out, there is a new scheduling feature that is a real game changer.

a. Before:

Most important concepts:

- start_date ⮕ Date at which tasks start being scheduled

  - Must be defined within your DAG

  - Always specify a static, non-dynamic start date

- schedule_interval ⮕ Interval of time from the min(start_date) at which the DAG is triggered

  - Can be monthly, daily, weekly: it defines the frequency

  - Can be defined with cron expressions or timedelta objects

- end_date ⮕ Date at which your DAG stops being scheduled

The DAG [X] starts being scheduled from the start_date and will be triggered after every schedule_interval.

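Important to remember! The DAG gets triggered at start_date PLUS schedule_interval, that is, once the first interval has ended. The timestamp attached to that run used to be called execution_date, and it marks the start of the interval, not the moment the DAG actually runs. Confusing!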

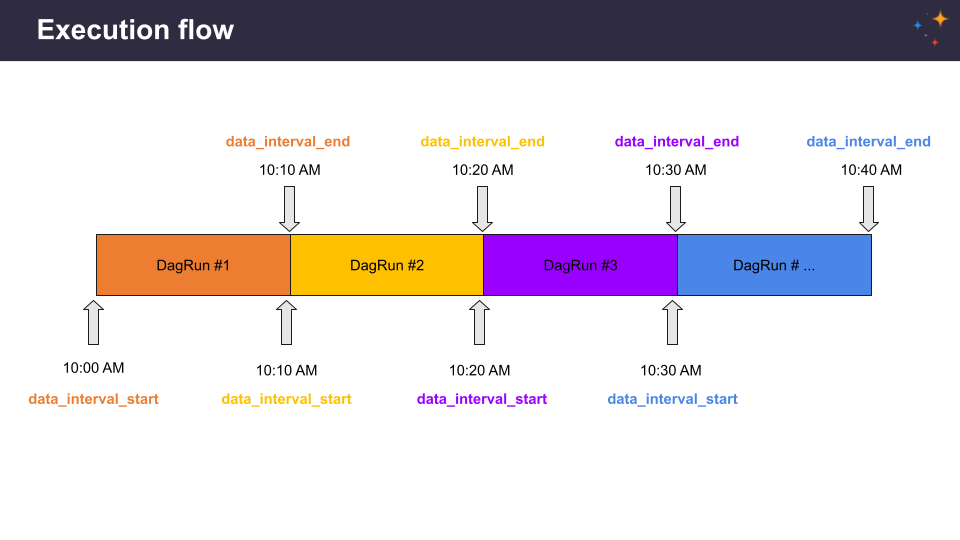

Execution flow assuming a start_date at 10:00 AM and a schedule interval every 10 mins:
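As a minimal sketch of that flow in plain Python (no Airflow involved), the snippet below lists the first few runs for a start_date of 10:00 AM and a 10-minute interval. The helper name `legacy_runs` and the calendar date are assumptions made for illustration only.

```python
from datetime import datetime, timedelta


def legacy_runs(start_date, schedule_interval, count):
    """Return (execution_date, triggered_at) pairs for the first `count` runs."""
    runs = []
    for i in range(count):
        # execution_date marks the START of the interval the run covers
        execution_date = start_date + i * schedule_interval
        # the run is only triggered once that interval has ended
        triggered_at = execution_date + schedule_interval
        runs.append((execution_date, triggered_at))
    return runs


start = datetime(2021, 1, 1, 10, 0)  # hypothetical date, 10:00 AM
runs = legacy_runs(start, timedelta(minutes=10), 3)
for execution_date, triggered_at in runs:
    print(f"execution_date={execution_date:%H:%M}  triggered at {triggered_at:%H:%M}")
```

The first run carries an execution_date of 10:00 but is triggered at 10:10, which is exactly the offset that tends to confuse people.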

b. After: New concepts!

data_interval_start = logical_date = execution_date

- data_interval_start ⮕ Start date of the data interval (same value as the old execution_date)

- data_interval_end ⮕ End date of the data interval

- logical_date ⮕ New name of the old execution_date

Execution flow:
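A rough sketch of how the new names relate to each other, again in plain Python. `RunDates` and `run_dates` are hypothetical helpers written for this illustration; they are not Airflow classes, and the schedule (10:00 AM start, 10-minute interval) is assumed.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RunDates:
    """Illustrative container for the dates attached to one DAG run."""
    data_interval_start: datetime
    data_interval_end: datetime

    @property
    def logical_date(self):
        # New name of the old execution_date; equals data_interval_start
        return self.data_interval_start


def run_dates(start_date, schedule_interval, run_index):
    """Dates for the run with the given index (0 = first run)."""
    begin = start_date + run_index * schedule_interval
    return RunDates(begin, begin + schedule_interval)


first = run_dates(datetime(2021, 1, 1, 10, 0), timedelta(minutes=10), 0)
print(first.data_interval_start, first.data_interval_end, first.logical_date)
```

For a regular schedule like this one, the run is triggered once data_interval_end has passed, so the first run covers 10:00 to 10:10.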

2. Off-topic definitions

| Variable | Description |
| --- | --- |
| {{ data_interval_start }} | Start of the data interval (pendulum.Pendulum) |
| {{ data_interval_end }} | End of the data interval (pendulum.Pendulum) |
| {{ ds }} | Start of the data interval as YYYY-MM-DD. Same as {{ data_interval_start \| ds }} |
| {{ prev_data_interval_start_success }} | Start of the data interval from prior successful DAG run (pendulum.Pendulum or None). |
| {{ prev_data_interval_end_success }} | End of the data interval from prior successful DAG run (pendulum.Pendulum or None). |
| {{ prev_start_date_success }} | Start date from prior successful DAG run (if available) (pendulum.Pendulum or None). |

IMPORTANT! By default, all dates are converted to UTC. Stick with UTC and don’t mix time zones, or you will only have a bad time :)

3. Do you know the difference between:

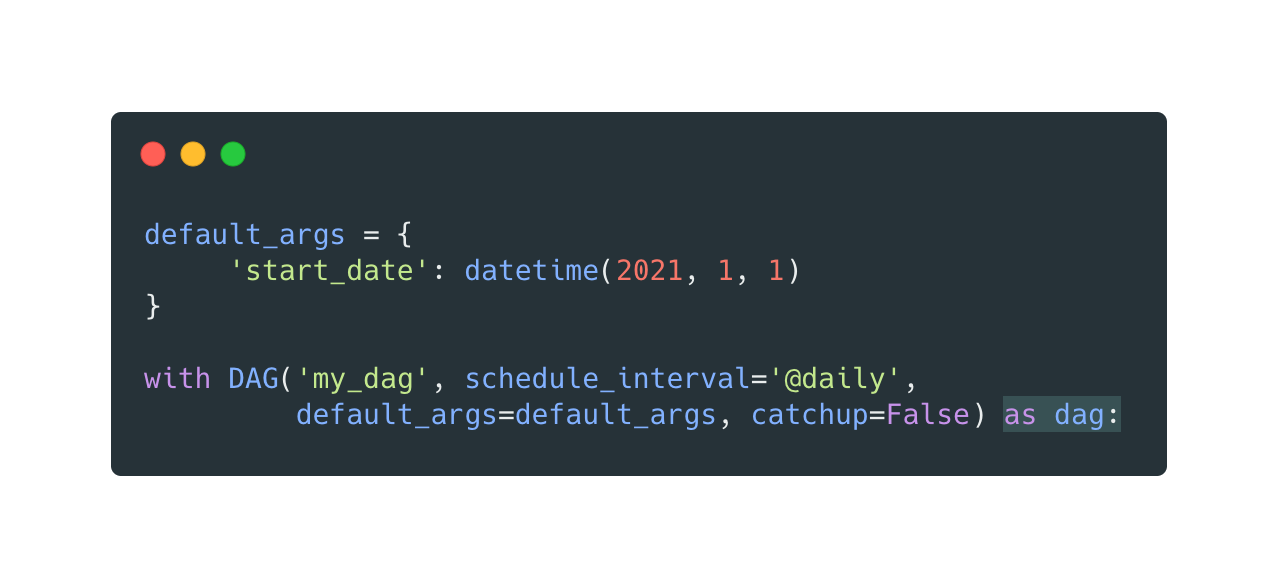

Defining the start date in default args and…

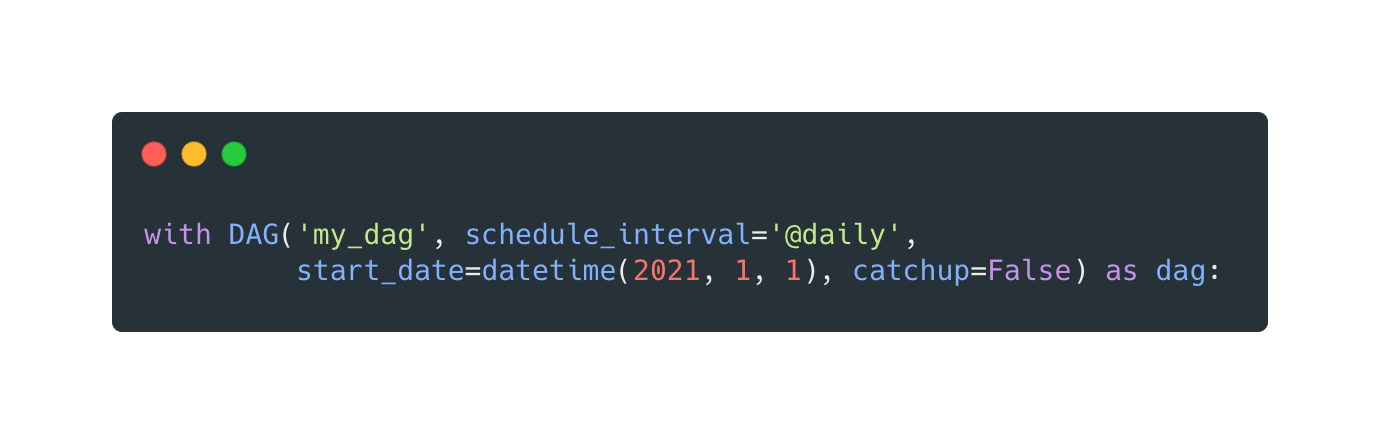

…defining the start date in the DAG object?

Have a look!

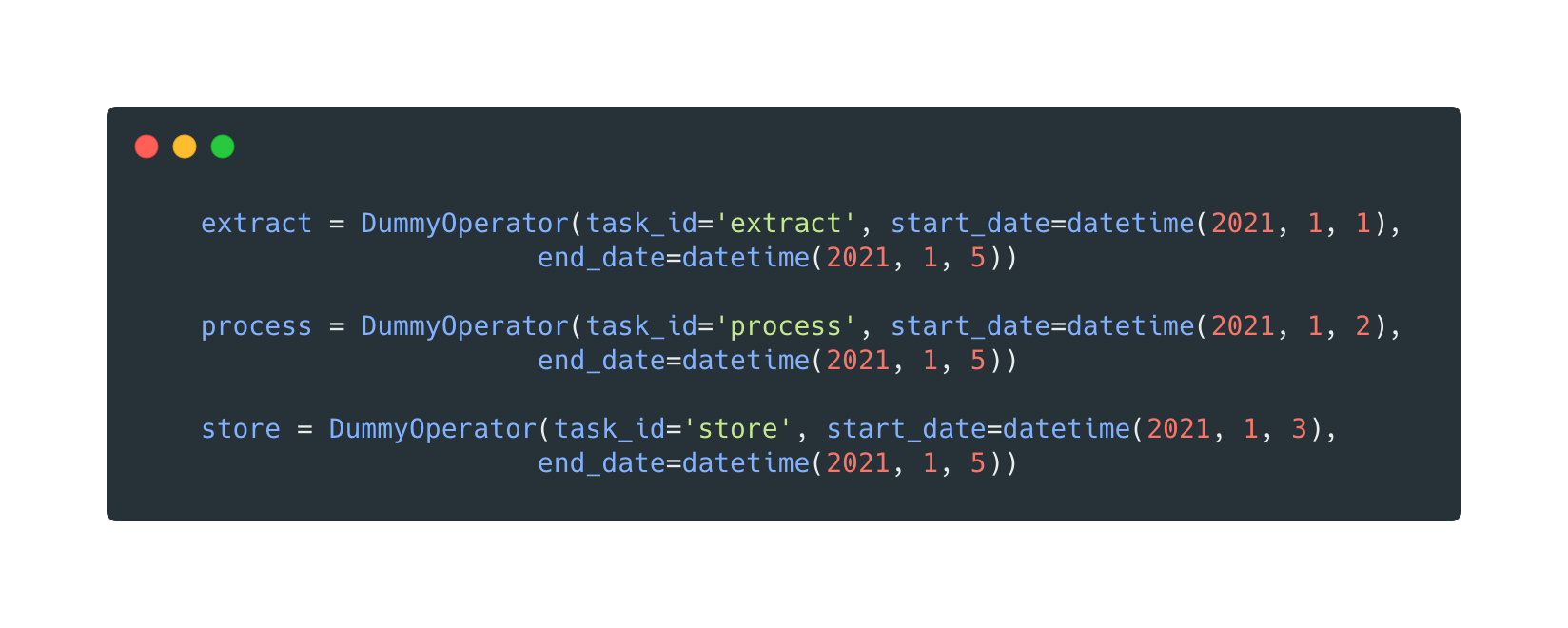

This is possible - each task has a different start date:

What will be the date of the first DAG Run?

The first DagRun to be created will be based on the min(start_date) across all your tasks: in this case, the first task's start date.

Takeaway: Always define the start date within your DAG! There is no point in using start_date in default_args. Set start_date at the task level only if you want a different start date for that particular task.

If start_date is specified at both the DAG level and the task level, the later of the two (the max) is used.
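The two rules above (min across tasks for the first DagRun, max between DAG and task) can be sketched in plain Python. The function names and dates are hypothetical and only mirror the rules as stated here; this is not Airflow's own code.

```python
from datetime import datetime


def first_run_basis(task_start_dates):
    """Date the first DagRun is based on: the earliest task start_date."""
    return min(task_start_dates)


def effective_task_start(dag_start_date, task_start_date):
    """Start date a task ends up with when DAG and/or task define one."""
    candidates = [d for d in (dag_start_date, task_start_date) if d is not None]
    # When both are set, the later one wins
    return max(candidates) if candidates else None


# Hypothetical dates for two tasks with their own start_date
task_starts = [datetime(2021, 1, 1), datetime(2021, 1, 5)]
basis = first_run_basis(task_starts)

# Hypothetical DAG-level start_date that is later than the task-level one
resolved = effective_task_start(datetime(2021, 1, 3), datetime(2021, 1, 1))
print(basis, resolved)
```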

4. What is the difference between a cron expression and a timedelta object?

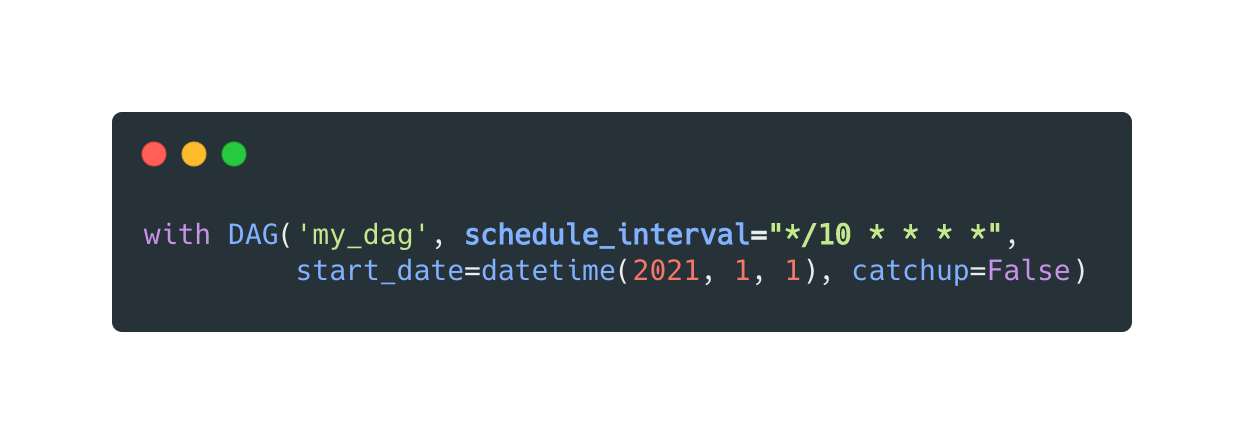

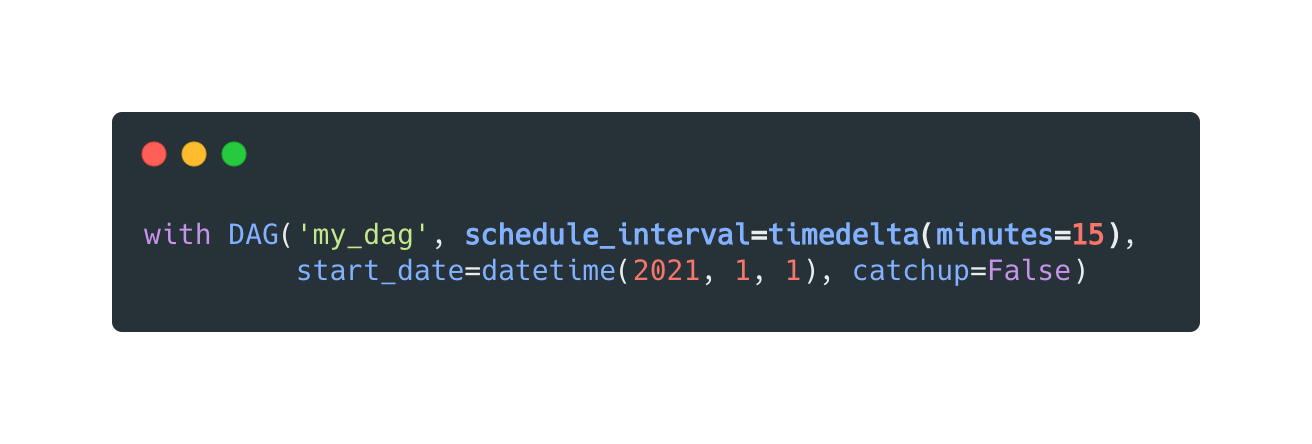

Schedule interval can be defined by:

A Cron expression or…

…a Timedelta object:

What is the difference?

Cron every three days (stateless):

Timedelta every three days (stateful):

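A timedelta always keeps the same interval between runs, because each run is computed from the previous one. A cron expression is evaluated against the calendar, so the gap between runs can change.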
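To make the stateless versus stateful difference concrete, here is a small plain-Python sketch. It assumes the cron expression is something like `0 0 */3 * *`, where the day-of-month step `*/3` matches days 1, 4, 7, ... and restarts on the 1st of every month. The function names and dates are made up for illustration.

```python
from datetime import datetime, timedelta


def cron_every_third_day(after, count):
    """Next `count` midnights matching day-of-month */3 (days 1, 4, 7, ...)."""
    runs = []
    day = datetime(after.year, after.month, after.day) + timedelta(days=1)
    while len(runs) < count:
        # Stateless: only the calendar day decides, not the previous run
        if (day.day - 1) % 3 == 0:
            runs.append(day)
        day += timedelta(days=1)
    return runs


def timedelta_every_third_day(start, count):
    """Next `count` runs, each exactly three days after the previous one."""
    return [start + i * timedelta(days=3) for i in range(1, count + 1)]


start = datetime(2021, 1, 25)  # hypothetical reference date
cron_runs = cron_every_third_day(start, 4)
delta_runs = timedelta_every_third_day(start, 4)

print([f"{d:%b %d}" for d in cron_runs])
print([f"{d:%b %d}" for d in delta_runs])
```

The cron-style series goes Jan 28, Jan 31, Feb 01, Feb 04, with only one day between Jan 31 and Feb 01, while the timedelta series stays three days apart: Jan 28, Jan 31, Feb 03, Feb 06.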

5. Daylight Saving Time

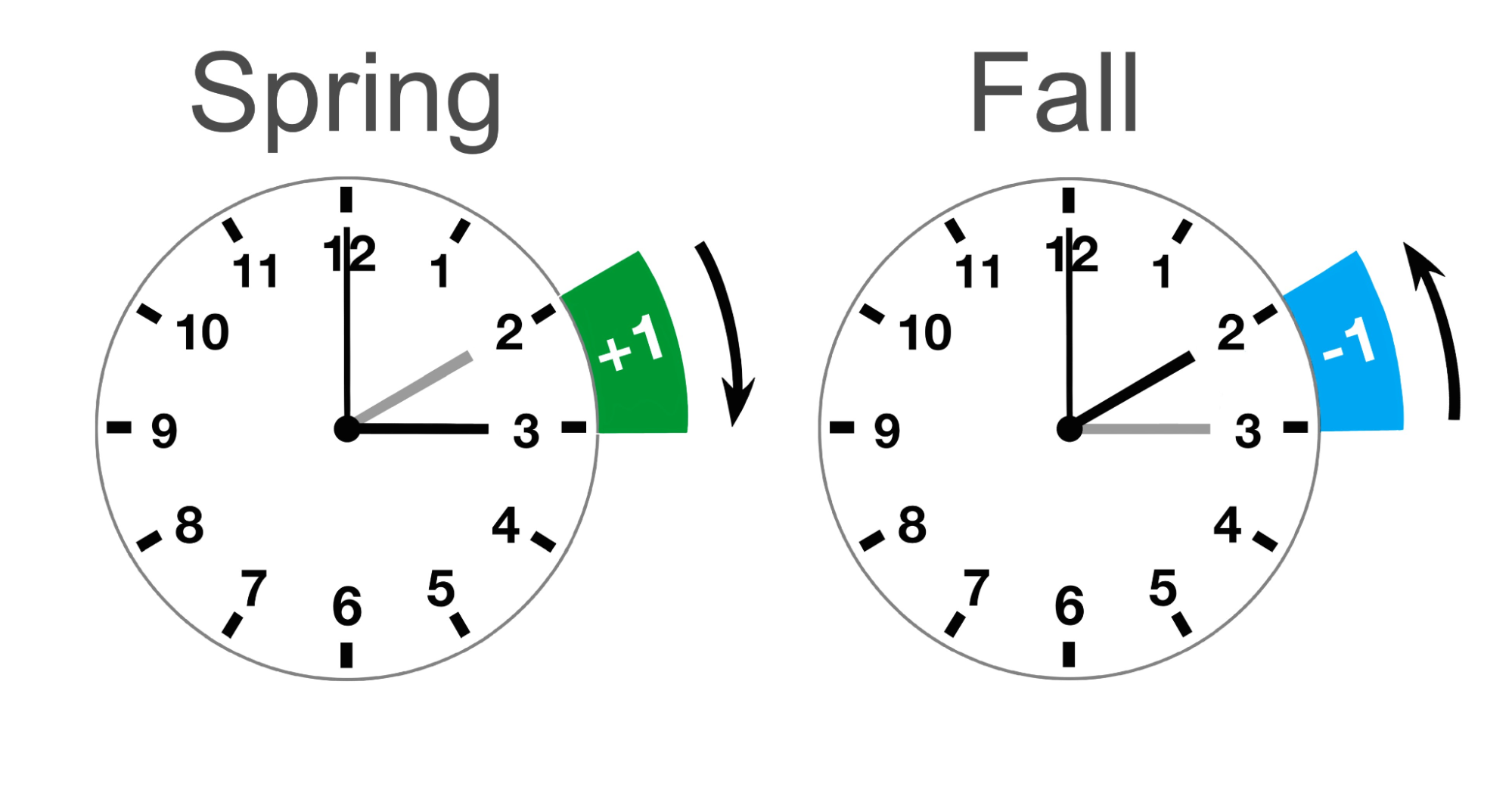

The main reason to be careful with local time zones is that many countries use Daylight Saving Time (DST), where clocks are moved forward in spring and backward in autumn.

Time zone aware DAGs that use cron schedules respect daylight saving time.

Time zone aware DAGs that use timedelta or relativedelta schedules respect daylight saving time for the start date but do not adjust for daylight saving time when scheduling subsequent runs.

If you set a local time zone: cron respects DST. Timedelta doesn’t.
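The effect can be illustrated without Airflow, using fixed-offset time zones from the standard library. This sketch assumes a Central European schedule around 31 October 2021, when clocks moved back one hour at 01:00 UTC. The helpers `to_local` and `nine_local` are hypothetical and hard-code that single transition.

```python
from datetime import datetime, timedelta, timezone

UTC = timezone.utc
CEST = timezone(timedelta(hours=2), "CEST")  # summer time
CET = timezone(timedelta(hours=1), "CET")    # winter time
# Central European clocks moved back one hour at 01:00 UTC on 31 October 2021
DST_END_UTC = datetime(2021, 10, 31, 1, 0, tzinfo=UTC)


def to_local(moment):
    """Convert an aware datetime to Central European wall-clock time."""
    return moment.astimezone(CEST if moment < DST_END_UTC else CET)


def nine_local(day):
    """UTC instant of 09:00 local time on `day` (cron-like behaviour)."""
    for tz in (CEST, CET):
        candidate = datetime(day.year, day.month, day.day, 9, 0, tzinfo=tz)
        # Keep the candidate whose offset is the one actually in force then
        if to_local(candidate).utcoffset() == tz.utcoffset(None):
            return candidate.astimezone(UTC)


first_run = datetime(2021, 10, 30, 9, 0, tzinfo=CEST)

# Cron-like: same wall-clock time on the next calendar day
cron_second = nine_local(first_run.date() + timedelta(days=1))
# Timedelta-like: exactly 24 hours after the previous run
delta_second = first_run.astimezone(UTC) + timedelta(days=1)

print(to_local(cron_second).strftime("%H:%M %Z"))
print(to_local(delta_second).strftime("%H:%M %Z"))
```

The cron-like run stays at 09:00 local time after the clock change, while the timedelta-like run lands at 08:00 local time because it keeps a fixed 24-hour gap.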

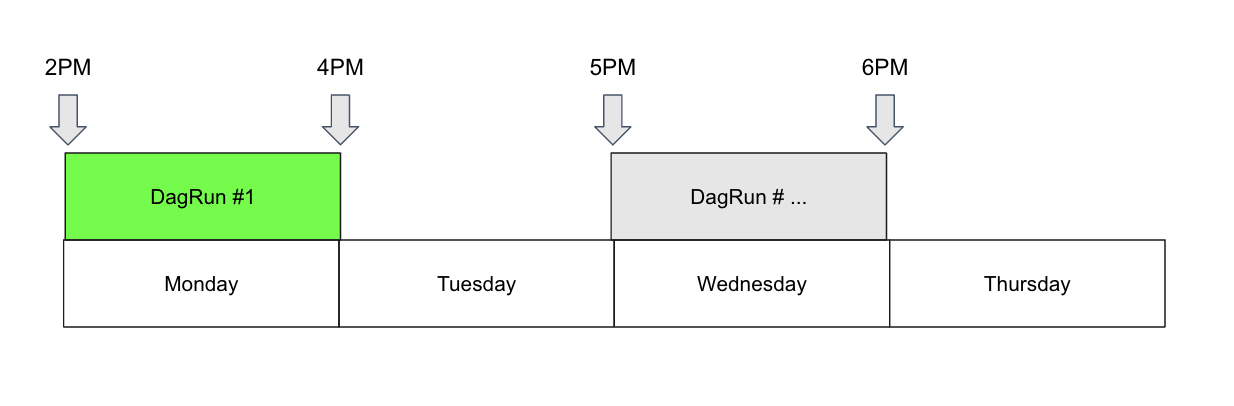

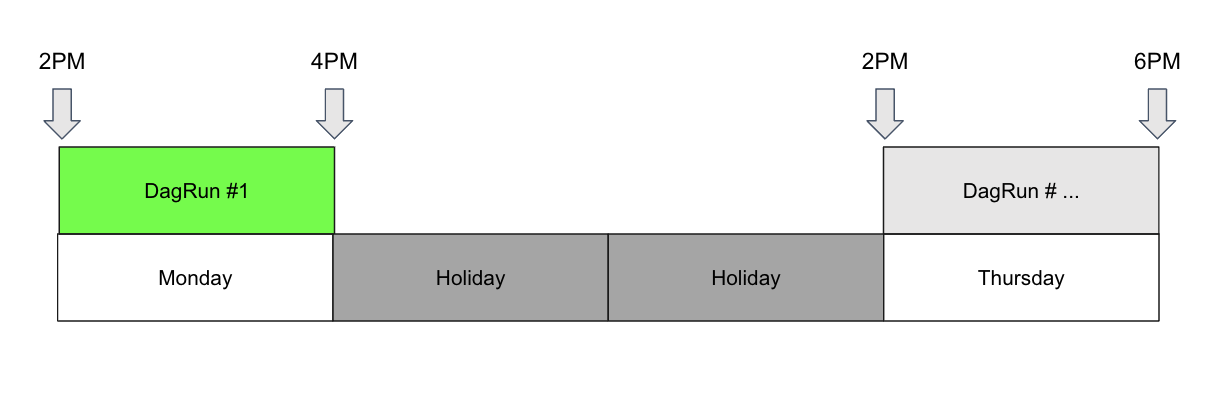

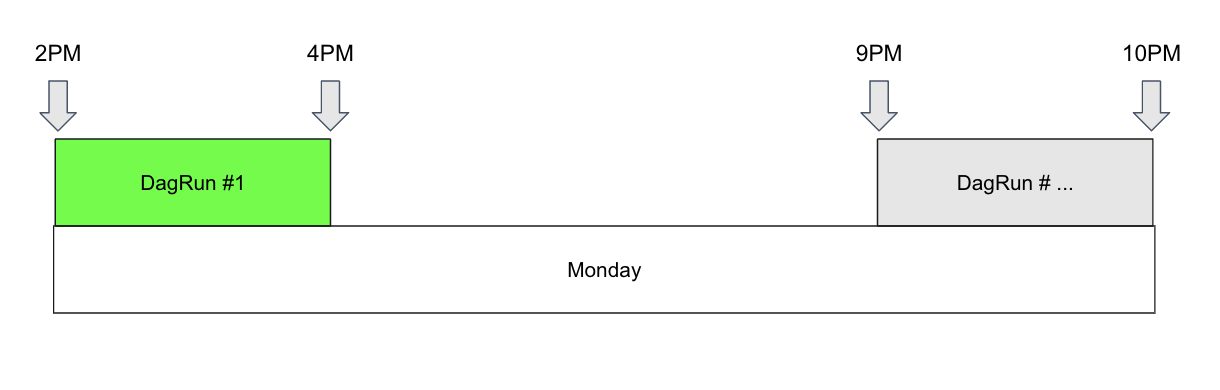

6. What if you wanted to…

Schedule a DAG at different times on different days?

Schedule a DAG daily except for holidays?

Schedule a DAG at multiple times daily with uneven intervals (e.g. 1pm and 4:30pm)?

7. The answer is: The New Timetables!

All the scheduling flexibility and freedom you ever dreamed of.

Timetable steps:

- 1st: Define your constraints

- 2nd: Register your timetable as a plugin

- 3rd: Restart your web server and scheduler to implement modifications

- 4th: Implement your timetable
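As a rough idea of what "define your constraints" can mean for the uneven-intervals case (1pm and 4:30pm daily), the core scheduling logic might look like the plain-Python sketch below. It deliberately does not use Airflow's Timetable interface; `RUN_TIMES` and `next_run` are hypothetical names, and the dates are invented.

```python
from datetime import datetime, time, timedelta

# The two daily run times from the example: 1pm and 4:30pm
RUN_TIMES = [time(13, 0), time(16, 30)]


def next_run(after):
    """Return the first scheduled moment strictly after `after`."""
    day = after.date()
    while True:
        for run_time in RUN_TIMES:
            candidate = datetime.combine(day, run_time)
            if candidate > after:
                return candidate
        # Nothing left today, so try the next calendar day
        day += timedelta(days=1)


morning = next_run(datetime(2021, 1, 1, 12, 0))
afternoon = next_run(morning)
next_day = next_run(afternoon)
print(morning, afternoon, next_day)
```

A real timetable would wrap logic like this in the class that gets registered as a plugin; the code examples linked from the webinar show the actual interface.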

Code examples used at the webinar: Customizing DAG Scheduling with Timetables