Introducing Otto: The Only Data Engineering Agent Built for Airflow

Data engineering is the discipline that every company depends on. It's the work that keeps dashboards current, AI models trustworthy, and customer-facing applications running, and the scope is expanding, not shrinking.

Data teams are still largely the same size they were two years ago, but the demand on them has grown considerably. The pressure shows up everywhere: upgrade debt that keeps accumulating, 2am production failures that eat the next morning, institutional knowledge that walks out the door when a senior engineer moves on.

Today, we're introducing Otto, our data engineering agent, built to take on the operational load so your team can focus on the work that matters.

Your Airflow expert, always available

Data engineers spend too much of their time on work that scales poorly: manually tracing a failure through logs, planning an Airflow upgrade across hundreds of Dags, onboarding a new engineer who doesn't yet know which Snowflake connection to use for prod. Otto is built to take on that work alongside you, and accelerate it.

Otto is the only data engineering agent purpose-built for Airflow, and the first to bring Astronomer's depth of operational Airflow knowledge directly to the engineers doing the work.

It knows your environment, and learns from your team's conventions. Ask it to build a Dag, investigate a failure, or plan your next upgrade, and Otto acts using your actual Dag patterns, run history, and proven upgrade paths.

The longer your team uses it, the more it knows. Your conventions accumulate, corrections stick, and new engineers inherit the context that would otherwise take months to absorb.

Why we built Otto

We've spent years helping enterprise data teams run Airflow at scale. Through that work, we've accumulated knowledge about what breaks, what works, and what it actually takes to keep a production data platform healthy. That knowledge has lived in our support teams, our documentation, and the expertise of the engineers we've worked alongside.

Otto is how we put that knowledge to work for every data engineer, directly in the tools you use every day.

Building it on Astro also means Otto has access to something no standalone agent can replicate: the full operational history of your data platform. Every pipeline execution, failure, and correction is a record of how your data actually moves, and that context is what lets Otto reason about your environment with precision rather than guesswork. It doesn't need you to explain your environment; it already knows it.

Airflow's position as the orchestration layer across your entire data platform means Otto's reach extends to every system you connect to. And because orchestration sits in the execution path of every data operation, Otto doesn't just have access to context; it helps create it.

We built Otto because data engineers deserve an agent that understands their work, not just their syntax.

Accelerate every part of your workflow

Data engineering work doesn't happen in isolated tasks. It spans the full lifecycle: understanding what data exists and how it moves, building pipelines that process it reliably, diagnosing when things break, keeping the platform current, and making sure institutional knowledge outlasts the people who hold it. Otto is built to accelerate every part of that work, not just the authoring step.

Data exploration

Before writing a single line of code, a data engineer needs to understand what they're working with. Otto connects to your data warehouses across 25+ connectors, including Snowflake, Databricks, BigQuery, and Postgres, and lets you query, profile, and trace lineage directly in your agent session. Ask what tables contain customer data, pull revenue trends by product line, or understand what upstream sources feed a given table, without leaving the workflow or switching to another tool.

Dag authoring, testing, and debugging

Ask Otto to build a pipeline and it works within your actual environment: your Airflow version, installed providers, configured connections, and the conventions stored in memory. A new engineer gets your team's decorator patterns, naming conventions, and retry policies from day one, without reading the wiki. When something breaks, Otto reads the Dag code, checks task logs across recent runs, identifies patterns, and proposes fixes. It runs the Dag locally, reads the logs, catches failures, and fixes them before anything reaches your deployment.

Airflow upgrades

Otto evaluates your entire fleet against Astronomer’s proprietary compatibility knowledge base, identifies which Dags need changes and why, and produces a prioritized plan with specific code proposals. A team with 180 Dags on Airflow 2.7 that has been deferring the 3.x upgrade for two quarters can go from "we need to plan this" to "here's the batched execution plan, review and approve." What was a multi-sprint planning problem becomes an agent-assisted execution process.
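To make the idea of checking Dags against a compatibility map concrete, here is a minimal sketch of a static scan. It is not Otto's implementation or Astronomer's knowledge base: the two rules, the function name `find_upgrade_issues`, and the sample Dag are illustrative assumptions, and any real rule set should be verified against the Airflow release notes. The scan only parses source text, so Airflow does not need to be installed.

```python
import ast

# Illustrative rules only (assumptions for this sketch, not a complete list).
DEPRECATED_KWARGS = {"schedule_interval": "schedule"}
DEPRECATED_IMPORTS = {
    "airflow.operators.dummy": "airflow.providers.standard.operators.empty",
}

# Hypothetical Dag source used as input; it is parsed, never executed.
SAMPLE_DAG = '''from airflow import DAG
from airflow.operators.dummy import DummyOperator

with DAG("orders_daily", schedule_interval="@daily") as dag:
    DummyOperator(task_id="start")
'''


def find_upgrade_issues(source: str) -> list[tuple[int, str]]:
    """Return (line number, message) pairs for patterns matching a rule."""
    issues = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ImportFrom) and node.module in DEPRECATED_IMPORTS:
            target = DEPRECATED_IMPORTS[node.module]
            issues.append((node.lineno, f"import from {node.module}; consider {target}"))
        elif isinstance(node, ast.Call):
            for kw in node.keywords:
                if kw.arg in DEPRECATED_KWARGS:
                    target = DEPRECATED_KWARGS[kw.arg]
                    issues.append((node.lineno, f"argument {kw.arg}; consider {target}"))
    return sorted(issues)


for lineno, message in find_upgrade_issues(SAMPLE_DAG):
    print(f"line {lineno}: {message}")
```

Running a scan like this across every Dag file and grouping the results by rule is one simple way to turn "which Dags need changes and why" into a list that can be batched and prioritized.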

Pipeline failure investigation

Otto reads task logs, traces dependency chains, and checks run history against patterns it has seen before. Ask what happened to a Dag that failed overnight and Otto comes back with a root cause, the history of similar failures, and a proposed fix.
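As a rough illustration of checking run history for repeated failure patterns, the sketch below normalizes error lines so that the same failure in different runs compares equal, then counts how many runs each pattern appears in. This is an assumption-laden example, not how Otto works: the log format, the run IDs, and the names `error_signature` and `recurring_errors` are all made up for illustration.

```python
import re
from collections import Counter

# Hypothetical log lines keyed by run ID; real task logs will look different.
SAMPLE_RUNS = {
    "run_1": [
        "2024-05-01T02:00:03 ERROR Connection timed out after 30 seconds",
        "2024-05-01T02:00:04 INFO retrying",
    ],
    "run_2": ["2024-05-02T02:00:07 ERROR Connection timed out after 45 seconds"],
    "run_3": ["2024-05-03T02:00:01 ERROR Table orders not found"],
}


def error_signature(log_line: str) -> str:
    """Replace timestamps and numbers so equivalent errors share one signature."""
    line = re.sub(r"\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}\S*", "<ts>", log_line)
    line = re.sub(r"\d+", "<n>", line)
    return line.strip()


def recurring_errors(runs: dict[str, list[str]]) -> list[tuple[str, int]]:
    """Count the number of runs in which each error signature appears."""
    counts = Counter()
    for lines in runs.values():
        # A set ensures each signature is counted at most once per run.
        counts.update({error_signature(l) for l in lines if "ERROR" in l})
    return counts.most_common()


for signature, run_count in recurring_errors(SAMPLE_RUNS):
    print(f"{run_count} run(s): {signature}")
```

With the sample data, the timeout error surfaces first because it occurs in two of the three runs, which is the kind of "history of similar failures" signal described above.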

What's available today is the foundation, with more capabilities shipping continuously.

Coming soon: migration support

Migration projects stall because the workflow-to-workflow translation is slow, manual, and requires deep expertise on both sides. Whether you're consolidating across Airflow environments or moving workloads from schedulers like Control-M or Autosys onto Airflow, the challenge is the same: translating scheduling logic, reproducing dependency semantics, and applying best practices across a fleet of workflows, without breaking anything that's already running.

Otto is designed to accelerate this work, and migration support is coming soon. If your team has an active or planned migration project, we'd love to talk about how Otto can help.

Three layers of context no other agent has

What makes all of this possible is context, and Otto has three layers of it that no other agent can replicate:

Open source knowledge

Airflow documentation, provider docs, community best practices, and the skills from Astronomer's open source agents repo. This is the foundation we've contributed to the entire Airflow community, available to any agent or any team.

Astronomer's proprietary knowledge

Our years of running Airflow at enterprise scale have produced knowledge that doesn't exist in any public documentation: a living compatibility knowledge base covering version matrices, deprecation maps, provider interactions, and upgrade paths. When Otto takes on a task, it reasons from this base.

Your team's context (Otto Memory)

Every correction an engineer makes, every convention that gets codified, and every environmental detail discovered during an investigation gets stored and carried forward. The @task decorator pattern your team prefers, the Snowflake connection ID for production, your retry policy: Otto learns these, stores them as memories, and applies them automatically in every future session. Corrections stick, new engineers get your conventions from day one, and institutional knowledge becomes a durable asset that scales as your team grows.

Get started with Otto

Otto is now available in Labs for all Astro customers and is free to explore, with usage limits in place.

Otto meets you where you work. You can access Otto today in the Astro CLI or directly in Astro:

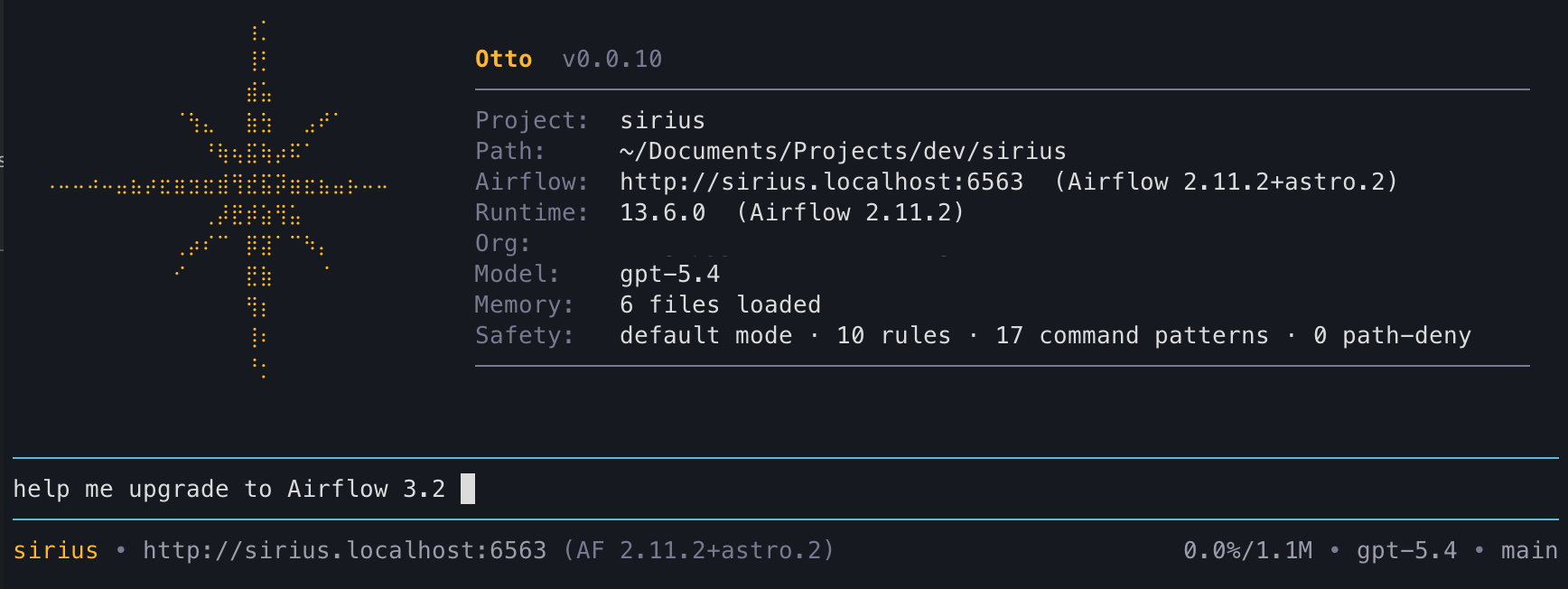

Astro CLI: Run astro otto in your terminal and the CLI handles authentication with your Astro credentials automatically. Otto comes pre-configured with skills, tool access, and proprietary context bundled so you can get started without any setup burden. You can also prompt Otto to start a local Airflow environment in standalone mode, no container runtime required, so Otto can query Dag runs, task logs, and connections on a live instance and automatically validate Dags after each edit.

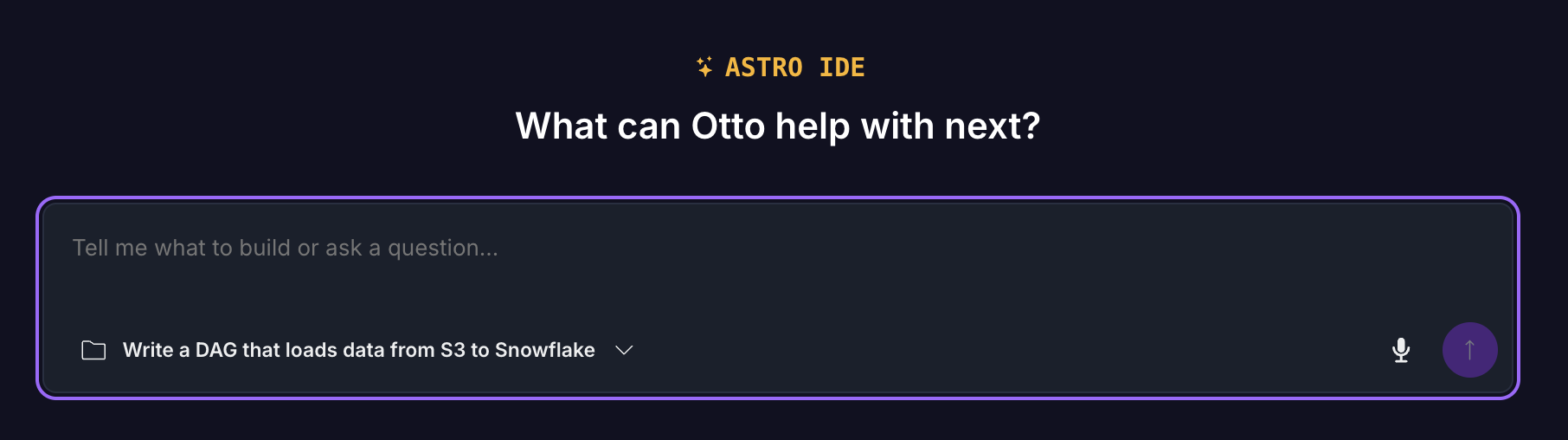

Astro: Access Otto directly in the Astro IDE for code exploration, Dag authoring and debugging, and upgrade planning, without leaving your browser.

Otto supports models from OpenAI, Anthropic, and Google, and you can choose or switch mid-session, so it works with whatever your team or your company already uses.

We'll be bringing Otto to more places soon, including a desktop app for local development and MCP support so you can use it directly inside Claude Code, Cursor, and other tools your team already relies on.

The road ahead

What ships today is the foundation. The direction is autonomous data engineering: agents that proactively diagnose failures before your team is paged, multi-step upgrade sequences that run across days, and infrastructure that maintains itself. Every team that uses Otto today is building the context layer that makes that possible.

Ready to try it yourself? Get started with astro otto in the Astro CLI or access it directly in Astro with the Astro IDE. See more details on how to get started here.

Want to talk through what Otto can do for your team? Reach out to us.
