Airflow in Action: Red Hat's Blueprint for Trusted AI Agents with Astro


At Airflow Summit, Shoubhik Bose, Senior Principal Software Engineer at Red Hat, walked through how the company modernized its internal data and AI platform using Apache Airflow® running on Astro, the unified orchestration platform for building, running, and observing pipelines. This session covers the architectural decisions behind that transformation and how Airflow with Astro now powers AI agents at scale across Red Hat's business.

20 years of complexity. One platform to fix it.

Red Hat is not a data company. It ships software products and subscriptions. But after two decades of growth, including the shift to becoming the control plane for the world's hybrid cloud environments, the company realized its internal data infrastructure had not kept pace. Internal surveys surfaced the same pain points repeatedly: duplicate efforts across teams, inconsistent standards for publishing and consuming data, insufficient infrastructure, and a data culture that had not matured alongside the business.

The goal was clear. Every morning, before business executives arrived at the office, sales forecasts, ACV figures, renewal data, and quarterly pipeline numbers needed to be ready and queryable, not just in dashboards, but through natural language interfaces. Getting there required rebuilding the foundation.

Build it like open source. Run it like a product.

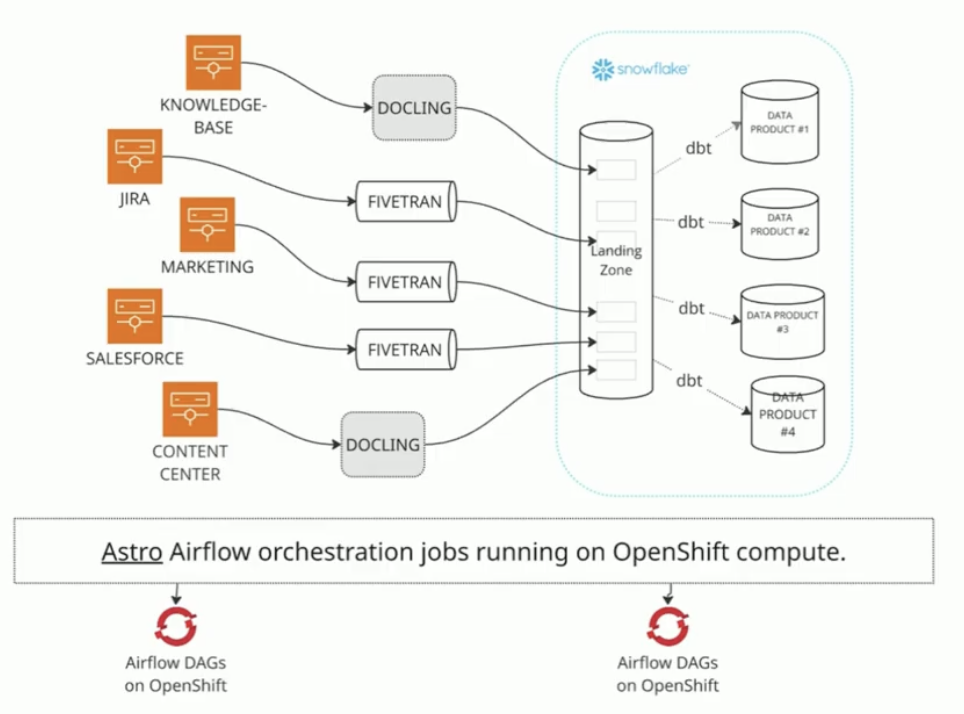

Red Hat treated its data platform as an internal open-source project, applying the same principles it uses for its software business. The team named the platform Dataverse and designed it around a data mesh architecture, defining data products as either source-aligned or aggregate, with versioning, UAT, assigned owners, and backward compatibility requirements baked in.
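As a rough illustration of what "baked in" could mean in practice, the sketch below models a data product contract and a publish gate in plain Python. It is not Dataverse's actual schema: the class names, fields, and the `can_publish` rule are all hypothetical, chosen only to mirror the attributes named above (product type, version, owner, UAT, backward compatibility).

```python
from dataclasses import dataclass
from enum import Enum


class ProductKind(Enum):
    SOURCE_ALIGNED = "source-aligned"
    AGGREGATE = "aggregate"


@dataclass
class DataProduct:
    # All field names are illustrative assumptions, not Dataverse's real schema.
    name: str
    kind: ProductKind
    version: str
    owner: str
    uat_signed_off: bool
    columns: dict[str, str]  # column name -> declared type


def breaking_changes(old: DataProduct, new: DataProduct) -> list[str]:
    """List changes in `new` that would break existing consumers of `old`."""
    problems = []
    for col, col_type in old.columns.items():
        if col not in new.columns:
            problems.append(f"column removed: {col}")
        elif new.columns[col] != col_type:
            problems.append(f"type changed: {col}")
    return problems


def can_publish(old: DataProduct, new: DataProduct) -> bool:
    """Hypothetical gate: needs an owner, UAT sign-off, and no breaking changes."""
    return bool(new.owner) and new.uat_signed_off and not breaking_changes(old, new)
```

Under this sketch, adding a column to a product is allowed, while removing or retyping an existing column blocks publication until consumers are migrated or a new major version is introduced.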

The stack centers on Snowflake as the data warehouse and dbt for transformation. Fivetran handles data ingestion, and in production the entire data lifecycle is orchestrated by Airflow.

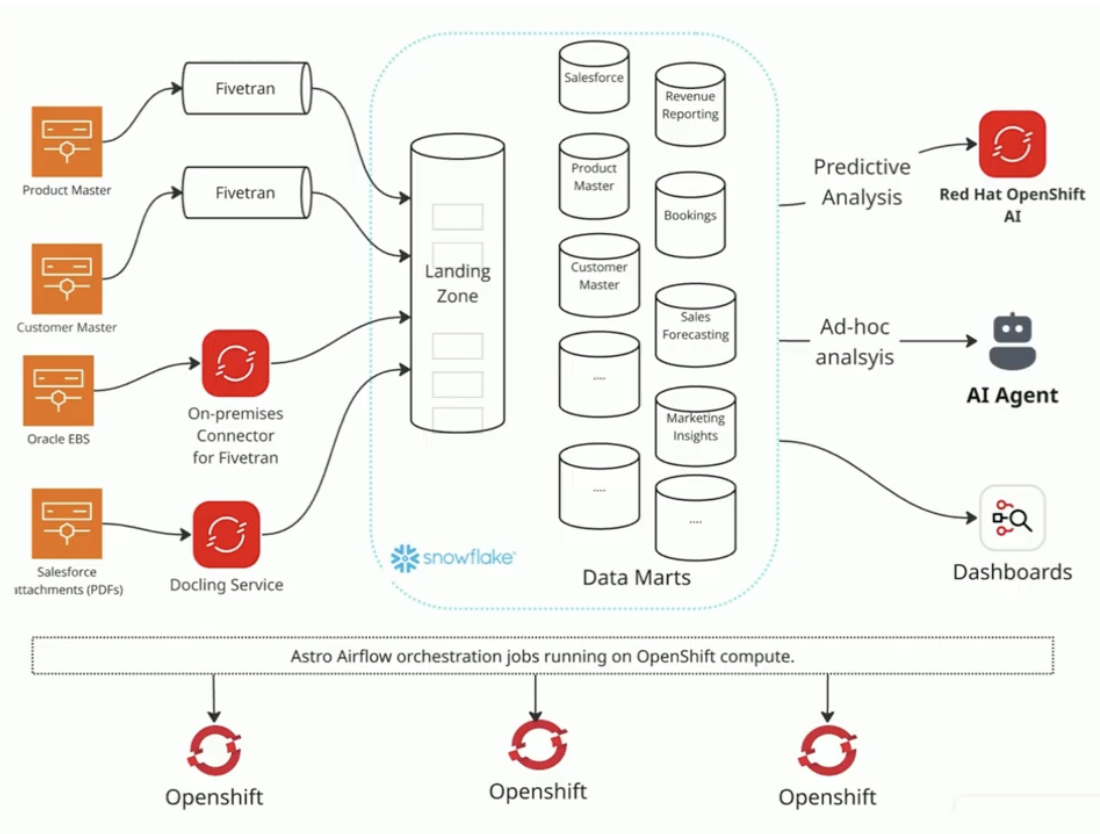

Figure 1: Red Hat’s data and AI platform, orchestrated by Airflow running on Astro, with Astro Observe used to detect issues before they impact downstream systems. Image source.

Red Hat uses Astro, Astronomer's managed Airflow service, and runs its jobs on OpenShift compute. The team started with Astronomer's on-premises software, then migrated to the fully managed Astro SaaS solution. The ability to separate the managed service layer from the compute layer was critical: Red Hat gets Astronomer's Airflow support and operational reliability without surrendering control over where workloads run or compromising its security posture. The platform has been validated for use within regulated industries, meeting enterprise compliance requirements.

As AI use cases emerged, the team extended the same orchestration platform to handle unstructured data. Using Docling, unstructured content such as PDFs and customer presentations is processed, vectorized, and made available in Snowflake as governed data products, ready to power AI agents.

Figure 2: With Red Hat’s AI stack orchestrated by Astro, the team can build AI solutions using curated and compliant structured and unstructured data sets. Image source.

Just the beginning: Two agentic use cases

Dataverse currently supports close to 95 active data products, with two AI agents deployed in production, and more under active development.

The first is a business analytics agent that gives executives and sales teams the ability to query ACV, renewals, and pipeline data in natural language, without writing SQL or waiting on a data analyst. The reliability of that experience depends entirely on complex pipelines running behind the scenes, validating transactions, filtering out inter-company transfers, and ensuring only trusted, governed data reaches the query layer.
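To make the "behind the scenes" work concrete, here is a minimal sketch of the kind of gate such a pipeline might apply before data reaches the query layer. The session does not describe the actual rules, so everything here is assumed for illustration: the `Transaction` fields, the `INTERNAL_ENTITIES` set, and the validity check are hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical identifiers for the company's own legal entities.
INTERNAL_ENTITIES = {"ENTITY-US", "ENTITY-EMEA"}


@dataclass
class Transaction:
    # Illustrative fields only; the real data model is not described in the source.
    txn_id: str
    seller: str
    buyer: str
    acv: Decimal


def is_valid(txn: Transaction) -> bool:
    """Assumed validity rule: the transaction has an ID and a positive ACV."""
    return bool(txn.txn_id) and txn.acv > 0


def is_intercompany(txn: Transaction) -> bool:
    """A transfer between two internal entities is not customer revenue."""
    return txn.seller in INTERNAL_ENTITIES and txn.buyer in INTERNAL_ENTITIES


def trusted_transactions(txns: list[Transaction]) -> list[Transaction]:
    """Keep only valid, external transactions for the query layer."""
    return [t for t in txns if is_valid(t) and not is_intercompany(t)]
```

In a production setup these rules would more likely live in dbt models and tests than in ad hoc Python; the point is only that the agent answers questions from the filtered set, never from raw transactions.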

The second is a Privacy Impact Assessment (PIA) agent. Red Hat deals with regulated industries and a large vendor ecosystem, which means PIA processes are frequent and were previously time-consuming. With the Dataverse foundation in place, PIA workflows are now conversational. Supervisors receive pre-validated data with assigned owners and documented UAT, compressing what used to take weeks into a faster, auditable process. Airflow orchestrates the underlying pipelines that make that data trustworthy before it is accessed by the agent.

The Trust Engine: dbt, Cosmos, and Astro Observe

Three components make the system production-grade and trusted by the business.

- Data Transformation as Code: The roughly 95 data products are maintained declaratively as dbt projects in Git, with assigned maintainers and approvers. This mirrors the open-source contribution model Red Hat uses for its software products.

- Orchestration: Airflow with Astronomer Cosmos handles unified orchestration across the multi-vendor stack. Cosmos provides first-class, native integration between dbt and Airflow, ensuring transformations execute reliably and consistently in production.

- Observability: Astro Observe surfaces pipeline status across management chains without requiring non-technical stakeholders to access deployment workspaces. Executives can see whether quarter-close pipelines are running and data is being generated, without needing edit access or Airflow expertise. Before Astro Observe, there was no clean way to give leadership that visibility.
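For readers unfamiliar with Cosmos, the following is a minimal sketch of how a single dbt project can be exposed as an Airflow DAG against Snowflake. It assumes an Airflow environment with the `astronomer-cosmos` package installed and a dbt project present at the given path. The DAG ID, project path, connection ID, database, and schema are placeholders, not Red Hat's actual configuration.

```python
from datetime import datetime

from cosmos import DbtDag, ProfileConfig, ProjectConfig
from cosmos.profiles import SnowflakeUserPasswordProfileMapping

# Placeholder values: the Airflow connection ID, database, and schema
# are assumptions for illustration only.
profile_config = ProfileConfig(
    profile_name="dataverse",
    target_name="prod",
    profile_mapping=SnowflakeUserPasswordProfileMapping(
        conn_id="snowflake_default",
        profile_args={"database": "ANALYTICS", "schema": "SALES"},
    ),
)

# Cosmos parses the dbt project and renders its models as Airflow tasks,
# preserving the dependencies declared in dbt.
# The project path is hypothetical and must contain a dbt_project.yml.
sales_data_product = DbtDag(
    dag_id="sales_data_product",
    project_config=ProjectConfig("/usr/local/airflow/dbt/sales_data_product"),
    profile_config=profile_config,
    schedule="@daily",
    start_date=datetime(2024, 1, 1),
    catchup=False,
)
```

Because the Snowflake credentials come from an Airflow connection rather than a checked-in `profiles.yml`, the dbt project in Git stays free of secrets, which fits the maintainer-and-approver workflow described in the first bullet.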

All compute runs on Red Hat's existing OpenShift infrastructure, preserving internal security configurations when moving from on-premises to SaaS.

Applying Red Hat’s learnings to data and AI

Airflow's predictability, connectivity, and reliability made it the right choice for a complex, multi-vendor data stack at an enterprise that cannot afford to treat orchestration as a simple script or low-level component. Astro removes the operational burden of running Airflow at scale, freeing the team to focus on data products and business outcomes rather than infrastructure.

The result is a platform that delivers trusted data to executives in near real time, powers production AI agents, and scales to support the next generation of use cases Red Hat is building.

Watch the session, "Applying Airflow to drive the digital workforce in the Enterprise," for the complete architectural breakdown, including how Red Hat structured its data mesh, how Airflow and Astro unify orchestration across the data stack, and the engineering decisions behind the company’s AI agent rollout.

The quickest way to get started with Airflow is to sign up for free on the Astro platform.
