Airflow in Action: Unlocking Airflow 3's Power for Multi-Tenancy at Datadog


Datadog processes over 100 trillion events daily, accumulating data at a scale few companies face. In their "Why Datadog Chose Airflow 3" session at the Airflow Summit, Principal Engineer Julien Le Dem and Senior Software Engineer Zach Gottesman share how Datadog went from Luigi, to building a custom orchestrator, to ultimately adopting Apache Airflow®. They walk through the technical requirements that drove that journey, explain why advanced data-aware scheduling features matter, and demonstrate how Airflow 3 enabled them to build their own multi-tenancy setup.

The Wrong Horse

Datadog adopted Luigi in 2015, just as Airflow was emerging from Airbnb as a newcomer. But as the two projects' open source contribution histories later showed, Luigi plateaued while Airflow's community momentum exploded.

The team faced critical challenges. They needed data lifecycle management, not just task lifecycle management. When data had to be restated and the changes propagated downstream, the task-centric orchestration of Airflow 1 would have demanded careful manual intervention across Datadog's partitioned pipelines. They also needed to decouple task and dataset dependencies from team ownership by adopting data-aware scheduling, without resorting to one giant Dag or to countless tiny Dags stitched together with clunky workarounds.
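To make the data lifecycle problem concrete, here is a minimal sketch in plain Python (not Airflow's API, and not Datadog's code). It assumes a hypothetical lineage map between date-partitioned datasets, where every consumer reads the same-date partition of its inputs. Given one restated partition, it walks the lineage to find every downstream partition that has to be recomputed, which is the bookkeeping a task-centric scheduler leaves to manual intervention.

```python
from collections import deque

# Hypothetical lineage for illustration: dataset -> datasets that consume it.
DOWNSTREAM = {
    "raw_events": ["sessions", "billing_usage"],
    "sessions": ["daily_active_users"],
    "billing_usage": ["invoices"],
    "daily_active_users": [],
    "invoices": [],
}


def partitions_to_recompute(dataset, partition, downstream=DOWNSTREAM):
    """Return the (dataset, partition) pairs invalidated by restating one partition.

    Assumes each consumer reads the same-date partition of its inputs, so a
    restatement propagates along the lineage with the partition key unchanged.
    """
    seen = set()
    affected = []
    queue = deque(downstream.get(dataset, []))
    while queue:  # breadth-first walk over consumers
        child = queue.popleft()
        if child in seen:  # a dataset reachable by two paths is listed once
            continue
        seen.add(child)
        affected.append((child, partition))
        queue.extend(downstream.get(child, []))
    return affected
```

The walk only identifies the affected set. A real orchestrator would also need to order the recomputation topologically and track which partitions are currently stale.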

Building from First Principles (Almost)

In 2021, Datadog evaluated Airflow 2.1 but found that it still lacked data-aware features. The team identified partition-based scheduling as core to their needs, and they already tracked partition updates and data quality in a metadata system. The pull to build their own orchestrator from scratch was strong: they envisioned an event-driven scheduler with lineage and data quality built in from day one.
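The kind of event-driven, partition-based scheduler described above can be sketched in a few lines. This is a hypothetical illustration, not the orchestrator Datadog designed. It assumes an in-memory dict in place of the metadata system and a `consumers` map from each derived dataset to the inputs it reads. Each partition-update event records a quality result, and a consumer partition becomes runnable once all of its inputs have that partition with passing checks.

```python
class PartitionScheduler:
    """Hypothetical event-driven, partition-aware scheduler (plain Python sketch).

    `consumers` maps each derived dataset to the input datasets it reads.
    """

    def __init__(self, consumers):
        self.consumers = consumers
        # Stand-in for a metadata store: (dataset, partition) -> passed quality checks?
        self.quality_ok = {}

    def on_partition_updated(self, dataset, partition, passed_quality):
        """Handle one update event and return the consumer partitions to (re)run."""
        self.quality_ok[(dataset, partition)] = passed_quality
        runnable = []
        for consumer, inputs in self.consumers.items():
            if dataset not in inputs:
                continue  # this event does not affect this consumer
            # Runnable only when every input partition exists and passed quality.
            if all(self.quality_ok.get((ds, partition)) is True for ds in inputs):
                runnable.append((consumer, partition))
        return runnable
```

Because every event is re-evaluated, a restated input partition that passes its checks again re-triggers its consumers. That is the downstream propagation a purely task-centric scheduler does not provide on its own.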

Community Momentum Plus Open Source Contribution

Airflow 3 changed everything. The platform became genuinely data-driven through data-aware scheduling, including conditional asset schedules and the ability to combine time-based and asset-based scheduling in the same Dag, along with a strong vision for even more data awareness in the near future. In addition, Airflow Improvement Proposal 67 (AIP-67) outlined first-class multi-tenancy support. The team recognized they couldn't compete with Airflow's community momentum. Rather than building in isolation, Datadog committed to contributing to the features outlined in AIP-73 to further increase Airflow's data awareness, and to working with the community to integrate partition-based incremental scheduling directly into Airflow.
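The semantics of conditional asset schedules and combined time-or-asset triggers can be modeled in plain Python. In Airflow 3 itself, conditions are written with the same `|` and `&` operators on `Asset` objects (for example `schedule=(asset_a | asset_b) & asset_c`), and `AssetOrTimeSchedule` pairs a timetable with an asset condition. The sketch below does not use those classes. `AssetRef`, `AnyOf`, `AllOf`, and `should_trigger` are hypothetical names, and the set of updated asset names is a simplification of the asset events Airflow tracks between runs.

```python
from __future__ import annotations


class Condition:
    """Composable asset condition: `|` means any of, `&` means all of."""

    def __or__(self, other: Condition) -> Condition:
        return AnyOf(self, other)

    def __and__(self, other: Condition) -> Condition:
        return AllOf(self, other)

    def is_met(self, updated: set[str]) -> bool:
        raise NotImplementedError


class AssetRef(Condition):
    def __init__(self, name: str) -> None:
        self.name = name

    def is_met(self, updated: set[str]) -> bool:
        return self.name in updated


class AnyOf(Condition):
    def __init__(self, *parts: Condition) -> None:
        self.parts = parts

    def is_met(self, updated: set[str]) -> bool:
        return any(part.is_met(updated) for part in self.parts)


class AllOf(Condition):
    def __init__(self, *parts: Condition) -> None:
        self.parts = parts

    def is_met(self, updated: set[str]) -> bool:
        return all(part.is_met(updated) for part in self.parts)


def should_trigger(condition: Condition, updated: set[str], timetable_due: bool) -> bool:
    """Time OR asset: run on a timetable tick, or as soon as the asset condition holds."""
    return timetable_due or condition.is_met(updated)
```

For example, `(AssetRef("raw_events_us") | AssetRef("raw_events_eu")) & AssetRef("price_list")` is satisfied by either regional feed together with the price list, while `timetable_due=True` guarantees a run on schedule even if no data arrived.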

Editor's note: Asset partitions are targeted for release with Airflow 3.2 in spring 2026.

Multi-Tenancy Through Worker Groups

Datadog rolled out Airflow 3 in production shortly after its release and built its own multi-tenant setup using worker groups composed of Dag collections, dedicated worker namespaces, and org identity mappings. The result is homogeneity and predictability on one consistent platform, instead of multiple Airflow environments running different versions of Airflow and Postgres, each with its own customizations adding complexity.

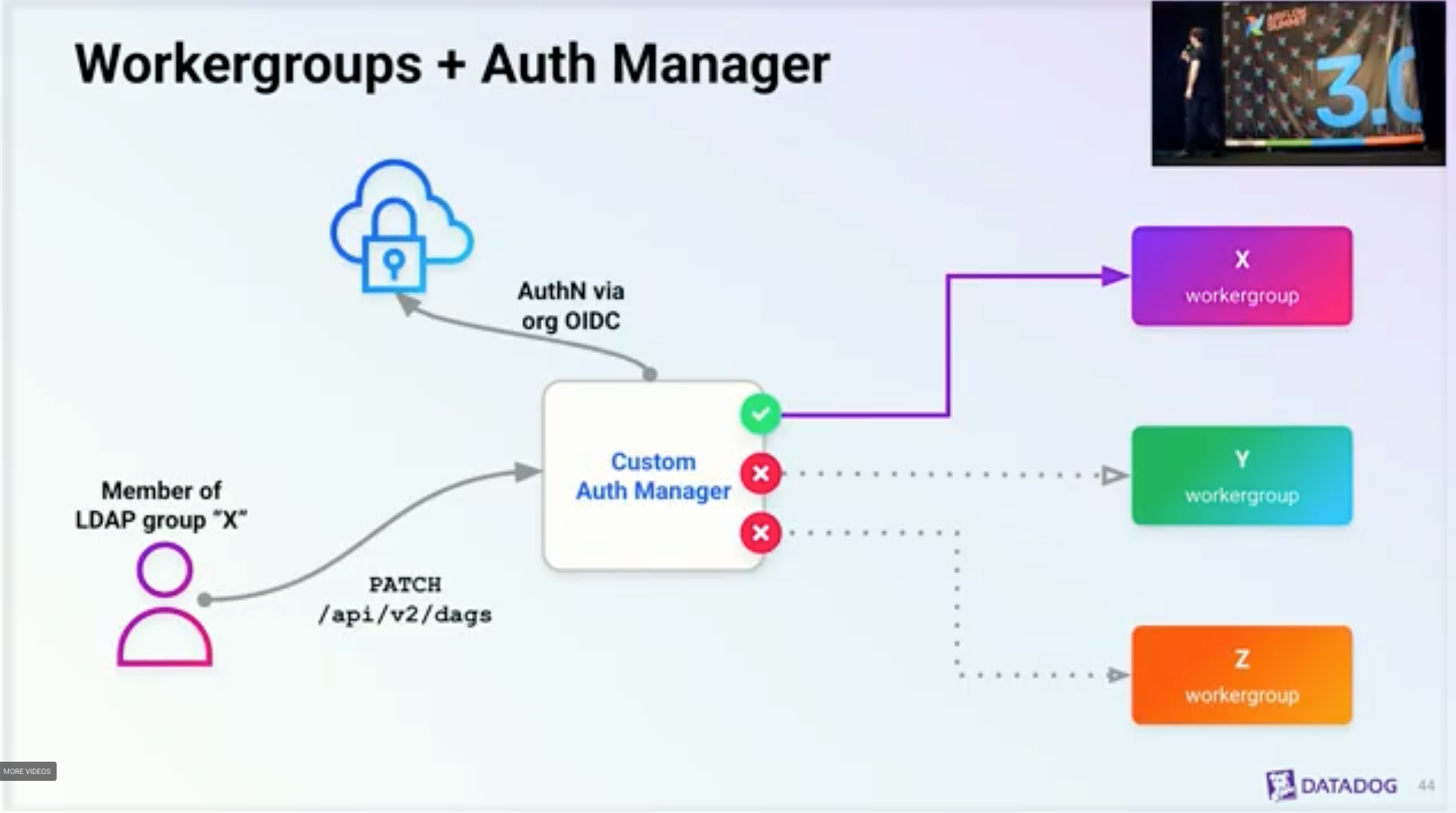

Figure 1: Datadog's multi-tenancy setup, using a custom auth manager that maps team identity in Datadog's identity management system to worker group access. Image source.
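The decision logic behind mapping team identity to worker group access can be sketched in plain Python. A custom Airflow 3 auth manager would host checks like these inside Airflow's auth-manager interface, but this sketch deliberately does not reproduce that interface's method signatures. The team and Dag mappings, the `User` type, `can_access_dag`, and the rule that anyone may read any Dag are all hypothetical assumptions for illustration.

```python
from dataclasses import dataclass

# Hypothetical mappings. In Datadog's setup, team membership comes from their
# identity management system and Dags belong to worker group collections;
# neither source is modeled here.
TEAM_TO_WORKER_GROUP = {"team-billing": "billing", "team-metrics": "metrics"}
DAG_TO_WORKER_GROUP = {"invoice_rollup": "billing", "dau_rollup": "metrics"}

READ_METHODS = {"GET"}  # assumption: any authenticated user may view any Dag


@dataclass(frozen=True)
class User:
    name: str
    teams: frozenset


def worker_groups_for(user: User) -> set:
    """Map a user's teams to the worker groups they belong to."""
    return {TEAM_TO_WORKER_GROUP[t] for t in user.teams if t in TEAM_TO_WORKER_GROUP}


def can_access_dag(user: User, dag_id: str, method: str) -> bool:
    """Allow reads everywhere, but edits only to Dags in the user's own worker groups."""
    if method in READ_METHODS:
        return True
    owner = DAG_TO_WORKER_GROUP.get(dag_id)
    # Unknown Dags are denied rather than defaulting to open access.
    return owner is not None and owner in worker_groups_for(user)
```

Denying edits to Dags with no known owner keeps a misconfigured mapping from silently granting cross-team write access.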

Airflow 3's worker-specific secrets backends proved especially important. They let Datadog isolate credentials between worker groups and separate the core namespace from the worker groups entirely. The team integrated authentication with Datadog's identity management system, then implemented authorization rules so that worker group members can update their own Dags but not those of other groups.
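One way to picture credential isolation between worker groups is a secrets lookup that can only see its own group's namespace. The sketch below is hypothetical and not Datadog's implementation. The method name mirrors the `get_conn_value` hook on Airflow's `BaseSecretsBackend`, but the class does not subclass it. The dict stands in for an external secrets manager, and the `<worker_group>/connections/<conn_id>` key layout is an assumption.

```python
class WorkerGroupSecrets:
    """Hypothetical secrets lookup scoped to a single worker group.

    `store` stands in for an external secrets manager. Keys are assumed to be
    laid out as "<worker_group>/connections/<conn_id>".
    """

    def __init__(self, store: dict, worker_group: str) -> None:
        self.store = store
        self.prefix = f"{worker_group}/connections/"

    def get_conn_value(self, conn_id: str):
        # Reject IDs that could escape the group's prefix in a path-based store.
        if "/" in conn_id or conn_id in {"", ".", ".."}:
            return None
        # Only keys under this worker group's prefix are reachable, so a task
        # in one group cannot resolve another group's credentials.
        return self.store.get(self.prefix + conn_id)
```

Each worker group's workers would be configured with their own scoped backend, so the same connection ID (here `warehouse`) resolves to different credentials per group, and the core namespace never needs access to them.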

Gottesman highlighted cluster policies as essential to enforcing the multi-tenancy setup but noted a critical distinction: Datadog splits policies into buildtime policies for validation and online policies for mutation. The buildtime policy validates that users aren't setting queue parameters, while the online policy infers each task's worker group and assigns its queue automatically.
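The buildtime/online split can be illustrated with a pair of policy functions. In real Airflow, cluster policies are functions such as `task_policy(task)` defined in `airflow_local_settings.py`, and they can raise `AirflowClusterPolicyViolation`. To keep this sketch runnable without Airflow, it uses stand-ins instead: `TaskStub`, `PolicyViolation`, the `dags/<worker_group>/` folder layout, and the `<group>-queue` naming are all hypothetical, not Datadog's actual conventions.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath

DEFAULT_QUEUE = "default"  # Airflow's stock default queue name (configurable)
DAGS_ROOT = PurePosixPath("/opt/airflow/dags")  # hypothetical layout: <root>/<worker_group>/...


class PolicyViolation(Exception):
    """Stand-in for airflow.exceptions.AirflowClusterPolicyViolation."""


@dataclass
class TaskStub:
    """Minimal stand-in for the task attributes these policies touch."""
    task_id: str
    dag_fileloc: str
    queue: str = DEFAULT_QUEUE


def buildtime_policy(task: TaskStub) -> None:
    """Validation only (e.g. run in CI): reject tasks that pick their own queue."""
    if task.queue != DEFAULT_QUEUE:
        raise PolicyViolation(f"{task.task_id}: 'queue' is managed by the platform")


def online_policy(task: TaskStub) -> None:
    """Mutation at parse time: infer the worker group from the Dag's folder and set the queue."""
    try:
        relative = PurePosixPath(task.dag_fileloc).relative_to(DAGS_ROOT)
    except ValueError:
        raise PolicyViolation(f"{task.task_id}: Dag file is outside {DAGS_ROOT}") from None
    if len(relative.parts) < 2:
        raise PolicyViolation(f"{task.task_id}: Dag file is not in a worker group folder")
    task.queue = f"{relative.parts[0]}-queue"  # hypothetical queue naming scheme
```

The split matters because the online policy writes the very attribute the buildtime policy forbids users to set. Running validation against user code before the platform's mutation keeps the two from conflicting.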

Isolating Environments at Scale

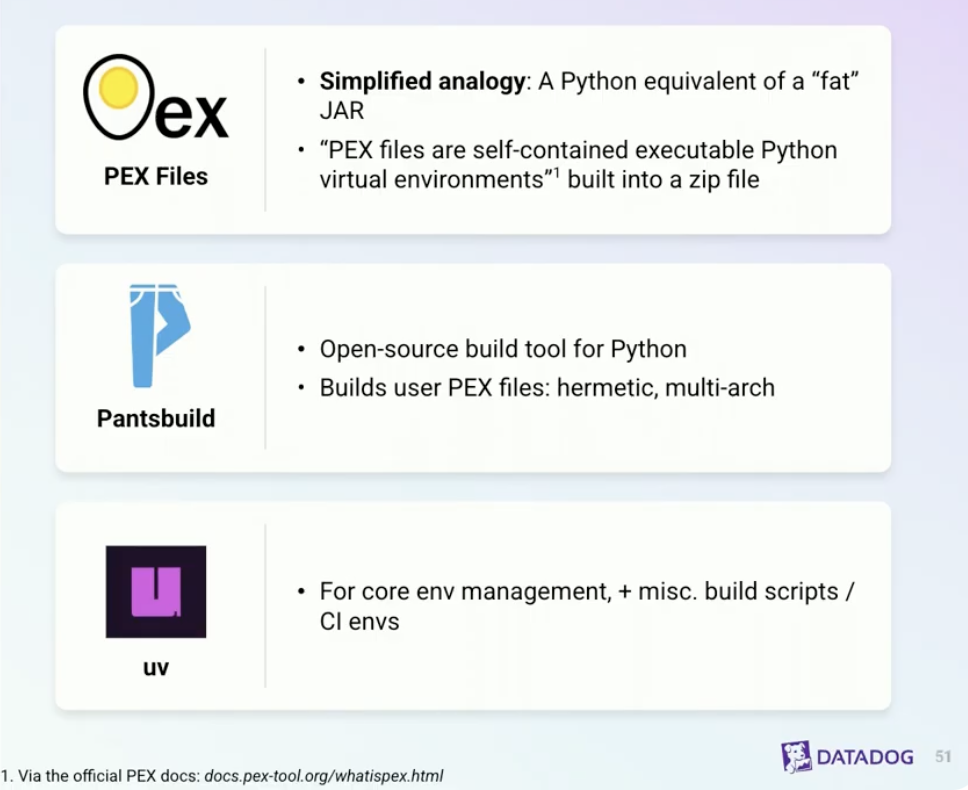

Environment isolation posed another challenge. Custom Python code run with the @task decorator or the PythonOperator gets no environment separation, and the PythonVirtualenvOperator struggles with large projects that require arbitrary entry points. Datadog's solution is to package user task logic into PEX files with Pantsbuild. Users publish the PEX files to cloud storage, and a custom operator pulls and executes them. Data practitioners develop and run PEX files entirely separately from Airflow, without needing to build local Airflow environments for testing.

Figure 2: How Datadog implements environment isolation. Image source.
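The core of a "pull and execute" operator is small. The sketch below is a hypothetical illustration, not Datadog's custom operator. `fetch_artifact` and `run_packaged_task` are illustrative names, and the download step only handles local paths and `file://` URIs in place of a real cloud-storage client. It also assumes the PEX was built for an interpreter compatible with the worker's. For a real PEX, setting the `PEX_MODULE` environment variable through `extra_env` is one way to choose the entry point.

```python
from __future__ import annotations

import os
import shutil
import subprocess
import sys
import tempfile
from pathlib import Path


def fetch_artifact(uri: str, dest_dir: Path) -> Path:
    """Hypothetical stand-in for a cloud-storage download.

    Only local paths and file:// URIs are handled; a real operator would call
    the storage provider's client library here instead.
    """
    src = Path(uri[len("file://"):] if uri.startswith("file://") else uri)
    dest = dest_dir / src.name
    shutil.copyfile(src, dest)
    return dest


def run_packaged_task(uri: str, args: list[str], extra_env: dict[str, str] | None = None) -> str:
    """Fetch a packaged task and run it in its own interpreter process.

    A PEX file, like a stdlib zipapp, is a zip archive with a __main__ entry,
    so the interpreter can execute it directly. With a PEX, the task's
    dependencies travel inside the archive instead of coming from the worker.
    """
    with tempfile.TemporaryDirectory() as tmp:
        artifact = fetch_artifact(uri, Path(tmp))
        result = subprocess.run(
            [sys.executable, str(artifact), *args],
            env={**os.environ, **(extra_env or {})},
            capture_output=True,
            text=True,
            check=True,  # surface a non-zero exit as a task failure
        )
    return result.stdout
```

Running the artifact in a subprocess is what provides the isolation: the task never imports into the worker's Python process, so its dependency versions cannot clash with Airflow's own.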

The Power of Community

Datadog's journey validates a fundamental truth: open source communities move faster than any single engineering team. Airflow 3 delivered capabilities that would have taken years to build independently. The competitive advantage isn't building your own orchestrator but leveraging community momentum and contributing the features that make it fit your use case.

Watch the full session for detailed demonstrations of multi-tenant architecture, cluster policies, and end-to-end observability.

Multi-Tenancy Made Simple with Astro

Astro, the leading Airflow managed service from Astronomer, delivers enterprise-grade multi-tenancy out of the box. Teams get isolated Deployments with dedicated resources while platform teams maintain centralized governance and security controls. You establish consistent deployment patterns through automated pipelines, container images, credential management, and execution settings so teams ship Dags rapidly while the platform maintains reliability, guardrails, and uniform operations across all tenants. Workspace and Organization-level RBAC provides granular permissions without custom implementations.

Start a free trial at astronomer.io and deploy your first multi-tenant environment in minutes.
