When designing your data pipelines, you may encounter use cases that require more complex task flows than “Task A > Task B > Task C”. For example, you may have a use case where you need to decide between multiple tasks to execute based on the results of an upstream task. Or you may have a case where part of your pipeline should only run under certain external conditions. Fortunately, Airflow has multiple options for building conditional logic and/or branching into your DAGs.

In this guide, you’ll learn how you can use @task.branch (BranchPythonOperator) and @task.short_circuit (ShortCircuitOperator), other available branching operators, and additional resources to implement conditional logic in your Airflow DAGs.

To get the most out of this guide, you should have an understanding of:

@task.branch (BranchPythonOperator)

One of the simplest ways to implement branching in Airflow is to use the @task.branch decorator, which is a decorated version of the BranchPythonOperator. @task.branch accepts any Python function as input, as long as the function returns a valid task ID, or a list of valid task IDs, for the tasks that the DAG should run after the function completes.

In the following example we use a choose_branch function that returns one set of task IDs if the result is greater than 0.5 and a different set if the result is less than or equal to 0.5:
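A minimal sketch of such a function, written as a plain Python callable so that it runs without Airflow installed. The task IDs (`task_a`, `task_b`, `task_c`) are hypothetical, and `result` is assumed to be a float produced by an upstream task. In a DAG file, the function would be decorated with `@task.branch` or passed to the BranchPythonOperator as its `python_callable`.

```python
def choose_branch(result: float) -> list[str]:
    """Return the IDs of the tasks that should run next."""
    if result > 0.5:
        # Hypothetical task IDs for the "high" branch.
        return ["task_a", "task_b"]
    # Hypothetical task ID for the "low" branch (result <= 0.5).
    return ["task_c"]
```

Every ID that the function returns needs to belong to a task directly downstream of the branching task.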

In general, the @task.branch decorator is a good choice if your branching logic can be easily implemented in a simple Python function. Whether you want to use the decorated version or the traditional operator is a question of personal preference.

The code below shows a full example of how to use @task.branch in a DAG:

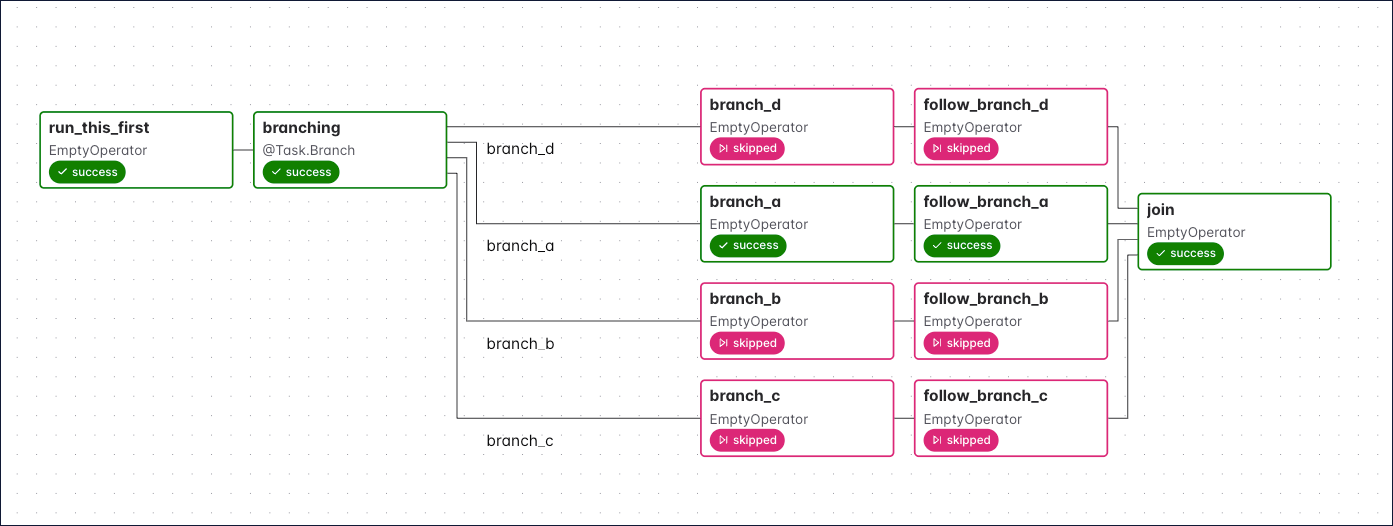

In this DAG, random.choice() returns one random option out of a list of four branches. In the following screenshot, where branch_b was randomly chosen, the two tasks in branch_b were successfully run while the others were skipped.

If you have downstream tasks that need to run regardless of which branch is taken, like the join task in the previous example, you need to update the trigger rule. The default trigger rule in Airflow is all_success, which means that if upstream tasks are skipped, then the downstream task will not run. In the previous example, none_failed_min_one_success is specified to indicate that the task should run as long as one upstream task succeeded and no tasks failed.
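To make the difference between the two trigger rules concrete, the sketch below models them in plain Python. It is a simplified illustration and not Airflow's scheduler logic: the `should_run` helper is hypothetical, and the state strings are assumed to follow Airflow's task-state names.

```python
def should_run(trigger_rule: str, upstream_states: list[str]) -> bool:
    """Simplified model of whether a task runs, given its upstream task states."""
    if trigger_rule == "all_success":
        # Any skipped or failed upstream task prevents the task from running.
        return all(state == "success" for state in upstream_states)
    if trigger_rule == "none_failed_min_one_success":
        # Skipped upstream tasks are fine, as long as nothing failed
        # and at least one upstream task succeeded.
        no_failures = not any(
            state in ("failed", "upstream_failed") for state in upstream_states
        )
        return no_failures and "success" in upstream_states
    raise ValueError(f"Trigger rule not covered by this sketch: {trigger_rule}")


# States of the four branches after branch_b was chosen.
states = ["skipped", "success", "skipped", "skipped"]
print(should_run("all_success", states))
print(should_run("none_failed_min_one_success", states))
```

With the default rule, the skipped branches keep the join task from running. With none_failed_min_one_success, the one successful branch is enough.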

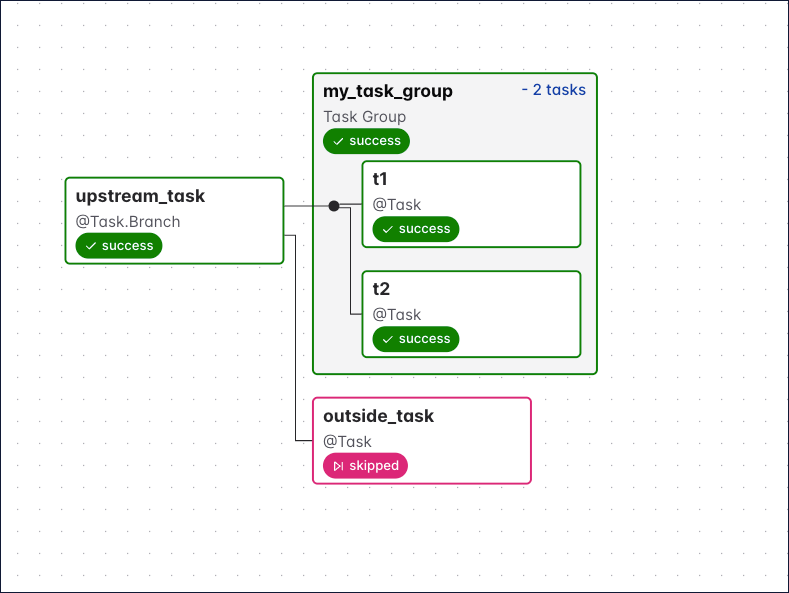

You can also set a task group as the direct downstream element of a branching task by returning its task_group_id in your decorated function or python_callable instead of a task_id. All root tasks of the task group run if the branching task returns the task_group_id.

Finally, note that with the @task.branch decorator your Python function must return at least one task ID for whichever branch is chosen (in other words, it can’t return nothing). If one of the paths in your branching should do nothing, you can use an EmptyOperator in that branch.
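As a sketch of that pattern, the function below always returns a task ID, including on the path where nothing should happen. The names `new_row_count`, `process_rows`, and `do_nothing` are hypothetical. `do_nothing` is assumed to be the task ID of an EmptyOperator defined directly downstream of the branching task.

```python
def choose_branch(new_row_count: int) -> str:
    """Return the ID of the next task; never return nothing."""
    if new_row_count > 0:
        return "process_rows"  # hypothetical task that does the real work
    return "do_nothing"  # hypothetical EmptyOperator task for the no-op path
```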

@task.short_circuit (ShortCircuitOperator)

Another option for implementing conditional logic in your DAGs is the @task.short_circuit decorator, which is a decorated version of the ShortCircuitOperator. This operator takes a Python function that returns True or False based on logic implemented for your use case. If True is returned, the DAG continues, and if False is returned, all downstream tasks are skipped.

@task.short_circuit is useful when you know that some tasks in your DAG should run only occasionally. For example, maybe your DAG runs daily, but some tasks should only run on Sundays. Or maybe your DAG orchestrates a machine learning model, and tasks that publish the model should only be run if a certain accuracy is reached after training. This type of logic can also be implemented with @task.branch, but that operator requires a task ID to be returned. Using the @task.short_circuit decorator can be cleaner in cases where the conditional logic equates to “run or not” as opposed to “run this or that”.
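The two scenarios above could be expressed as condition functions like the ones sketched here. They are plain Python callables, so they run without Airflow. The function names, the `run_date` and `accuracy` arguments, and the 0.9 threshold are illustrative assumptions. In a DAG file, each function would be decorated with `@task.short_circuit` or passed to the ShortCircuitOperator as its `python_callable`.

```python
from datetime import date


def is_sunday(run_date: date) -> bool:
    """True only on Sundays; date.weekday() counts Monday as 0 and Sunday as 6."""
    return run_date.weekday() == 6


def accuracy_reached(accuracy: float, threshold: float = 0.9) -> bool:
    """True when the trained model meets the (assumed) accuracy threshold."""
    return accuracy >= threshold
```

When either function returns False, the tasks downstream of it are skipped. When it returns True, they run as usual.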

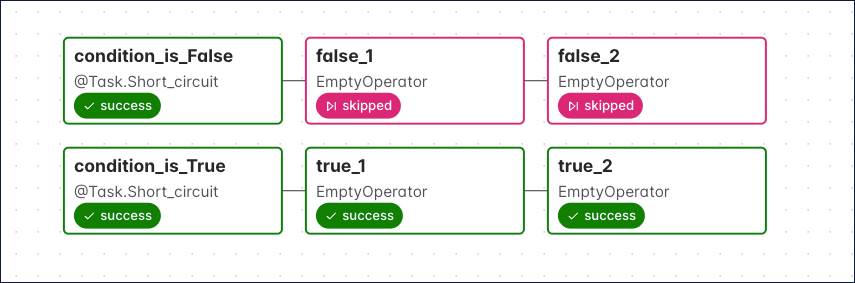

The following DAG shows an example of how to implement @task.short_circuit:

In this DAG there are two short circuits, one which always returns True and one which always returns False. When you run the DAG, you can see that tasks downstream of the True condition operator ran, while tasks downstream of the False condition operator were skipped.

Airflow offers a few other branching operators that work similarly to the BranchPythonOperator but for more specific contexts:

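- BranchSQLOperator: branches based on whether a given SQL query returns true or false.
- BranchDayOfWeekOperator: branches based on whether the current day of the week matches the week_day parameter.
- BranchDateTimeOperator: branches based on whether the current time falls between the target_lower and target_upper times.
- BranchPythonVirtualenvOperator: branches based on a Python function, like the BranchPythonOperator, but runs the function in a newly created virtual environment, which can be cached by providing a venv_cache_path.

The SQL, day-of-week, and date-time operators take follow_task_ids_if_true and follow_task_ids_if_false parameters, which provide the list of task(s) to include in the branch based on the result of the operator's condition.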
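The follow_task_ids_if_true and follow_task_ids_if_false pattern can be modeled in a few lines of plain Python. The sketch below imitates a day-of-week check. It is an illustration of the idea and not the operator's actual implementation: the `branch_on_week_day` helper and the task IDs in the usage lines are hypothetical.

```python
from datetime import date

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]


def branch_on_week_day(
    today: date,
    week_day: str,
    follow_task_ids_if_true: list[str],
    follow_task_ids_if_false: list[str],
) -> list[str]:
    """Pick the task IDs to follow, depending on whether today matches week_day."""
    if WEEKDAYS[today.weekday()] == week_day.lower():
        return follow_task_ids_if_true
    return follow_task_ids_if_false


# 2024-01-07 was a Sunday, so the "true" list is selected.
selected = branch_on_week_day(date(2024, 1, 7), "Sunday", ["weekly_report"], ["daily_report"])
```

Unlike with @task.branch, no custom function decides which IDs to return. The operator evaluates its built-in condition and follows one of the two lists you configured.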