Configure a Deployment on Astronomer Software

An Airflow Deployment on Astronomer is an instance of Apache Airflow that was created using the Software UI or the Astro CLI. Each Airflow Deployment on Astronomer is hosted on a single Kubernetes namespace, has a dedicated set of resources, and operates with an isolated Postgres metadata database.

This guide walks you through the process of creating and configuring an Airflow Deployment on Astronomer.

Prerequisites

To create an Airflow Deployment, you'll need:

- The Astro CLI installed.

- An Astronomer platform at

app.BASEDOMAIN. - An Astronomer Workspace.

Create a Deployment

To create an Airflow Deployment on Astronomer:

-

Log in to your Astronomer platform at

app.BASEDOMAIN, select a Workspace, and then click New Deployment. -

Complete the following fields:

- Name: Enter a descriptive name for the Deployment.

- Description: (Optional)

- Airflow Version: Astronomer recommends using the latest version.

- Executor: Astronomer recommends starting with Local.

-

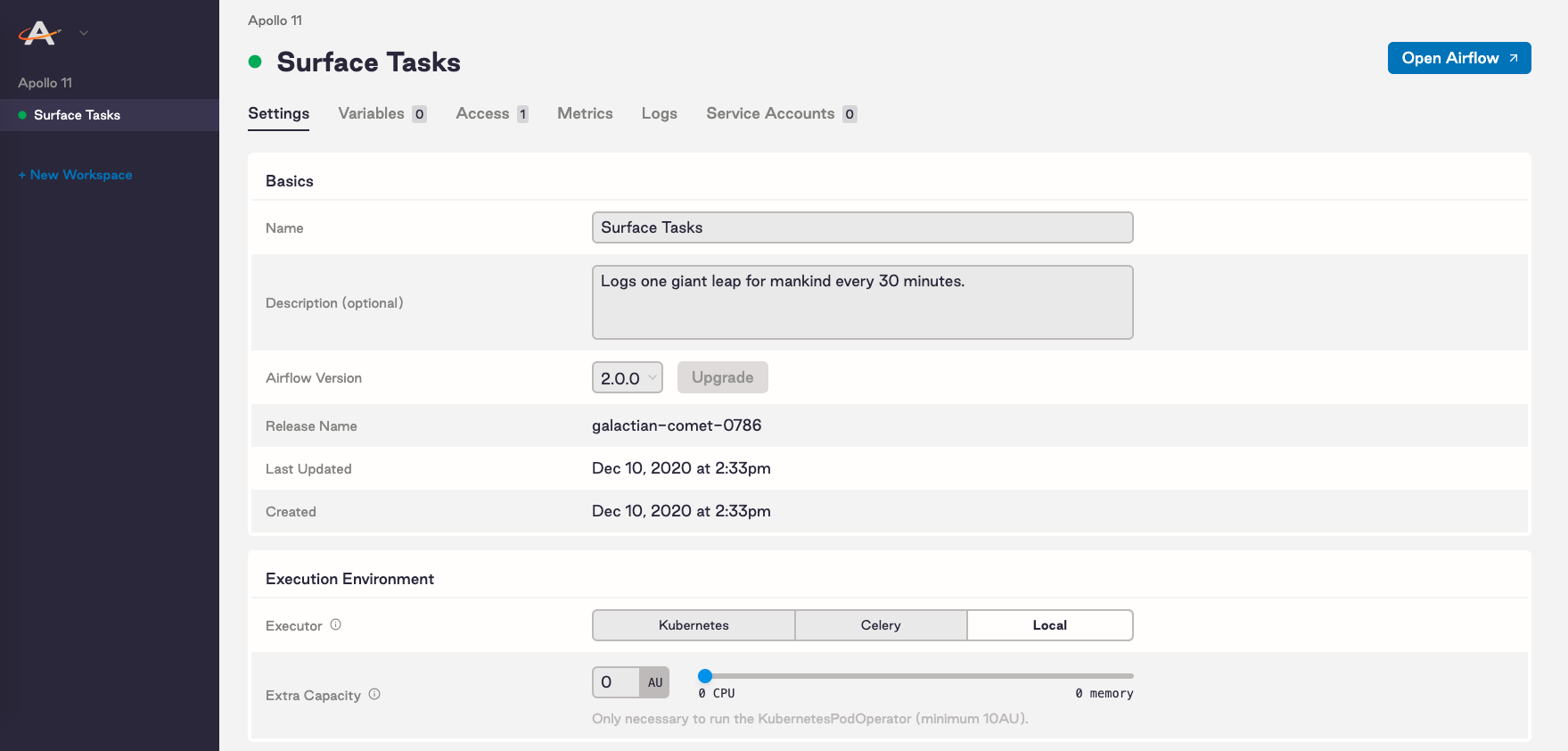

Click Create Deployment and give the Deployment a few moments to be created. After the Deployment is created, you'll be able to access the Settings page of your new Deployment:

On this tab you can modify resources for your Deployment. Specifically, you can:

- Select an Airflow executor

- Allocate resources to your Airflow scheduler and webserver

- Set scheduler count (Airflow 2.0+ only)

- Add extra capacity (Kubernetes only)

- Set worker count (Celery only)

- Adjust your worker termination grace period (Celery only)

Select an executor

The Airflow executor works closely with the Airflow scheduler to decide what resources will complete tasks as they're queued. The difference between executors comes down to their available resources and how they utilize those resources to distribute work.

Astronomer supports 3 executors:

Though it largely depends on your use case, we recommend the Local executor for development environments and the Celery or Kubernetes executors for production environments operating at scale.

For a detailed breakdown of each executor, see Airflow executors explained.

Scale core resources

Apache Airflow requires two primary components:

- The Airflow Webserver

- The Airflow Scheduler

To scale either resource, simply adjust the corresponding slider in the Software UI to increase its available computing power.

Read the following sections to help you determine which core resources to scale and when.

Airflow webserver

The Airflow webserver is responsible for rendering the Airflow UI, where users can monitor DAGs, view task logs, and set various non-code configurations.

If a function within the Airflow UI is slow or unavailable, we recommend increasing the AU allocated towards the webserver. The default resource allocation is 5 AU.

Note: Introduced in Airflow 1.10.7, DAG Serialization removes the need for the webserver to regularly parse all DAG files, making the component significantly more light-weight and performant. DAG Serialization is enabled by default in Airflow 1.10.12+ and is required in Airflow 2.0.

Airflow scheduler

The Airflow scheduler is responsible for monitoring task execution and triggering downstream tasks once dependencies have been met.

If you experience delays in task execution, which you can track via the Gantt Chart view of the Airflow UI, we recommend increasing the AU allocated towards the scheduler. The default resource allocation is 10 AU.

Tip: To set alerts that notify you via email when your Airflow scheduler is underprovisioned, refer to Airflow alerts.

Scheduler count

Airflow 2.0 comes with the ability for users to run multiple schedulers concurrently to ensure high-availability, zero recovery time, and faster performance. By adjusting the Scheduler Count slider in the Software UI, you can provision up to 4 schedulers on any Deployment running Airflow 2.0+ on Astronomer.

Each individual scheduler will be provisioned with the AU specified in Scheduler Resources. For example, if you set Scheduler Resources to 10 AU and Scheduler Count to 2, your Airflow Deployment will run with 2 Airflow schedulers using 10 AU each for a total of 20 AU.

To increase the speed at which tasks are scheduled and ensure high-availability, we recommend provisioning 2 or more Airflow schedulers for production environments. For more information on the Airflow 2.0 scheduler, refer to Astronomer's "The Airflow 2.0 Scheduler" blog post.

Triggerer

Airflow 2.2 introduces the triggerer, which is a component for running tasks with deferrable operators. Like the scheduler, the triggerer is highly-available: If a triggerer shuts down unexpectedly, the tasks it was deferring can be recovered and moved to another triggerer.

By adjusting the Triggerer slider in the Software UI, you can provision up to 2 triggerers on any Deployment running Airflow 2.2+. To take advantage of the Triggerer's high availability, we recommend provisioning 2 triggerers for production Deployments.

Kubernetes executor: Set extra capacity

On Astronomer, resources required for the KubernetesPodOperator or the Kubernetes Executor are set as Extra Capacity.

The Kubernetes executor and KubernetesPodOperator each spin up an individual Kubernetes pod for each task that needs to be executed, then spin down the pod once that task is completed.

The amount of AU (CPU and Memory) allocated to Extra Capacity maps to resource quotas on the Kubernetes Namespace in which your Airflow Deployment lives on Astronomer. More specifically, Extra Capacity represents the maximum possible resources that could be provisioned to a pod at any given time.

AU allocated to Extra Capacity does not affect scheduler or webserver performance and does not represent actual usage. It will not be charged as a fixed resource.

Celery executor: Configure workers

To optimize for flexibility and availability, the Celery executor works with a set of independent Celery workers across which it can delegate tasks. On Astronomer, you're free to configure your Celery workers to fit your use case.

Worker count

By adjusting the Worker Count slider, users can provision up to 20 Celery workers on any Airflow Deployment.

Each individual worker will be provisioned with the AU specified in Worker Resources. If you set Worker Resources to 10 AU and Worker Count to 3, for example, your Airflow Deployment will run with 3 Celery workers using 10 AU each for a total of 30 AU. Worker Resources has a maximum of 100 AU (10 CPU, 37.5 GB Memory).

Worker termination grace period

On Astronomer, Celery workers restart following every code deploy to your Airflow Deployment. This is to make sure that workers are executing with the most up-to-date code. To minimize disruption during task execution, however, Astronomer supports the ability to set a Worker Termination Grace Period.

If a deploy is triggered while a Celery worker is executing a task and Worker Termination Grace Period is set, the worker will continue to process that task up to a certain number of minutes before restarting itself. By default, the grace period is ten minutes.

Tip: The Worker Termination Grace Period is an advantage to the Celery executor. If your Airflow Deployment runs on the Local executor, the scheduler will restart immediately upon every code deploy or configuration change and potentially interrupt task execution.

Set environment variables

Environment variables can be used to set Airflow configurations and custom values, both of which can be applied to your Airflow Deployment either locally or on Astronomer.

These can include setting Airflow Parallelism, an SMTP service for alerts, or a secrets backend to manage Airflow connections and variables.

Environment variables can be set for your Airflow Deployment either in the Variables tab of the Software UI or in your Dockerfile. If you're developing locally, they can also be added to a local .env file. For more information on configuring environment variables, read Environment variables on Astronomer.

Note: Environment variables are distinct from Airflow variables and XComs, which you can configure directly via the Airflow UI and are used for inter-task communication.

Customize release names

An Airflow Deployment's release name on Astronomer is a unique, immutable identifier for that Deployment that corresponds to its Kubernetes namespace and that renders in Grafana, Kibana, and other platform-level monitoring tools. By default, release names are randomly generated in the following format: noun-noun-<4-digit-number>. For example: elementary-zenith-7243.

To customize the release name for a Deployment as you're creating it, you first need to enable the feature on your Astronomer platform. To do so, set the following value in your config.yaml file:

astronomer:

houston:

config:

deployments:

manualReleaseNames: true # Allows you to set your release names

Then, push the updated config.yaml file to your installation as described in Apply a config change.

After applying this change, the Release Name field in the Software UI becomes configurable:

DAG deploy mechanisms

You can use two different DAG deploy mechanisms on Astronomer:

- Image-based deploys

- NFS volume-based deploys

Image-based deploys

By default, you can deploy DAGs to an Airflow Deployment by building them into a Docker image and pushing that image to the Astronomer Registry via the CLI or API. This workflow is described in Deploy DAGs via the CLI.

This mechanism builds your DAGs into a Docker image alongside all other files in your Astro project directory, including your Python and OS-level packages, your Dockerfile, and your plugins.

The resulting image is then used to generate a set of Docker containers for each of Airflow's core components. Every time you run astro deploy in the Astro CLI, your DAGs are rebuilt into a new Docker image and all Docker containers are restarted.

Since the image-based deploy does not require additional setup, we recommend it for those getting started with Airflow.

Volume-based deploys

For advanced teams who deploy DAG changes more frequently, Astronomer also supports an NFS volume-based deploy mechanism.

Using this mechanism, you can deploy DAGs to an Airflow Deployment on Astronomer by adding the corresponding Python files to a shared file system on your network. Compared to image-based deploys, NFS volume-based deploys limit downtime and enable continuous deployment.

To deploy DAGs to a Deployment via an NFS volume, you must first enable the feature at the platform level. For more information, read Deploy DAGs via NFS volume.

Git-sync deploys

For teams using a Git-based workflow for DAG development, Astronomer supports a git-sync deploy mechanism.

To deploy DAGs via git-sync, you add DAGs to a repository that has been configured to sync with your Astronomer Deployment. Once the Deployment detects a change in the repository, your DAG code will automatically sync to your Deployment with no downtime. For more information on configuring this feature, read Deploy DAGs via git sync.

Delete a Deployment

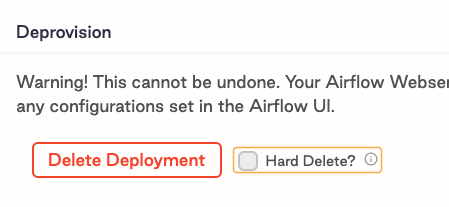

You can delete an Airflow Deployment using the Delete Deployment button at the bottom of the Deployment's Settings tab.

When you delete a Deployment, your Airflow webserver, scheduler, metadata database, and deploy history will be deleted, and you will lose any configurations set in the Airflow UI.

In your Astronomer database, the corresponding Deployment record will be given a deletedAt value and continue to persist until permanently deleted.

Hard delete a Deployment

Note: This feature must first be enabled at the platform level before it can be used. To enable this feature, set

astronomer.houston.config.deployments.hardDeleteDeployment: truein yourconfig.yamlfile and push the changes to your platform as described in Apply a config change.

To reuse a custom release name given to an existing Deployment, you need to first hard delete both the Deployment's metadata database and the Deployment's entry in your Astronomer database. To do so, select the Hard Delete? checkbox before clicking Delete Deployment. Alternatively, you can run astro deployment delete --hard via the Astro CLI.

This action permanently deletes all data associated with a Deployment, including the database and underlying Kubernetes resources.