Note: Since notifiers were released in Airflow 2.6, you no longer need to build your own Airflow notification system from scratch. Notifiers let you define a notification method once and apply it to all of your DAGs without repeating the same boilerplate code in each one. Check out our How to monitor your pipelines with Airflow and Astro alerts webinar and our Manage Airflow DAG notifications guide to learn more!

Welcome to the recap of a webinar on notifications in Airflow - a really fun topic that’s relevant to all of us working with data pipelines!

Airflow is an ideal orchestrator: defining pipelines in code makes it flexible, and a vast network of provider packages and community contributions makes it extensible. Its sophisticated and flexible infrastructure also makes it highly scalable.

All of it sounds great… But what happens if something goes wrong?

We’d all love to think that our code has no bugs, but that isn’t realistic. And even if we do have perfect code, sometimes things happen that are outside of our control. An API or external system might go down, you might have some mysterious Kubernetes error that you’re never able to replicate. We’ve all been there.

So the question is not really IF something will go wrong, but how you handle it when it does. The first step is knowing that something happened, and that's what notifications are for!

Let’s get to know them better.

During the webinar, we covered:

- Notification basics

- How notifications work within Airflow and what you can do with them

- Setting up the most common methods: email notifications, Slack notifications, etc.

- More advanced topics: SLAs, data quality issues

- Demo

- Q&A

Notification Basics

How do you know that something has failed?

Constantly checking the UI just in case something happens isn’t great for production. Fortunately, Airflow has a ton of options for notifications! And they’re super-flexible.

Notifications can be triggered by many different events, sent via different methods, and delivered to any number of systems. Check out our complementary guide on Airflow notifications, which goes deeper into the subject. A highly recommended read!

Notification Levels

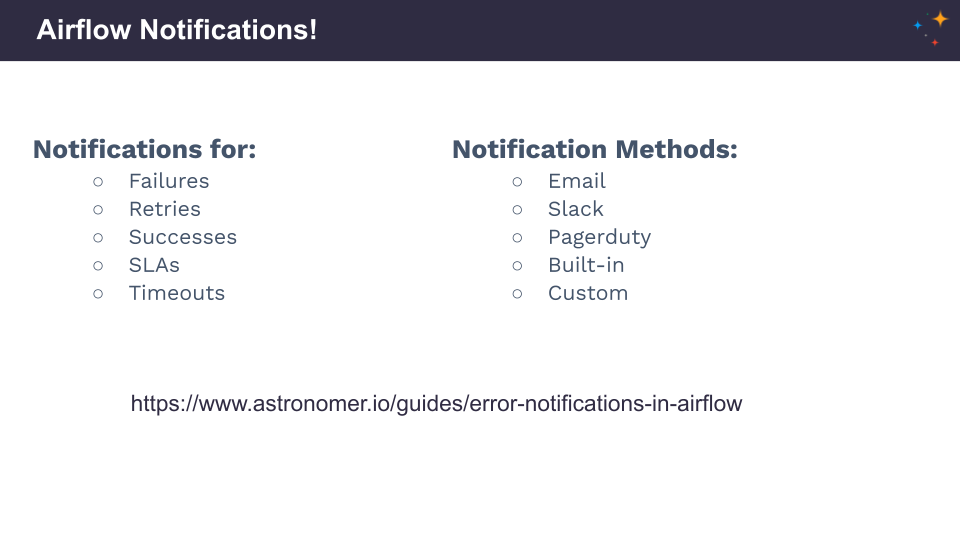

The notification level is the level of your pipeline (the whole DAG or an individual task) at which a notification is configured.

You can set notifications:

- at the DAG level (see example above: email_on_failure set to False), where every task in the DAG inherits them

- at the task level, if you need more specific control (see example above: email_on_failure overridden for a specific task)

This concept isn't unique to notifications; it's common throughout Airflow.

Any task-level configuration takes precedence over your DAG-level configuration, so combining the two is a good way to get more specific.
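To make the precedence rule concrete, here is a plain-Python model of it, not Airflow's own code. In a real DAG you would pass the defaults as DAG(default_args=...) and override one of them by passing the same keyword (for example email_on_failure=True) to an individual operator. The email address and the helper name effective_task_args are hypothetical.

```python
# Hypothetical DAG-level defaults, as you might pass via DAG(default_args=...).
DAG_DEFAULT_ARGS = {
    "email": ["data-team@example.com"],
    "email_on_failure": False,
    "email_on_retry": False,
    "retries": 1,
}


def effective_task_args(dag_default_args, task_kwargs):
    """Model of the rule: task-level keyword arguments win over DAG-level defaults."""
    return {**dag_default_args, **task_kwargs}


# A critical task opts back in to failure emails; everything else is inherited.
critical = effective_task_args(DAG_DEFAULT_ARGS, {"email_on_failure": True})

# A routine task passes no overrides, so it inherits the DAG defaults unchanged.
routine = effective_task_args(DAG_DEFAULT_ARGS, {})

print(critical["email_on_failure"], routine["email_on_failure"])
```

The later dictionary in the merge wins, which mirrors the "more specific setting wins" behavior described above.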

Notification Triggers

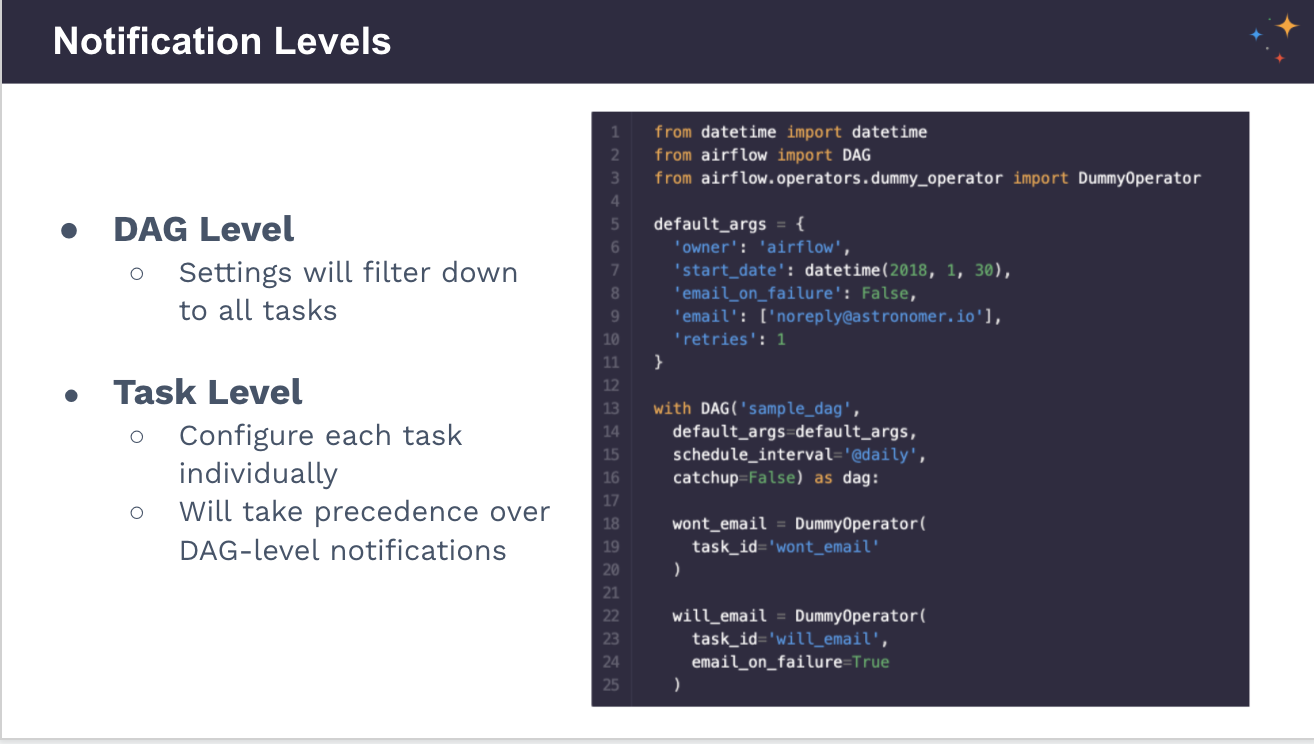

Notifications aren't just for failures! You can also set them for retries and successes. With Airflow, you have complete control over when you get notified.

Airflow also has excellent functionality for giving your DAGs the best chance of success:

- retry_delay, which sets the period of time between retries

- retry_exponential_backoff (see example), which makes the time between retries grow progressively longer

These are very useful for situations outside your control, for example when DAGs depend on APIs or external systems that might periodically go down. They can be used in conjunction with notifications.
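The sketch below shows, in simplified form, how exponential backoff spaces out retries. It assumes the wait before retry n is retry_delay doubled n-1 times, optionally capped by a maximum. Airflow's real calculation (enabled with retry_exponential_backoff=True and capped with max_retry_delay on the operator) also adds some jitter, so treat these numbers as an approximation; backoff_delays is a hypothetical helper.

```python
from datetime import timedelta


def backoff_delays(retry_delay, retries, max_retry_delay=None):
    """Simplified backoff: double the wait on each retry, optionally capped.

    Omits the jitter Airflow adds, so real delays will differ somewhat.
    """
    delays = []
    for attempt in range(retries):
        delay = retry_delay * (2 ** attempt)  # 1x, 2x, 4x, 8x, ...
        if max_retry_delay is not None:
            delay = min(delay, max_retry_delay)  # never wait longer than the cap
        delays.append(delay)
    return delays


for wait in backoff_delays(timedelta(minutes=5), retries=4, max_retry_delay=timedelta(minutes=30)):
    print(wait)
```

With a 5-minute base delay and a 30-minute cap, the waits grow from 5 to 10 to 20 minutes and then stay at 30, which gives a flaky external system progressively more time to recover.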

Custom Notifications

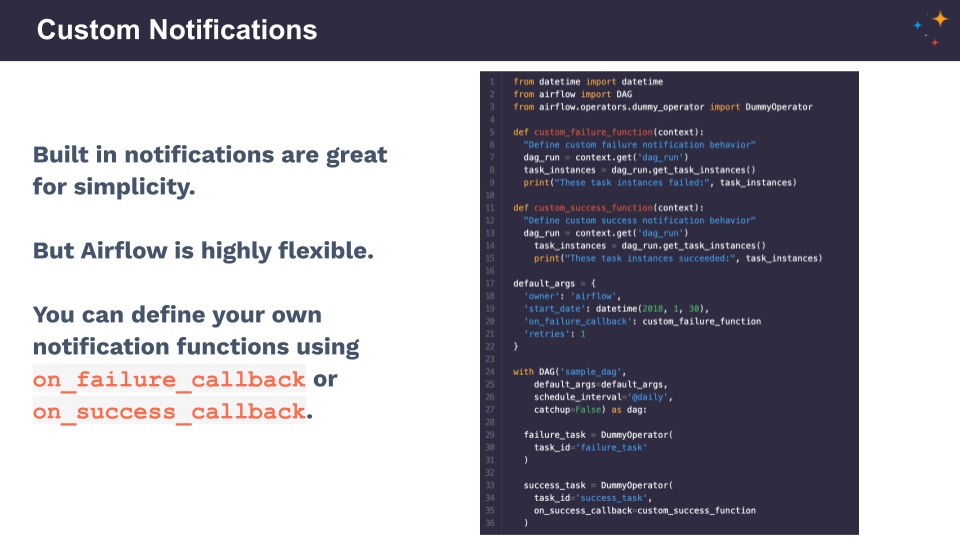

One of the most significant benefits of Airflow is that if you can write something in Python, you can do it in Airflow. Built-in notifications are simple and easy to use, but you can also customize your notifications and send them to anything that has an API.

The easiest way to define custom notifications in Airflow is with the following callback parameters:

- on_failure_callback (example)

- on_success_callback

Because they're part of BaseOperator, these parameters are available on any task, and because the callbacks are ordinary Python functions, they can do anything you want. You have full control over what happens when a task fails or succeeds.
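Here is a minimal sketch of a pair of callbacks. Airflow calls each callback with the task's context dictionary, which includes the task instance ("task_instance") and, for failures, the raised "exception". The names notify_failure, notify_success, and SENT_NOTIFICATIONS are hypothetical, and the list simply stands in for whatever API you would actually call. In a DAG you would wire them up with on_failure_callback=notify_failure and on_success_callback=notify_success, either on a task or in default_args.

```python
from types import SimpleNamespace

# Stand-in for an external API; a real callback might POST to a chat or paging service.
SENT_NOTIFICATIONS = []


def _summarize(context, status):
    """Pull a few useful fields out of the Airflow task context."""
    ti = context["task_instance"]
    summary = {
        "dag_id": ti.dag_id,
        "task_id": ti.task_id,
        "try_number": ti.try_number,
        "status": status,
    }
    exc = context.get("exception")  # present in the context of failure callbacks
    if exc is not None:
        summary["error"] = repr(exc)
    return summary


def notify_failure(context):
    SENT_NOTIFICATIONS.append(_summarize(context, "failed"))


def notify_success(context):
    SENT_NOTIFICATIONS.append(_summarize(context, "success"))


# Simulate the context Airflow would pass in, for a quick local check.
fake_ti = SimpleNamespace(dag_id="example_dag", task_id="load_data", try_number=2)
notify_failure({"task_instance": fake_ti, "exception": ValueError("row count was 0")})
print(SENT_NOTIFICATIONS[-1])
```

Keeping the message-building logic separate from the sending logic makes it easy to test the callback locally before pointing it at a real service.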

Notification Methods

1. Email Notifications

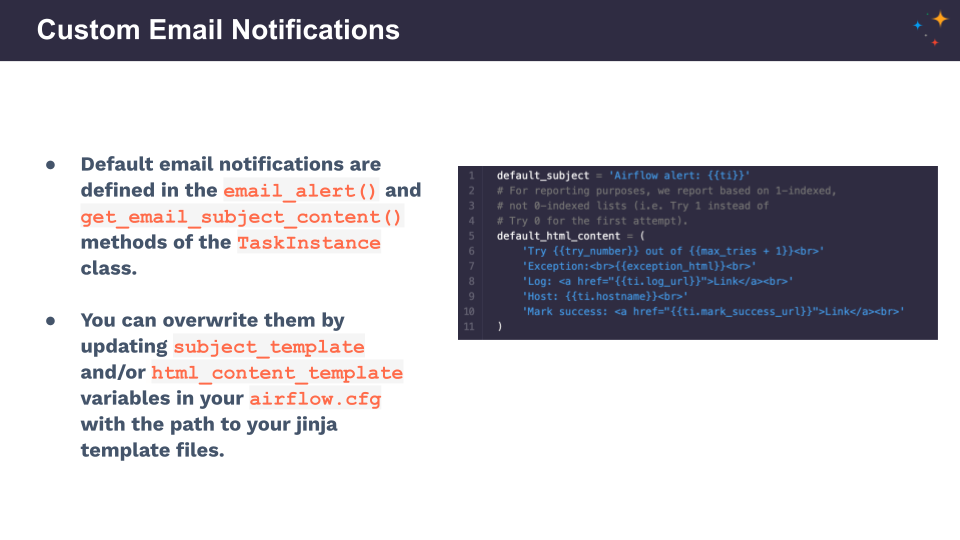

Likely everybody who has used Airflow for any length of time has set up email notifications. They're probably the most widely used method in most organizations: when something fails, you get an email with the details.

Email notifications are built into Airflow: all you have to do is configure an SMTP server so Airflow has a way to send the email.

- Configure your SMTP server in the SMTP section of your Airflow config.

- Or set the equivalent Airflow environment variables.

- Turn notifications on, as seen in the previous examples.

You can customize your email notifications if you want a different email subject, fancy graphics, or more information about the DAG: simply provide Airflow with the path to a template file. You can do this:

- In your Airflow config

- Using an environment variable

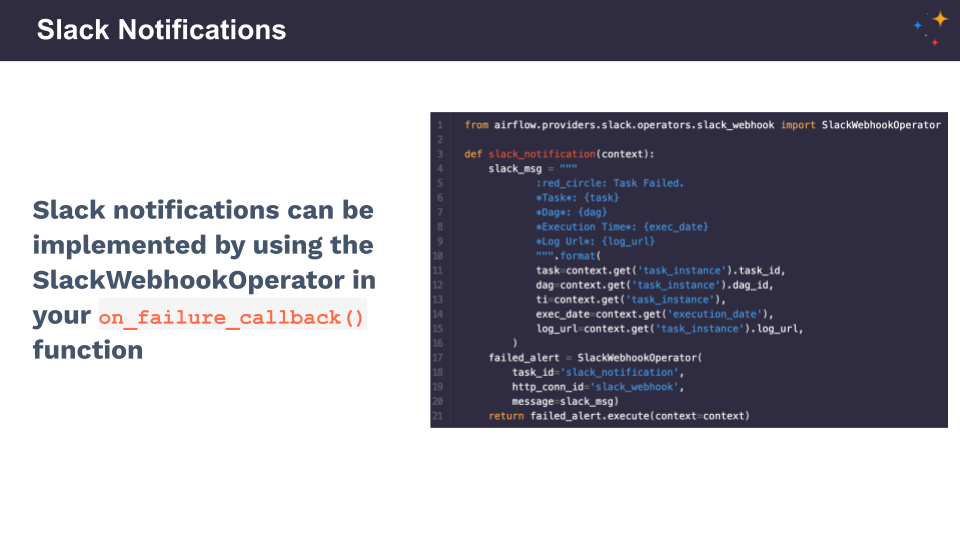
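Both the SMTP settings and the template paths are ordinary Airflow config options, and any config option can also be set through an environment variable named AIRFLOW__{SECTION}__{KEY}. The sketch below derives those names. The option names (smtp_host, smtp_port, smtp_starttls, smtp_mail_from in the smtp section; subject_template and html_content_template in the email section) come from Airflow's configuration reference. The host, sender address, and template paths are hypothetical placeholders, and airflow_env_var is a hypothetical helper.

```python
def airflow_env_var(section: str, key: str) -> str:
    """Build the environment variable name Airflow reads for a config option."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


# Hypothetical values: replace with your own SMTP server and template paths.
settings = {
    ("smtp", "smtp_host"): "smtp.example.com",
    ("smtp", "smtp_port"): "587",
    ("smtp", "smtp_starttls"): "True",
    ("smtp", "smtp_mail_from"): "airflow@example.com",
    ("email", "subject_template"): "/usr/local/airflow/include/email_subject.jinja2",
    ("email", "html_content_template"): "/usr/local/airflow/include/email_body.jinja2",
}

env = {airflow_env_var(section, key): value for (section, key), value in settings.items()}

# Print shell exports you could adapt for your deployment.
for name, value in sorted(env.items()):
    print(f"export {name}={value}")
```

Keep real credentials such as SMTP passwords out of code and plain config files; a secrets backend or your deployment's secret management is a better home for them.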

2. Slack Notifications

Perhaps your organization uses Slack rather than emails? Or both?

Setting up Slack notifications involves:

- Defining an on_failure_callback function

- Using the SlackWebhookOperator (check it out in the Astronomer Registry) within the function

- Defining the Slack message you want to receive

- Turning on notifications

We highly recommend Kaxil Naik's blog post on setting up and customizing Slack notifications; that's where the example comes from.

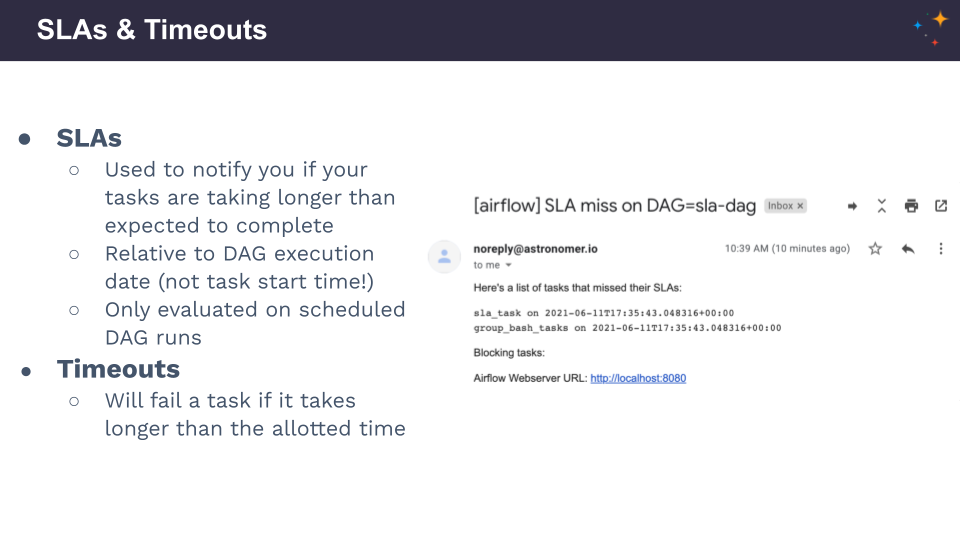
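If you'd rather not depend on the Slack provider, a failure callback can also post straight to a Slack incoming webhook, which accepts a JSON body with a "text" field. This is a standard-library sketch, not the SlackWebhookOperator approach from the post; the webhook URL is a hypothetical placeholder, and build_slack_payload and slack_failure_callback are hypothetical names. The log link uses Slack's <url|label> markup.

```python
import json
import urllib.request
from types import SimpleNamespace

# Hypothetical placeholder: use your own incoming-webhook URL, ideally from a secret.
SLACK_WEBHOOK_URL = "https://hooks.slack.com/services/T000/B000/XXXX"


def build_slack_payload(context):
    """Format a short failure message from the Airflow task context."""
    ti = context["task_instance"]
    text = (
        ":red_circle: Task failed\n"
        f"*DAG*: {ti.dag_id}\n"
        f"*Task*: {ti.task_id}\n"
        f"*Log*: <{ti.log_url}|View log>"
    )
    return {"text": text}


def slack_failure_callback(context):
    """Use as on_failure_callback=slack_failure_callback on a task or in default_args."""
    body = json.dumps(build_slack_payload(context)).encode("utf-8")
    request = urllib.request.Request(
        SLACK_WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Local check of the message only (no network call).
fake_ti = SimpleNamespace(dag_id="example_dag", task_id="load_data", log_url="http://localhost:8080/log")
print(build_slack_payload({"task_instance": fake_ti})["text"])
```

Building the payload in its own function lets you preview the message without sending anything to Slack.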

3. SLAs and Timeouts

SLAs notify you if your tasks take longer than expected to complete. They're a built-in Airflow feature that gives you even more control over what's happening in your DAGs: not as common or well known, but super helpful! An SLA is purely a notification mechanism, so it lets you know about the delay but doesn't change anything in the DAG run.

They're perfect if you want to find out when a task is taking too long.

You define the time after which you get notified. See an email notification example above.

Timeouts, on the other hand, fail the task if it runs longer than the defined period of time.

They're perfect if you want to kill a task that's taking too long.

If you suspect that, for some reason, your DAG might wait forever and hold up other important work, this is the perfect solution: a timeout will intervene automatically.

A reminder:

- SLAs are relative to the DAG execution date, not the task start time.

- SLAs are only evaluated on scheduled DAG runs. Manually triggered runs won't have their SLAs evaluated.
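The difference between the two mechanisms is easiest to see side by side. This is a simplified model, not Airflow's scheduler logic: it assumes the SLA deadline is counted from the DAG run's reference time (the execution date, per the reminder above) and only produces a notification, while the timeout is counted from when the task itself started and fails the task. On a real operator these correspond to the sla and execution_timeout parameters; evaluate and its argument names are hypothetical.

```python
from datetime import datetime, timedelta


def evaluate(run_reference_time, task_start, now, sla=None, execution_timeout=None):
    """Return the actions a simplified SLA/timeout model would take at `now`."""
    actions = []
    # SLA: counted from the DAG run's reference time; notification only.
    if sla is not None and now > run_reference_time + sla:
        actions.append("notify_sla_miss")
    # Timeout: counted from when the task started running; the task is failed.
    if execution_timeout is not None and now > task_start + execution_timeout:
        actions.append("fail_task")
    return actions


run_time = datetime(2023, 1, 1, 0, 0)
task_start = datetime(2023, 1, 1, 0, 50)  # the task started late, e.g. stuck behind other work

# 20 minutes into the task: SLA already missed, timeout not yet reached.
print(evaluate(run_time, task_start, datetime(2023, 1, 1, 1, 10),
               sla=timedelta(hours=1), execution_timeout=timedelta(minutes=30)))
# → ['notify_sla_miss']

# 35 minutes into the task: both fire.
print(evaluate(run_time, task_start, datetime(2023, 1, 1, 1, 25),
               sla=timedelta(hours=1), execution_timeout=timedelta(minutes=30)))
# → ['notify_sla_miss', 'fail_task']
```

Note how the SLA can be missed even though the task itself has only run for 20 minutes: because it's measured from the DAG run rather than the task start, a late start counts against it.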

Live Demo

The live demo begins at 17:04 in the webinar video above.

What was covered:

- Code examples of setting up email notifications and exceptions

- Code examples of SLAs in a DAG

- Checking missed SLAs in the Airflow UI

- Code examples of SLAs on the task level - different SLAs for different tasks

- Data quality use case - how to get notified when there’s something wrong with the data

- Validation tasks - running a query against any database and passing or failing that task based on the results
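A validation task of this kind boils down to "run a query, raise if the result is wrong": in Airflow, any exception raised inside a task marks it as failed, which in turn fires whatever failure notifications you've configured. The sketch below uses an in-memory SQLite database so it runs anywhere; the orders table and the check_no_null_ids helper are hypothetical, and in a DAG the same function could run inside a Python task against your real database.

```python
import sqlite3


def check_no_null_ids(conn, table):
    """Raise (failing the task) if any row in `table` has a NULL id; otherwise return 0."""
    # Table names can't be bound as SQL parameters, so `table` must be trusted input.
    (null_count,) = conn.execute(f"SELECT COUNT(*) FROM {table} WHERE id IS NULL").fetchone()
    if null_count > 0:
        raise ValueError(f"Data quality check failed: {null_count} row(s) in {table} have a NULL id")
    return null_count


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 9.99), (2, 5.00)])
check_no_null_ids(conn, "orders")  # clean data: the check passes

conn.execute("INSERT INTO orders VALUES (NULL, 1.00)")
try:
    check_no_null_ids(conn, "orders")
except ValueError as err:
    print(err)  # in Airflow, letting this propagate would fail the task
```

Airflow's common SQL provider also ships dedicated check operators for this pattern, which are worth comparing against a hand-rolled check like this one.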